Raw input data often contains too many errors, omissions, and inconsistencies to support analysis as-is. That is when data cleaning becomes necessary. So how do you clean up data effectively? In this article, DIGI-TEXX walks through 7 essential steps, from identifying and removing duplicate records and handling missing data to standardizing formats and building a sustainable data quality strategy.

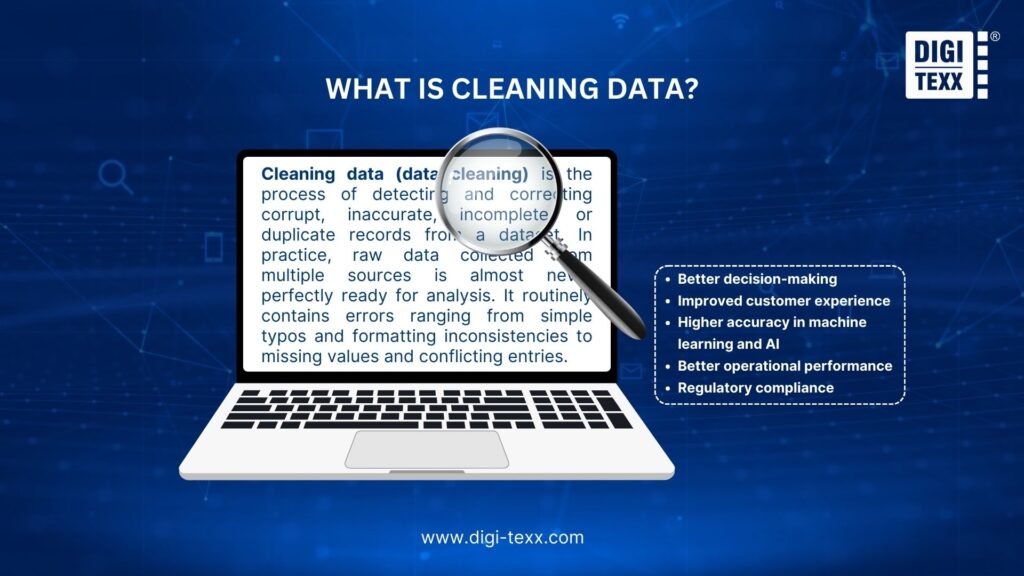

What Is Cleaning Data?

Cleaning data (data cleaning) is the process of detecting and correcting corrupt, inaccurate, incomplete, or duplicate records from a dataset. In practice, raw data collected from multiple sources is almost never perfectly ready for analysis. It routinely contains errors ranging from simple typos and formatting inconsistencies to missing values and conflicting entries.

Data cleaning is the foundational step that transforms this messy raw data into a reliable, structured resource suitable for analysis, reporting, and machine learning.

A widely accepted principle in data analytics is “garbage in, garbage out.” If the data fed into an analytical model is incorrect or inconsistent, the insights and decisions derived from it will be equally flawed, no matter how sophisticated the algorithm. This is why data scientists and analysts regularly spend a significant proportion of their time on data cleaning before they begin any actual analysis.

Why Is Data Cleaning Important?

Cleaning data is a foundational step in any data-driven strategy. Raw data collected from multiple sources almost always contains errors, duplicates, and inconsistencies that silently distort analysis and lead to costly business mistakes. Below are the key reasons why cleaning data is critical for every organization:

- Enables accurate decision-making: Clean data ensures that reports, dashboards, and forecasts reflect reality. Organizations operating on dirty data, with duplicate records, outdated entries, or structural errors, risk making misguided strategic decisions, wasting budgets, and missing market opportunities.

- Boosts team productivity: When data is clean, employees spend less time manually verifying information or correcting errors, and more time on high-value analysis. The cleaner the data, the faster an organization can act on new information and market changes.

- Reduces operational costs: Poor data quality creates measurable financial losses: redundant customer communications, inventory miscounts, billing errors, and more. Proactively cleaning data prevents these downstream failures and the resources required to fix them.

- Supports regulatory compliance: Under regulations such as GDPR and CCPA, organizations are required to keep personal data accurate, current, and free from unnecessary duplicates. Cleaning data helps ensure compliance, reduces the risk of legal penalties, and limits data exposure in the event of a security breach.

- Powers AI and machine learning: Machine learning models rely on high-quality data. Errors like mislabeled categories, missing values, or duplicates can lead to biased or unreliable predictions, making data cleaning a crucial first step in any AI project.

Benefits Of Cleaning Data

Investing time and resources into cleaning data delivers significant returns across the entire organization. The key benefits include:

Better Decision-Making

With accurate and up-to-date information, businesses can analyze trends, forecast future performance, and make strategic decisions with confidence. When data is free from errors and inconsistencies, it enhances the reliability of analytical models and business intelligence tools.

Improved Customer Experience

Clean customer data enables personalized and targeted communication. By eliminating duplicate records and correcting errors, businesses can create more effective marketing campaigns, deliver personalized content, and improve customer satisfaction.

Higher Accuracy In Machine Learning And AI

Clean, accurate data is critical for training machine learning models. Poor training datasets lead to erroneous predictions in deployed models. This is a primary reason data scientists devote such a large share of their time to the data preparation phase.

Better Operational Performance

Clean, high-quality data helps organizations avoid inventory shortages, delivery errors, and other operational problems that result in higher costs, lower revenue, and damaged customer relationships.

Regulatory Compliance

Cleaning data helps ensure that information meets regulatory standards such as GDPR and CCPA. By removing duplicates and correcting incomplete records, businesses avoid using data that violates privacy laws, supporting transparency and accountability.

7 Steps For Effective Data Cleaning

Cleaning data is a systematic process, not a one-time fix. The following 7 steps form a logical sequence, each building on the last, to transform raw, messy data into a reliable foundation for analysis and decision-making.

Step 1. Identify And Remove Duplicate Data Entries

Duplicate records are one of the most common issues in large datasets. They inflate metrics, distort analysis results, and waste storage. The key is identifying records that represent the same real-world entity, even when they differ slightly in spelling or format.

For small datasets, sorting by key fields (such as customer ID or email) and manually reviewing adjacent rows may suffice. For larger datasets, tools using fuzzy matching algorithms can surface near-identical records automatically. Once identified, duplicates should be merged into a single, authoritative record using clear, documented rules for which values to retain.

Systematically eliminating duplicates ensures each entity is represented only once, creating a clean and reliable database for all subsequent data mining activities.
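The matching and merging described above can be sketched in a few lines of Python. This is a minimal illustration, not a production matcher: the record fields (name, email, phone), the 0.9 similarity threshold, and the merge rule (keep the first record's values and fill its blanks from the duplicate) are all assumptions made for the example.

```python
from difflib import SequenceMatcher


def normalize(text):
    """Lowercase and collapse whitespace so trivial differences do not hide duplicates."""
    return " ".join(text.lower().split())


def is_duplicate(a, b, threshold=0.9):
    """Treat two records as one entity if emails match or names are near-identical.

    The threshold is an illustrative assumption and should be tuned on real data.
    """
    if normalize(a["email"]) == normalize(b["email"]):
        return True
    ratio = SequenceMatcher(None, normalize(a["name"]), normalize(b["name"])).ratio()
    return ratio >= threshold


def merge(primary, other):
    """Assumed merge rule: keep the primary record's values, fill blanks from the other."""
    return {key: primary[key] or other.get(key, "") for key in primary}


def deduplicate(records):
    """Compare each record with the records kept so far and merge when they match."""
    unique = []
    for record in records:
        for i, kept in enumerate(unique):
            if is_duplicate(kept, record):
                unique[i] = merge(kept, record)
                break
        else:
            unique.append(dict(record))
    return unique


customers = [
    {"name": "Jane Doe", "email": "jane@example.com", "phone": ""},
    {"name": "jane  doe", "email": "JANE@example.com", "phone": "555-0100"},
    {"name": "John Smith", "email": "john@example.com", "phone": "555-0101"},
    {"name": "Jon Smith", "email": "j.smith@example.com", "phone": ""},
]

deduped = deduplicate(customers)
for row in deduped:
    print(row)
```

Fuzzy name matching on its own can merge two different people with similar names, so in practice borderline matches are usually routed to manual review rather than merged automatically.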

Step 2. Correct Structural Errors In Data

Structural errors are errors related to the way data is formatted. They are generally not as obvious as duplicate or missing data, but can still cause major problems for data analysis and data automation.

Common structural errors include:

- Inconsistent class or category names: For example, the same “Marital Status” column may contain both “Single” and “Unmarried”. A program will treat these as two completely different categories.

- Typographical errors and formatting inconsistencies: “US”, “us”, and “United States” may all refer to the same location but are treated as separate values.

- Non-standard naming conventions: Fields or values may be named arbitrarily, making the data difficult to recognize and use.

To fully address these errors, a rigorous testing and standardization process is required. The first step is to review the overall data structure, checking the values in categorical columns for inconsistencies. Data cleaning tools can help automate this process by listing all variations and their frequency of occurrence.

Once errors have been identified, the next step is to apply rules to correct them:

- Standardize the data representation: Convert all text data to the same format (e.g., lowercase or uppercase).

- Map inconsistent values to a standard term: For example, all variations of “Single” would be converted to a single value.

- Correct common spelling errors: Use spell checkers or fuzzy matching algorithms to identify and correct typos.
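As a rough sketch of this review-then-correct workflow, the snippet below first counts every variation in a categorical column and then maps the variants to one standard term. The CANONICAL mapping and the sample values are hypothetical; a real mapping would be built from the variations actually found in the data.

```python
from collections import Counter

# Hypothetical mapping from cleaned-up variants to one standard term.
CANONICAL = {
    "single": "Single",
    "unmarried": "Single",
    "married": "Married",
}


def list_variations(values):
    """Count each distinct raw value so inconsistencies are easy to spot."""
    return Counter(values)


def standardize(value):
    """Trim and lowercase the value, then map it to the standard term.

    Values with no mapping are returned trimmed but otherwise unchanged,
    so they can be reviewed instead of being silently altered.
    """
    key = value.strip().lower()
    return CANONICAL.get(key, value.strip())


marital_status = ["Single", "single ", "Unmarried", "Married", "MARRIED", "Divorced"]

print(list_variations(marital_status))          # review step: every variant and its frequency
cleaned = [standardize(v) for v in marital_status]
print(Counter(cleaned))                         # after correction: one term per category
```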

Step 3. Handle Missing Data Effectively

Missing data or “null” values are extremely common in data analysis and can skew the final results. There are three ways to handle missing data:

- Delete: The simplest method is to delete records or entire columns containing missing values. Use this method with caution, as deleting too much data can cause loss of information and skew the output.

- Imputation: A popular method is to fill in missing values with estimates. There are two broad approaches:

  - Simple imputation: Use the mean, median, or mode of the column. This method is quick and easy to implement, but it can reduce variability in the data.

  - Advanced imputation: Use algorithms like K-NN (filling in information based on similar records) or machine learning models to get more accurate results, at the cost of more time and computation.

- Retain: In some cases, a missing value is legitimate (for example, a user without a second phone number has nothing to enter in the Phone Number 2 field). In such situations, it is best to either keep the value as “null” or create a specific data category such as “No Data”.
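The three options can be illustrated with plain Python. This is a minimal sketch covering deletion, simple imputation, and retention only (advanced methods such as K-NN are left out); the sample rows and field names are invented for the example.

```python
from statistics import median, mode

# Hypothetical records; None marks a missing value.
rows = [
    {"age": 34, "city": "Springfield", "phone_2": None},
    {"age": None, "city": "Springfield", "phone_2": "555-0102"},
    {"age": 29, "city": None, "phone_2": None},
    {"age": 41, "city": "Riverton", "phone_2": None},
]


def drop_missing(rows, field):
    """Delete: remove every row where the field is missing."""
    return [r for r in rows if r[field] is not None]


def impute(rows, field, strategy):
    """Simple imputation: fill gaps with the median or mode of the observed values."""
    observed = [r[field] for r in rows if r[field] is not None]
    fill = median(observed) if strategy == "median" else mode(observed)
    return [{**r, field: fill if r[field] is None else r[field]} for r in rows]


def retain_as_category(rows, field, label="No Data"):
    """Retain: keep the row and make the absence explicit."""
    return [{**r, field: label if r[field] is None else r[field]} for r in rows]


without_gaps = drop_missing(rows, "age")
filled = impute(impute(rows, "age", "median"), "city", "mode")
labeled = retain_as_category(rows, "phone_2")

print(len(without_gaps), "rows remain after deletion")
print(filled)
print(labeled)
```

Numeric fields are filled with the median here because it is less affected by extreme values than the mean; categorical fields use the mode.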

Step 4. Standardize Data Formats Across Datasets

When data is collected from multiple sources, inconsistent formats (such as dates or units of measurement) are inevitable, and they cause discrepancies once the data is combined for analysis. A standardization step is therefore needed, which includes:

- Data type standardization: Ensure that each column has the appropriate data type (e.g., number, text, date).

- Unit standardization: Convert all measurement values to a single unit (e.g., all weights to pounds or all lengths to inches).

- Text standardization: Apply consistent casing and spelling, remove extra spaces, and apply formatting rules.

To do this, agree on a common set of rules before analysis begins, and use an automated script to apply those rules to the entire dataset.

Standardization not only helps analytical algorithms work accurately, but also makes it easier for people to combine, compare, and report on the data.
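An automated script of this kind might look like the following sketch. The accepted date formats, the choice of pounds as the target unit, and the function names are assumptions for illustration; note in particular that a value like 05/03/2024 is ambiguous, and this example assumes day-first order.

```python
from datetime import datetime

# Assumed input formats, tried in order. Day-first is an assumption for slash dates.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%d.%m.%Y")
KG_PER_LB = 0.45359237  # exact definition of the international pound


def parse_date(text):
    """Convert a date string in any accepted format to ISO 8601 (YYYY-MM-DD)."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"Unrecognized date format: {text!r}")


def to_pounds(value, unit):
    """Convert a weight to pounds so every row uses the same unit."""
    unit = unit.strip().lower()
    if unit in ("lb", "lbs"):
        return float(value)
    if unit == "kg":
        return float(value) / KG_PER_LB
    raise ValueError(f"Unsupported unit: {unit!r}")


def clean_text(text):
    """Remove leading, trailing, and repeated internal whitespace."""
    return " ".join(text.split())


print(parse_date("05/03/2024"))
print(parse_date(" 2024-03-05 "))
print(round(to_pounds("10", "kg"), 2))
print(clean_text("  Ho   Chi  Minh  City "))
```

Raising an error on unrecognized input, rather than guessing, keeps bad values visible so the rule set can be extended deliberately.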

Step 5. Validate Data Accuracy And Consistency

After dealing with duplicate data, missing data, and formatting errors, the next step is data validation. Data validation is the process of checking whether data is logical, accurate, and consistent in the context in which it was collected. This step helps detect more subtle errors that may have been missed by the previous steps.

Data validation includes several different types of checks:

- Range Checks: This step ensures that numeric values fall within a reasonable range. For example, a person’s age cannot be negative or greater than 150. Percentages must be between 0 and 100. Setting up range rules for each field helps quickly detect data entry errors or system errors.

- Constraint Checks: Data must adhere to logical and business rules. For example, a delivery date cannot be earlier than the order date. A customer classified as “Single” cannot have a spouse.

- Cross-field Checks: Check the logical relationships between different columns in the same data record. For example, the sum of the item details in an order must equal the total value of that order. City, state, and zip code must match.

- Uniqueness Checks: Ensure that values in an identifier column (such as customer ID, order number) are unique and not duplicated.
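The four kinds of checks can be expressed as simple rules applied to each record. The sketch below uses a hypothetical order structure (order_id, discount_pct, order_date, delivery_date, item_amounts, total) and collects problems instead of stopping at the first one.

```python
from datetime import date


def validate(orders):
    """Return a list of (order_id, problem) tuples for every rule that fails."""
    problems = []
    seen_ids = set()
    for order in orders:
        order_id = order["order_id"]

        # Uniqueness check: an identifier may appear only once.
        if order_id in seen_ids:
            problems.append((order_id, "duplicate order_id"))
        seen_ids.add(order_id)

        # Range check: a percentage must lie between 0 and 100.
        if not 0 <= order["discount_pct"] <= 100:
            problems.append((order_id, "discount_pct out of range"))

        # Constraint check: delivery cannot precede the order.
        if order["delivery_date"] < order["order_date"]:
            problems.append((order_id, "delivery before order"))

        # Cross-field check: line items must add up to the order total
        # (a small tolerance absorbs floating-point rounding).
        if abs(sum(order["item_amounts"]) - order["total"]) > 0.01:
            problems.append((order_id, "items do not sum to total"))
    return problems


orders = [
    {"order_id": "A1", "discount_pct": 10, "order_date": date(2024, 3, 1),
     "delivery_date": date(2024, 3, 4), "item_amounts": [20.0, 5.0], "total": 25.0},
    {"order_id": "A2", "discount_pct": 120, "order_date": date(2024, 3, 2),
     "delivery_date": date(2024, 3, 5), "item_amounts": [8.0], "total": 8.0},
    {"order_id": "A3", "discount_pct": 0, "order_date": date(2024, 3, 10),
     "delivery_date": date(2024, 3, 8), "item_amounts": [10.0], "total": 10.0},
    {"order_id": "A1", "discount_pct": 5, "order_date": date(2024, 3, 3),
     "delivery_date": date(2024, 3, 6), "item_amounts": [7.5, 2.5], "total": 12.0},
]

problems = validate(orders)
for order_id, problem in problems:
    print(order_id, "->", problem)
```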

Step 6. Identify And Manage Outliers

Outliers are data points whose values are extremely high or low compared to the other values in the dataset. Ignoring them can lead to skewed statistical results and negatively impact the performance of machine learning models.

Outliers can be caused by:

- Data entry errors: For example, entering an age of 200 instead of 20.

- Measurement errors: A faulty sensor can produce unusual values.

- Real but rare events: For example, a large purchase during a sales event.

It is important to distinguish between an outlier that is an error and one that is a real but rare event, because each calls for different handling.

Common methods to identify outliers include:

Visualization method:

- Box Plot: This is a very effective tool to identify outliers. Any data point that falls outside the “whiskers” of the box plot is considered an outlier.

- Scatter Plot: Helps detect points that are far from the main data cluster in the relationship between two variables.

Statistical method:

- Interquartile Range (IQR): A common rule is to consider values that fall outside the range [Q1−1.5×IQR, Q3+1.5×IQR] as outliers, where Q1 is the first quartile, Q3 is the third quartile, and IQR = Q3−Q1.

- Z-score: Calculates how many standard deviations a data point is away from the mean. Points with a large Z-score (usually > 3 or < -3) can be considered outliers.
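Both statistical rules are short enough to sketch with Python's standard library. The sample ages are invented, and the quartiles come from statistics.quantiles with its default method; other tools may compute quartiles slightly differently, so the exact fences can vary.

```python
from statistics import mean, quantiles, stdev


def iqr_outliers(values, k=1.5):
    """Flag values outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]


def zscore_outliers(values, threshold=3.0):
    """Flag values more than `threshold` sample standard deviations from the mean."""
    mu, sigma = mean(values), stdev(values)
    return [v for v in values if abs((v - mu) / sigma) > threshold]


# Hypothetical ages: 20 through 38, plus one data entry error.
ages = list(range(20, 39)) + [200]

print(iqr_outliers(ages))     # [200]
print(zscore_outliers(ages))  # [200]
```

One caveat on the Z-score rule: the outlier itself inflates the mean and standard deviation, so in very small samples no point can reach a Z-score of 3. The IQR rule does not have this limitation.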

Once outliers have been identified, there are a few options for appropriate management:

- Removal: If you are certain that the outlier is an error and cannot be corrected, remove it. However, as with dealing with missing data, be careful not to lose important information.

- Correction: If possible, try to find the cause of the error and correct the value. For example, go back to the original data source to check whether the value was entered incorrectly.

- Transformation: Applying mathematical transformations such as logarithms can reduce the impact of extremely large outliers that affect the entire data set.

- Robust methods: Some statistical methods (such as using the median instead of the mean, or robust regression models) are less sensitive to outliers. When you use them, you can keep the outlier values.
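To make the transformation and robust options concrete, the sketch below compares the mean and median of a small invented list of purchase amounts and applies a log transform. The use of log1p (the logarithm of 1 + x, which also handles zero) is a choice made for this example.

```python
import math
from statistics import mean, median

# Hypothetical purchase amounts with one real but rare large order.
purchases = [12.0, 15.0, 18.0, 20.0, 22.0, 25.0, 5000.0]

# Robust statistic: the median barely moves, while the mean is dominated by the outlier.
print("mean:  ", round(mean(purchases), 2))
print("median:", median(purchases))

# Transformation: the logarithm compresses large values while preserving their order.
log_purchases = [math.log1p(v) for v in purchases]

raw_spread = max(purchases) / median(purchases)
log_spread = max(log_purchases) / median(log_purchases)
print("largest value relative to median, raw:", round(raw_spread, 1))
print("largest value relative to median, log:", round(log_spread, 1))
```

On the raw scale the largest purchase is 250 times the median; after the transform it is less than 3 times the median, so it no longer dominates summary statistics.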

Step 7. Build A Sustainable Data Quality Strategy

Data cleaning should not be a one-off project. New data flows in continuously, and quality issues will re-emerge without ongoing governance. A sustainable strategy includes:

- Define data quality standards and rules: Organizations need to clearly define what “high-quality data” means to them. This includes establishing specific standards for each of the important data quality attributes: completeness, uniqueness, timeliness, validity, accuracy, and consistency.

- Establish a data governance process: Define ownership and responsibility for data. Clearly specify who the data owner is for each dataset; these people are ultimately responsible for the quality of that data. Appoint “data stewards” who monitor and maintain data quality on a day-to-day basis.

- Integrate data quality into the data lifecycle: Instead of cleaning data at the end of the process, apply the principle of “prevention is better than cure”. Implement quality checks at the point of data entry. For example, web forms should have validation rules to prevent users from entering invalid data.

- Educate employees and raise awareness: Every employee who touches data, from data entry clerks to data analysts, needs to be educated on the importance of data quality and their role in maintaining it. Create a data-centric culture where everyone understands that high-quality data is the foundation for smart business decisions.

- Evaluate and continuously improve: A data quality strategy is not static. Standards, rules, and processes need to be reviewed regularly to ensure they remain relevant to changing business needs.
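Quality standards are easier to monitor when they are written as measurable rules. The sketch below scores two of the attributes named above, completeness and uniqueness, against minimum thresholds. The field names, thresholds, and the STANDARDS structure are hypothetical; each organization would define its own.

```python
def completeness(rows, field):
    """Share of rows in which the field is present and non-empty."""
    filled = sum(1 for r in rows if r.get(field) not in (None, ""))
    return filled / len(rows)


def uniqueness(rows, field):
    """Share of non-empty values in the field that are distinct."""
    values = [r[field] for r in rows if r.get(field) not in (None, "")]
    return len(set(values)) / len(values) if values else 1.0


METRICS = {"completeness": completeness, "uniqueness": uniqueness}

# Hypothetical standards: (field, metric, minimum acceptable score).
STANDARDS = [
    ("customer_id", "uniqueness", 1.0),
    ("customer_id", "completeness", 1.0),
    ("email", "completeness", 0.95),
]


def quality_report(rows):
    """Score every standard and record whether it passed."""
    report = []
    for field, metric, minimum in STANDARDS:
        score = METRICS[metric](rows, field)
        report.append({"field": field, "metric": metric,
                       "score": score, "passed": score >= minimum})
    return report


customers = [
    {"customer_id": "C1", "email": "a@example.com"},
    {"customer_id": "C2", "email": ""},
    {"customer_id": "C2", "email": "b@example.com"},
    {"customer_id": "C3", "email": "c@example.com"},
]

report = quality_report(customers)
for line in report:
    print(line)
```

Running a report like this on a schedule gives data stewards a simple way to see when quality starts to slip.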

Tools Used For Data Cleansing & Data Cleaning

Selecting the right tool for data cleaning depends on the scale and complexity of the data and on the technical expertise of the organization’s data team. Below is an overview of the most widely used tools:

- OpenRefine: This is a popular open-source tool in the data analysis community. OpenRefine has an intuitive interface that makes it easy for users to work with data, identifying problems such as structural errors, inconsistencies, and duplicate data.

- Python libraries: Python is one of the most widely used languages for data processing, and its libraries are popular tools for data cleaning:

  - Pandas: Provides the flexible DataFrame data structure and a range of functions for data manipulation, including handling missing values (.fillna(), .dropna()), removing duplicates (.drop_duplicates()), normalizing data types, and applying custom functions.

  - NumPy: Processes large, multidimensional arrays and matrices and provides a set of mathematical functions for efficient calculation, which is very useful in identifying and handling outliers.

- R language: Like Python, R is a widely used programming language and statistical computing environment. Packages such as dplyr, tidyr, and data.table are commonly used for data cleaning.

- Commercial ETL tools: Platforms like Talend, Informatica, and Microsoft SQL Server Integration Services (SSIS) provide ETL (extract, transform, load) solutions for data processing. SSIS, for example, can apply transformations and cleaning rules and then load the cleaned data into a data warehouse.

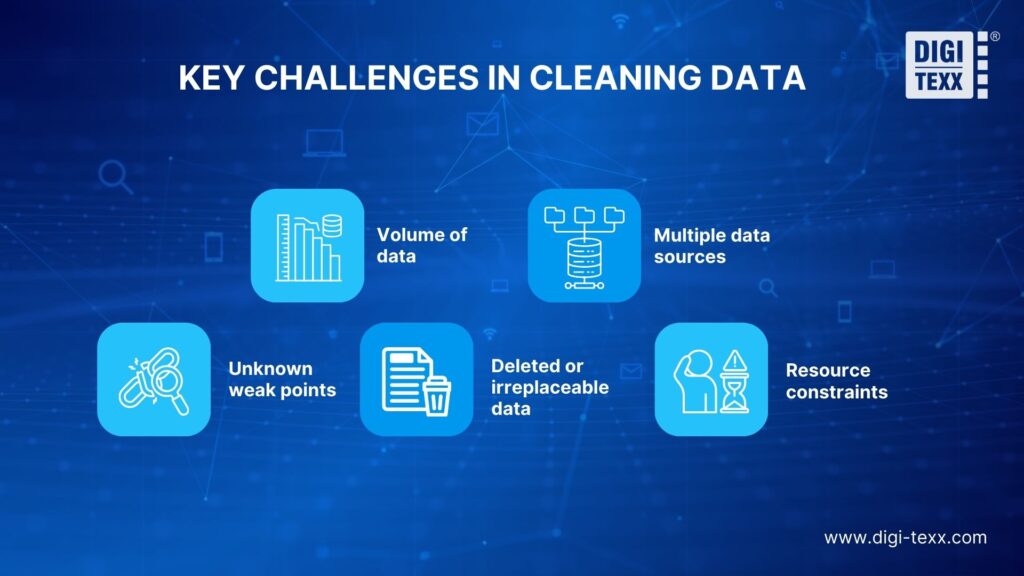

Key Challenges In Cleaning Data

Despite its importance, data cleaning is far from straightforward. Organizations frequently encounter a range of obstacles that make it time-consuming, resource-intensive, and complex:

- Volume of data: The sheer amount of data that needs to be cleaned can be overwhelming. Large datasets require significant time and resources to clean thoroughly, and as data volumes continue to grow exponentially, this challenge only intensifies.

- Multiple data sources: Many businesses collect data from a multitude of sources, such as CRM systems, third-party providers, e-commerce platforms, IoT sensors, and more. Each source may follow different structures, formats, and quality standards, making it difficult to apply uniform cleaning techniques across all datasets.

- Unknown weak points: Your data may contain errors without you knowing how or where they occurred in the data pipeline. Hidden errors that are not immediately apparent can compromise the integrity of analysis and remain undetected until significant damage is done.

- Deleted or irreplaceable data: Information needed to fill data gaps may have been deleted from the data warehouse and cannot be recovered. This makes imputation impossible for certain fields and forces analysts to either remove affected records or proceed with incomplete data.

- Resource constraints: Cleaning data is not just technically demanding, it is also costly in terms of human time and computational resources. Organizations without dedicated data engineering teams often struggle to perform cleaning at the frequency and depth their data quality requires.

FAQs About Cleaning Data

What Is The Difference Between Cleaning And Cleansing Data?

The two terms are often used interchangeably. When a distinction is drawn, data cleaning typically focuses on automatically correcting common issues in a dataset, such as typographical mistakes, duplicate records, or missing values. Data cleansing, on the other hand, involves a more thorough process that aims to verify the accuracy, consistency, and completeness of the data, often including manual review or validation.

In simple terms, cleaning data removes obvious problems, while cleansing data ensures the data is trustworthy and ready for reliable analysis. When used together, these processes help transform raw data into high-quality information that organizations can confidently rely on.

What Happens If Data Is Not Cleaned?

The consequences of failing to clean data can be significant and wide-ranging:

- Poor decision-making: Analyses based on dirty data lead to false conclusions and misguided business strategies. A sales report inflated by duplicate entries, for example, could cause leadership to make overconfident growth projections.

- Wasted marketing and sales effort: A CRM database filled with incorrect or outdated customer information leads sales teams to target the wrong prospects, wasting time, budget, and opportunity.

- Reduced machine learning model accuracy: AI and machine learning models trained on poor-quality data will produce unreliable predictions, potentially causing serious harm in high-stakes applications such as healthcare or finance.

- Operational inefficiencies: Inventory shortages, delivery errors, billing mistakes, and other costly operational problems can all result from relying on inaccurate data in business processes.

- Compliance risk: In regulated industries, relying on incorrect or incomplete data can result in legal penalties and compliance failures.

Mastering data cleaning is no longer optional; it is a requirement for any organization that wants to fully exploit the potential of its data. By following the 7 essential steps (removing duplicate data, fixing structural errors, handling missing data, standardizing formats, validating accuracy and consistency, managing outliers, and building a solid data quality strategy) and using the right tools, businesses can turn raw, messy data into a clean, consistent, and trustworthy source of information. If you are looking for a professional partner to help you optimize and clean your data sources, contact DIGI-TEXX today for a consultation on leading data cleaning and processing solutions.

If you have any questions or would like expert advice on data analytics services, please feel free to contact us using the information below.

DIGI-TEXX Contact Information:

🌐 Website: https://digi-texx.com/

📞 Hotline: +84 28 3715 5325

✉️ Email: [email protected]

🏢 Address:

- Headquarters: Anna Building, QTSC, Trung My Tay Ward

- Office 1: German House, 33 Le Duan, Saigon Ward

- Office 2: DIGI-TEXX Building, 477-479 An Duong Vuong, Binh Phu Ward

- Office 3: Innovation Solution Center, ISC Hau Giang, 198 19 Thang 8 street, Vi Tan Ward
