Data parsing software automates the process of converting raw, unorganized data into a structured format that can be easily analyzed and integrated with business intelligence tools. In this article, DIGI-TEXX will help you explore all the essential information about data parsing. Specifically, you’ll learn what data parsing software is, its importance, and the most effective methods to approach it.

What Is Data Parsing?

Data parsing involves extracting pertinent information from unstructured data sources and converting it into a structured format that is easier to analyze. A data parser is a software application or tool designed to automate this process.

Data parsing software reads and interprets data in a specific format, extracts the relevant information, and transforms it into a more usable format. Many parsers are available, including Beautiful Soup and lxml (Python libraries for HTML and XML) and csvkit (a command-line toolkit for CSV files). These tools help process large volumes of data quickly and efficiently.
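
To make the definition concrete, here is a minimal sketch of a parser written with only the Python standard library. It assumes a hypothetical input made of "key: value" lines; the function name and the sample fields are illustrative and do not come from any specific product.

```python
import json

def parse_key_value_text(raw: str) -> dict:
    """Turn 'key: value' lines into a dictionary, skipping lines without a colon."""
    record = {}
    for line in raw.splitlines():
        if ":" not in line:
            continue  # not a key/value line
        key, value = line.split(":", 1)
        # Normalize the key so it is easy to use as a field name.
        record[key.strip().lower().replace(" ", "_")] = value.strip()
    return record

# Hypothetical raw input, for illustration only.
raw_text = """
Customer Name: Jane Doe
Order ID: 10482
Total: 59.90 USD
"""

structured = parse_key_value_text(raw_text)
print(json.dumps(structured, indent=2))
```

The output is a JSON object with the fields customer_name, order_id, and total, which downstream tools can consume directly.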

Best Data Parsing Software Solutions

Below are the best data parsing software solutions available today, selected based on ease of use, automation capabilities, data accuracy, and scalability. Each tool serves different business needs, from no-code web scraping to advanced data extraction at scale.

ParseHub – Best for Web Scraping

ParseHub is a highly efficient web scraping tool that enables businesses to extract structured data from websites without requiring programming knowledge. It features an intuitive point-and-click interface, allowing users to select specific data elements from web pages effortlessly.

Key Features:

- Supports multiple data formats, including CSV, JSON, and Excel.

- Uses advanced machine learning to detect and extract data even from dynamic and JavaScript-heavy websites.

- Provides cloud-based automation, enabling scheduled scraping tasks without the need for manual execution.

- Offers API integration, allowing businesses to connect extracted data directly to their systems.

Ideal For:

- Businesses that need to extract data from multiple web sources for market research, price monitoring, and lead generation.

- Users without technical expertise who require a no-code solution for data extraction.

Talend – Best for Enterprise Data Integration

Talend is a robust data integration and parsing tool designed to help enterprises process and manage large-scale data operations efficiently. It supports both cloud-based and on-premises deployment, ensuring smooth data flow across various business applications.

Key Features:

- Supports multiple data sources, including SQL databases, cloud platforms, and APIs.

- Provides data cleansing and transformation tools to enhance data accuracy and consistency.

- Offers built-in connectors for seamless integration with popular BI tools and data platforms such as Tableau, Power BI, and Snowflake.

- Features real-time data processing capabilities for immediate business insights.

Ideal For:

- Enterprises that require comprehensive data integration solutions to manage complex workflows.

- Organizations that require secure and scalable data handling across multiple departments.

Diffbot – Best AI-Powered Data Extraction

Diffbot stands out as a leading AI-powered data extraction tool, specializing in transforming unstructured web content into structured and actionable insights. Using advanced machine learning and computer vision, Diffbot can analyze and categorize vast amounts of online data with high accuracy.

Key Features:

- Uses natural language processing (NLP) and computer vision to extract meaningful information from articles, blogs, and e-commerce sites.

- Automatically classifies and structures data into predefined categories such as products, organizations, and people.

- Supports API-based data extraction, allowing seamless integration into enterprise systems.

- Continuously improves parsing accuracy using AI-driven learning models.

Ideal For:

- Businesses involved in market intelligence, competitor analysis, and web monitoring.

- Organizations that require automated large-scale data extraction with minimal human intervention.

Octoparse – Best Data Parsing Software for Non-Technical Users

Octoparse is a user-friendly web scraping and data parsing software tool that allows individuals and businesses to extract data without any programming skills. It provides a drag-and-drop interface that makes data extraction quick and easy for non-technical users.

Key Features:

- No-code web scraping with a visual workflow builder.

- Supports cloud-based data scraping, allowing automated extraction even when the user is offline.

- Handles pagination, login authentication, and CAPTCHA solving for more advanced scraping tasks.

- Exports parsed data into various formats such as Excel, JSON, and CSV.

Ideal For:

- Small businesses and startups that require a simple data extraction tool without technical complexities.

- Marketers, researchers, and e-commerce businesses that need quick access to online data without hiring developers.

Scrapy – Best Open-Source Solution

Scrapy is an open-source web scraping framework written in Python, designed for developers and businesses looking for a customizable and scalable data extraction solution. It provides full control over data parsing workflows and can be integrated with other Python-based data processing libraries.

Key Features:

- Highly customizable, allowing users to define specific parsing rules and data extraction patterns.

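- Handles complex site structures and AJAX endpoints through custom requests; pages that depend on JavaScript rendering typically need a browser integration such as scrapy-playwright or Splash.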

- Integrates with data storage systems like MongoDB, MySQL, and Elasticsearch.

- Offers robust performance and scalability, making it suitable for large-scale web scraping projects.

Ideal For:

- Developers and data engineers who require full control over web scraping projects.

- Companies that need a free and open-source tool for large-scale, automated data extraction.

How to Choose the Right Data Parsing Tool?

Select a data parsing tool that suits your data format, weighing performance, accuracy, compatibility, and ease of use. Whichever tool you choose, the following practices help you get reliable results:

- Test the Parser: Test the parser on various data types to ensure accurate extraction and identify errors or performance issues.

- Handle Errors Gracefully: Implement error handling to manage inconsistencies, corruption, or incorrect formats, using exception handling to log errors and provide user feedback.

- Optimize Performance: Enhance efficiency for large data volumes through caching, multithreading, and minimizing I/O operations.

- Maintain Flexibility: Design the parser to adapt to changing data formats and business needs by using a modular design and configuration files.

- Document the Process: Keep thorough documentation of the parsing process, including data formats, tools used, testing results, error handling strategies, and performance optimizations.
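
As an illustration of the "Handle Errors Gracefully" practice above, the sketch below parses CSV rows and sets aside the ones that fail instead of aborting the whole run. The column names (order_id, amount) and the sample data are assumptions made for the example.

```python
import csv
import io
import logging

logger = logging.getLogger("parser")

def parse_orders(csv_text: str):
    """Parse order rows, collecting bad rows instead of aborting the whole run."""
    good, bad = [], []
    reader = csv.DictReader(io.StringIO(csv_text))
    for line_no, row in enumerate(reader, start=2):  # line 1 is the header
        try:
            good.append({
                "order_id": int(row["order_id"]),
                "amount": float(row["amount"]),
            })
        except (KeyError, TypeError, ValueError) as exc:
            # Log the problem and keep the row for later review.
            logger.warning("Skipping line %d: %s", line_no, exc)
            bad.append((line_no, row))
    return good, bad

# Hypothetical input with one malformed amount.
sample = "order_id,amount\n1,19.99\n2,not-a-number\n3,5\n"
good_rows, bad_rows = parse_orders(sample)
print(len(good_rows), "parsed,", len(bad_rows), "rejected")
```

Returning the rejected rows together with their line numbers makes it possible to report them to the user or reprocess them after the source is fixed.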

Why Is Data Parsing Software Important?

Dealing With Large Amounts of Data

Parsing data consumes time and system resources, which can lead to performance issues, particularly with Big Data. For this reason, you may need to parallelize your data processes to parse multiple input documents at once and save time. However, this approach increases resource consumption and overall complexity. Therefore, parsing large datasets is challenging and necessitates advanced tools.
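
A minimal sketch of parsing several documents at once is shown below, using Python's standard concurrent.futures module. The documents and the parse_document helper are hypothetical. Threads are used here for simplicity; for CPU-heavy parsing in CPython, a ProcessPoolExecutor is usually the better fit because of the global interpreter lock.

```python
import json
from concurrent.futures import ThreadPoolExecutor

def parse_document(raw: str) -> dict:
    """Parse one JSON document and keep only the fields of interest."""
    data = json.loads(raw)
    return {"id": data["id"], "n_items": len(data.get("items", []))}

# Hypothetical input documents, for illustration only.
documents = [
    '{"id": 1, "items": ["a", "b"]}',
    '{"id": 2, "items": []}',
    '{"id": 3}',
]

# executor.map returns results in the same order as the inputs.
with ThreadPoolExecutor(max_workers=4) as executor:
    results = list(executor.map(parse_document, documents))

print(results)
```

The trade-off described above still applies: more workers mean more memory and CPU in use at the same time, so the worker count is worth tuning against the available resources.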

Handling Errors and Inconsistencies

The input for data parsing usually consists of raw, unstructured, or semi-structured data, which often contains errors and inconsistencies. HTML documents frequently exhibit these issues because modern browsers render pages even when they contain syntax errors, so the mistakes go unnoticed. Consequently, your HTML may have unclosed tags, content that is invalid according to W3C standards, or unescaped special characters. To handle this data, you need a tolerant parsing system that addresses these problems automatically.
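
The sketch below shows one way to cope with such markup using Python's built-in html.parser module, which does not raise errors on unclosed tags. The ListItemExtractor class and the sample HTML are assumptions made for the example; dedicated libraries such as Beautiful Soup or lxml offer more complete recovery.

```python
from html.parser import HTMLParser

class ListItemExtractor(HTMLParser):
    """Collect the text of each <li>, even when closing tags are missing."""

    def __init__(self):
        super().__init__(convert_charrefs=True)  # decode entities such as &amp;
        self.items = []
        self._in_item = False

    def handle_starttag(self, tag, attrs):
        if tag == "li":
            self.items.append("")  # a new <li> implicitly ends the previous one
            self._in_item = True

    def handle_endtag(self, tag):
        if tag in ("li", "ul"):
            self._in_item = False

    def handle_data(self, data):
        if self._in_item:
            self.items[-1] += data

# Hypothetical markup with unclosed <li> tags and an HTML entity.
messy_html = "<ul><li>Price: 10 &amp; up<li>In stock\n<li>Ships today</ul>"

extractor = ListItemExtractor()
extractor.feed(messy_html)
extractor.close()
items = [item.strip() for item in extractor.items]
print(items)
```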

Saving Time and Operational Costs

Data parsing software allows you to automate repetitive tasks, saving both time and effort. In addition, converting data into more accessible formats means that your team can understand the information more quickly and carry out their tasks with greater ease.

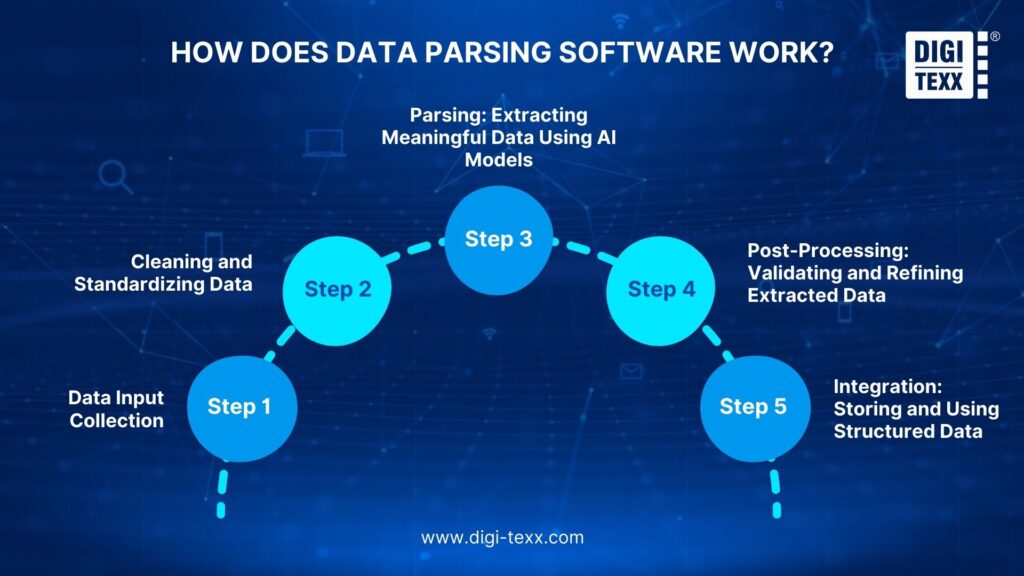

How Does Data Parsing Software Work?

Step 1: Data Input Collection

Data parsing software starts by gathering raw data from various sources, including:

- Documents (TXT, DOCX, PDF)

- JSON (JavaScript Object Notation)

- XML (Extensible Markup Language)

- CSV (Comma-Separated Values)

- SQL databases

- API responses
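
As a rough illustration of input collection, the sketch below reads JSON, CSV, and XML with Python's standard library and brings them into one common shape, a list of dictionaries. The sample payloads and the product, sku, and price names are hypothetical.

```python
import csv
import io
import json
import xml.etree.ElementTree as ET

def from_json(text: str) -> list:
    return json.loads(text)

def from_csv(text: str) -> list:
    return list(csv.DictReader(io.StringIO(text)))

def from_xml(text: str) -> list:
    root = ET.fromstring(text)
    # Each <product> child element becomes one record.
    return [
        {child.tag: child.text for child in product}
        for product in root.findall("product")
    ]

# Hypothetical inputs in three formats, for illustration only.
json_text = '[{"sku": "A1", "price": "9.50"}]'
csv_text = "sku,price\nB2,12.00\n"
xml_text = "<products><product><sku>C3</sku><price>7.25</price></product></products>"

records = from_json(json_text) + from_csv(csv_text) + from_xml(xml_text)
print(records)
```

Converging on a single record shape early means the later cleaning, validation, and integration steps do not need to know which format a record came from.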

Step 2: Cleaning and Standardizing Data

Before AI can begin extracting information, the data needs to be cleaned and structured. Preprocessing plays a crucial role in ensuring consistency and minimizing errors by eliminating noise and irrelevant data, correcting mistakes from OCR misreads, standardizing formats, and providing tokenization and segmentation for natural language processing (NLP) applications.
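
The preprocessing described above can be sketched with a few small helpers. The OCR substitutions (for example, the letter O read in place of zero) and the accepted date layouts are assumptions chosen for the example; real data usually needs rules tuned to its own quirks. Note that a date such as 05/03/2024 is ambiguous, and this sketch assumes day-first order.

```python
import re
from datetime import datetime

# Characters that OCR commonly confuses with digits (an assumed mapping).
OCR_DIGIT_FIXES = str.maketrans({"O": "0", "o": "0", "l": "1", "I": "1"})

def clean_amount(text: str) -> float:
    """Fix common OCR misreads in a numeric field, then convert to float."""
    fixed = text.strip().translate(OCR_DIGIT_FIXES)
    fixed = re.sub(r"[^\d.\-]", "", fixed)  # drop currency symbols, commas, spaces
    return float(fixed)

def clean_date(text: str) -> str:
    """Normalize a few assumed date layouts to ISO 8601 (YYYY-MM-DD)."""
    for layout in ("%Y-%m-%d", "%d/%m/%Y", "%d.%m.%Y"):
        try:
            return datetime.strptime(text.strip(), layout).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"Unrecognized date format: {text!r}")

def clean_text(text: str) -> str:
    """Collapse runs of whitespace and trim the ends."""
    return re.sub(r"\s+", " ", text).strip()

print(clean_amount("$1,2O5.5O"))
print(clean_date("05/03/2024"))
print(clean_text("  ACME   Corp \n Ltd ").split())  # naive whitespace tokenization
```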

Step 3: Parsing: Extracting Meaningful Data Using AI Models

Once the data has been preprocessed, AI models analyze its structure to extract relevant information. This process differs depending on the type of data:

- Text Parsing: NLP techniques identify entities (such as names, addresses, and amounts), relationships, and key phrases within text-heavy data.

- Structured Data Parsing: AI extracts information from formats like tables, JSON, XML, or CSV files, ensuring accurate mapping of data fields.

- Pattern Recognition: Machine learning models recognize specific patterns in documents, such as invoice numbers and product descriptions.
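
For well-defined layouts, pattern recognition does not always need a trained model. The sketch below uses plain regular expressions as a simple rule-based stand-in for the machine learning approach described above. The invoice number format ("INV-" followed by digits) and the amount format are hypothetical.

```python
import re

# Hypothetical layouts: "INV-" followed by digits, and amounts with two decimals.
INVOICE_RE = re.compile(r"\bINV-(\d{4,})\b")
AMOUNT_RE = re.compile(r"(?:USD|\$)\s?(\d{1,3}(?:,\d{3})*\.\d{2})")

def extract_invoice_fields(text: str) -> dict:
    """Pull the first invoice number and amount out of free text."""
    invoice = INVOICE_RE.search(text)
    amount = AMOUNT_RE.search(text)
    return {
        "invoice_number": invoice.group(0) if invoice else None,
        "amount": float(amount.group(1).replace(",", "")) if amount else None,
    }

document = "Invoice INV-20391 issued to Acme Ltd. Total due: $1,250.00 by 30 June."
fields = extract_invoice_fields(document)
print(fields)
```

Rules like these are fast and predictable but brittle when layouts vary, which is where learned models tend to be more useful.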

Step 4: Post-Processing: Validating and Refining Extracted Data

Extracted data undergoes additional refinement to improve accuracy and consistency. It is validated against existing databases, discrepancies such as mismatched totals in financial transactions are flagged, and anomalies are identified. Furthermore, the data is enriched by supplementing parsed information with contextual data from external sources. This process helps make the final dataset reliable, well-structured, and ready for integration.
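
A validation pass of this kind might look like the following sketch, which checks a parsed invoice against a list of known vendors and flags totals that do not match the line items. The record layout, the vendor names, and the tolerance are assumptions made for the example.

```python
def validate_invoice(record: dict, known_vendors: set, tolerance: float = 0.01) -> list:
    """Return a list of human-readable issues; an empty list means the record passed."""
    issues = []
    if record.get("vendor") not in known_vendors:
        issues.append("unknown vendor")
    line_total = sum(
        item["qty"] * item["unit_price"] for item in record.get("lines", [])
    )
    if abs(line_total - record.get("total", 0.0)) > tolerance:
        issues.append(f"total mismatch: lines sum to {line_total:.2f}")
    return issues

# Hypothetical reference data and parsed records.
known_vendors = {"Acme Ltd", "Globex"}
ok_record = {
    "vendor": "Acme Ltd",
    "total": 30.0,
    "lines": [{"qty": 2, "unit_price": 10.0}, {"qty": 1, "unit_price": 10.0}],
}
bad_record = {
    "vendor": "Initech",
    "total": 25.0,
    "lines": [{"qty": 2, "unit_price": 10.0}],
}

print(validate_invoice(ok_record, known_vendors))
print(validate_invoice(bad_record, known_vendors))
```

Returning a list of issues rather than raising on the first problem lets a reviewer see everything that is wrong with a record in one pass.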

Step 5: Integration: Storing and Using Structured Data

Finally, the parsed data is formatted and integrated into business systems, including databases, Enterprise Resource Planning (ERP) systems, Customer Relationship Management (CRM) software, and accounting and financial tools.
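
As a small illustration of the integration step, the sketch below loads parsed records into a database table using Python's built-in sqlite3 module. SQLite, the invoices table, and its columns are stand-ins chosen for the example; an ERP or CRM system would normally be reached through its own connector or API.

```python
import sqlite3

# Hypothetical parsed records ready for storage.
parsed_records = [
    {"invoice_number": "INV-1001", "vendor": "Acme Ltd", "total": 30.0},
    {"invoice_number": "INV-1002", "vendor": "Globex", "total": 125.5},
]

conn = sqlite3.connect(":memory:")  # in-memory database for the demo
conn.execute(
    "CREATE TABLE invoices (invoice_number TEXT PRIMARY KEY, vendor TEXT, total REAL)"
)
# Named placeholders map dictionary keys to columns and avoid SQL injection.
conn.executemany(
    "INSERT INTO invoices VALUES (:invoice_number, :vendor, :total)",
    parsed_records,
)
conn.commit()

rows = conn.execute(
    "SELECT vendor, total FROM invoices ORDER BY total DESC"
).fetchall()
print(rows)
```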

The Future of Data Parsing Software

Key Advancements in AI-Driven Data Parsing

- Enhanced Accuracy: Machine learning algorithms continuously improve parsing accuracy by reducing errors in data extraction and categorization.

- Contextual Understanding: Natural Language Processing (NLP) allows software to understand context, improving semantic parsing and making data extraction more meaningful.

- Automated Data Categorization: AI-powered tools can classify and structure data based on industry-specific requirements, making it easier for businesses to process and analyze information.

- Self-Learning Capabilities: AI models can be retrained on new datasets, helping them adapt to changing data formats with fewer manual adjustments.

As AI technology continues to advance, businesses will benefit from smarter, faster, and more autonomous data parsing solutions, reducing human involvement while improving data quality.

Real-Time Data Processing Advancements

Businesses require real-time insights to make informed decisions. Traditional data parsing solutions often involve batch processing, where data is collected and processed in intervals. However, real-time data parsing software is evolving to support instant data extraction and processing, allowing businesses to respond to trends, customer behaviors, and market changes as they happen.

- Instant Decision-Making: Businesses can analyze real-time data to adjust strategies on the go, improving responsiveness and agility.

- Live Data Streams: Integration with APIs, IoT devices, and cloud-based systems allows companies to capture and process data continuously.

- Fraud Detection and Security Monitoring: Financial institutions and cybersecurity firms can use real-time parsing to detect anomalies and prevent fraudulent transactions.

- Optimized Customer Experiences: E-commerce platforms and digital marketers can personalize customer interactions based on real-time behavioral insights.

With the rise of 5G and edge computing, real-time data processing will become even more efficient, enabling businesses to handle large-scale data parsing operations with minimal latency.
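
The difference between batch and real-time parsing can be sketched with a Python generator that parses each record as soon as it arrives instead of waiting for a full file. The newline-delimited JSON events, the amount threshold, and the use of io.StringIO in place of a live feed are all assumptions made for the example.

```python
import io
import json
from typing import Iterable, Iterator

def parse_stream(lines: Iterable[str]) -> Iterator[dict]:
    """Yield one parsed event per line as soon as it arrives, skipping bad lines."""
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            yield json.loads(line)
        except json.JSONDecodeError:
            continue  # a production system would log or quarantine this line

# io.StringIO stands in for a socket, message queue, or API stream.
feed = io.StringIO(
    '{"user": "a", "amount": 20}\n'
    '{broken\n'
    '{"user": "b", "amount": 9500}\n'
)

# Flag unusually large transactions as they are parsed (hypothetical rule).
alerts = [event for event in parse_stream(feed) if event["amount"] > 1000]
print(alerts)
```

Because the generator holds only one record at a time, memory use stays flat however long the stream runs.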

Cloud-Based Data Parsing Solutions

As businesses expand globally and adopt remote work models, cloud-based data parsing solutions are becoming essential. Traditional on-premises data parsing software often requires substantial infrastructure, maintenance, and manual updates. Cloud-based parsing tools, on the other hand, offer scalability, flexibility, and seamless remote access, making data management more efficient and cost-effective.

- Scalability: Cloud solutions can handle increasing data volumes without requiring additional infrastructure investments.

- Remote Accessibility: Teams across multiple locations can access, parse, and analyze data from anywhere with an internet connection.

- Automatic Updates: Cloud-based tools receive regular updates, ensuring businesses always have access to the latest parsing algorithms and security features.

- Cost Efficiency: Eliminating the need for on-premises hardware reduces operational costs, making cloud-based parsing more budget-friendly.

- Seamless Integration: Cloud parsing solutions easily integrate with existing business applications, including CRM systems, ERP software, and BI tools.

Frequently Asked Questions About Data Parsing Software

What Is Parsing Software?

Parsing software is a tool that processes input data, usually text, and converts it into a structured format such as a parse tree or another hierarchical structure. This helps the software understand the data’s structure while ensuring the syntax is correct.

What Is The Best AI For Parsing Data?

Google Document AI is one strong option. It offers several pre-trained models designed for specific document types. The platform can also be customized to process documents beyond its built-in models by further training an existing AI model. However, achieving high accuracy with custom models requires a large amount of training data.

By integrating the right data parsing software, businesses can optimize workflows and make informed, data-driven decisions. If you’re looking for a reliable solution, explore DIGI-TEXX’s data parsing services and take your data management to the next level!

DIGI-TEXX Contact Information:

🌐 Website: https://digi-texx.com/

📞 Hotline: +84 28 3715 5325

🏢 Address:

- Headquarters: Anna Building, QTSC, Trung My Tay Ward

- Office 1: German House, 33 Le Duan, Saigon Ward

- Office 2: DIGI-TEXX Building, 477-479 An Duong Vuong, Binh Phu Ward

- Office 3: Innovation Solution Center, ISC Hau Giang, 198 19 Thang 8 street, Vi Tan Ward