The digital ecosystem in 2026 presents unprecedented challenges for businesses managing online communities. Social Media Moderation Services have transformed from optional overhead into strategic infrastructure that determines platform survival.

With over 5.6 billion active social media users globally generating massive content volumes, businesses without professional Social Media Moderation Services face existential risks to brand reputation, legal compliance, and user retention.

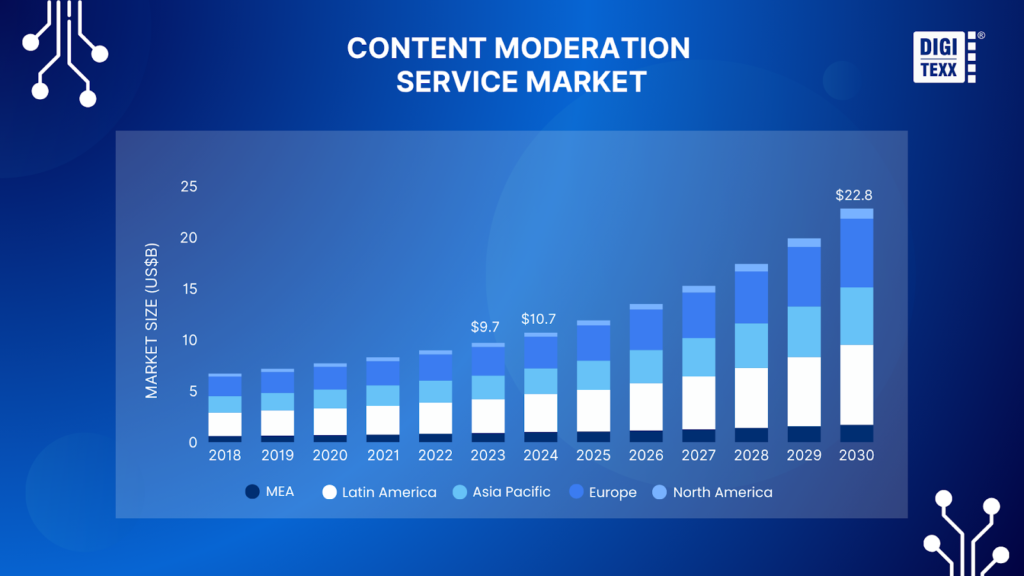

The global market reached $10.7 billion in 2024 and is projected to grow to $22.8 billion by 2030, a compound annual growth rate (CAGR) of approximately 13.4 percent over this period[2].
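
The growth rate quoted above can be sanity-checked from the two market-size figures. The snippet below is a minimal sketch that assumes the period runs over six annual steps (2024 to 2030); the function name `cagr` is illustrative and not taken from any cited report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10 percent)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Market size in billions of USD, taken from the figures quoted above.
growth = cagr(start_value=10.7, end_value=22.8, years=2030 - 2024)
print(f"Implied CAGR: {growth:.1%}")
```

Under these assumptions the implied rate is consistent with the roughly 13.4 percent figure cited.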

What Are Social Media Moderation Services?

Social Media Moderation Services encompass comprehensive systems combining technology, human expertise, and strategic protocols to monitor, evaluate, and manage user-generated content across digital platforms.

These services enforce community standards concerning threats, incitement, graphic content, hate speech, sexual material, misinformation, bullying, harassment, and spam while balancing protection against censorship concerns.

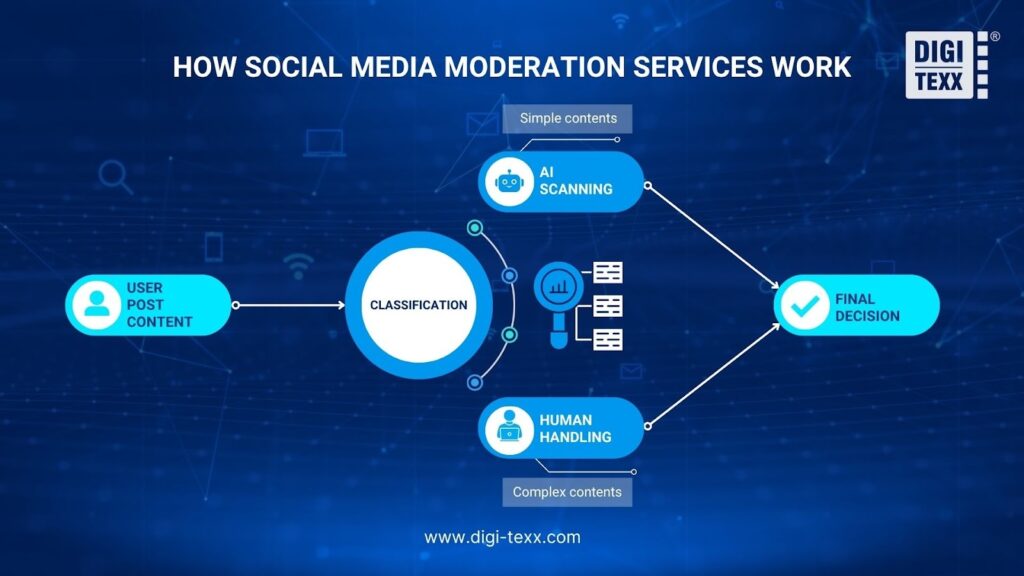

Professional moderation integrates multiple operational layers working simultaneously. Advanced artificial intelligence systems powered by natural language processing and computer vision analyze content in real-time, often making decisions in under one second for clear violations.

Human moderators then review ambiguous cases requiring judgment, applying cultural knowledge and contextual awareness that machines cannot replicate. Quality assurance teams continuously audit decisions to maintain consistency and accuracy.

Social Media and Communities platforms commanded 48.93% of total market revenue in 2024[1], reflecting the concentration of moderation needs within social spaces. YouTube alone sees over 500 hours of video uploaded every minute.

Meta reported removing more than 100 million fake accounts and over 21 million pieces of harmful content from Facebook and Instagram in India during May 2024 alone, demonstrating the industrial scale at which modern moderation operates[3].

Scholar Sarah T. Roberts, whose groundbreaking research defined commercial content moderation as a field, describes moderation in work documented by the Library of Congress as the monitoring and vetting of user-generated content to ensure compliance with legal and regulatory requirements, site guidelines, user agreements, and norms of taste and acceptability for specific platforms and cultural contexts.

This definition captures the multidimensional nature of modern moderation, encompassing legal, cultural, ethical, and business considerations simultaneously.

Key Features of Top Moderation Services

The most effective Social Media Moderation Services in 2026 incorporate distinguishing capabilities that separate enterprise-grade solutions from basic offerings.

Real-time content monitoring represents perhaps the most critical differentiator. Leading services utilize AI Moderation APIs capable of detecting and classifying content across more than 40 different harm categories in 30 languages.

Research from Statista shows that without proper video moderation, harmful, illegal, or offensive content can spread unchecked, damaging user trust and a platform’s reputation. Research also shows that exposure to violent or harmful content can reduce empathy and increase aggression, anger, and violent behavior.

Multilingual and multicultural capabilities distinguish premium services from basic providers.

A phrase considered acceptable in one culture might constitute a severe offense in another, requiring moderators with cultural intelligence rather than simple linguistic knowledge.

The human-AI hybrid approach combines automation efficiency with irreplaceable human judgment. Research from Harvard Law School on Algorithmic Content Moderation emphasizes that automated systems filter extreme content quickly without fatigue, but humans provide contextual understanding that machines cannot decode.

The gold standard in content moderation today involves AI handling initial screening while escalating complex cases to trained human moderators who apply judgment based on community standards and brand values.

Comprehensive reporting and transparency features enable businesses to track moderation effectiveness and demonstrate regulatory compliance.

How Do Social Media Moderation Services Work?

Understanding the operational mechanics of Social Media Moderation Services reveals the sophisticated infrastructure required to protect online communities at scale. Modern moderation operates through a systematic multi-step process:

Step 1: Content Submission and Initial Screening

The process begins when users post text, images, videos, or other media to social platforms. At this initial stage, basic automated filters perform preliminary screening, checking for prohibited terms or previously identified harmful content patterns through hash-matching technologies. This first line of defense catches the most obvious violations instantly.
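
The first-pass screening described above can be sketched as follows. This is only an illustration under stated assumptions: the hash set, the term list, and the function names are hypothetical, and an exact SHA-256 match only catches byte-identical copies of a file. Production systems generally rely on perceptual hashing so that resized or re-encoded copies still match.

```python
import hashlib
import re
from typing import Optional

# Hypothetical data: real systems load these from curated, regularly updated sources.
KNOWN_HARMFUL_HASHES = {hashlib.sha256(b"known-bad-file-bytes").hexdigest()}
PROHIBITED_TERMS = {"buy followers", "free crypto giveaway"}


def matches_known_hash(media_bytes: bytes) -> bool:
    """Exact-match lookup of the file digest against previously identified content."""
    return hashlib.sha256(media_bytes).hexdigest() in KNOWN_HARMFUL_HASHES


def contains_prohibited_term(text: str) -> bool:
    """Case-insensitive substring check after collapsing runs of whitespace."""
    normalized = re.sub(r"\s+", " ", text.lower())
    return any(term in normalized for term in PROHIBITED_TERMS)


def initial_screen(text: str, media: Optional[bytes] = None) -> str:
    """Return 'block' for obvious violations, otherwise hand off to AI analysis."""
    if media is not None and matches_known_hash(media):
        return "block"
    if contains_prohibited_term(text):
        return "block"
    return "pass_to_ai_analysis"
```

Anything that is not blocked here continues to the AI analysis stage rather than being treated as approved.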

Step 2: AI-Powered Content Analysis

Artificial intelligence systems then analyze submitted content using advanced technologies. Natural language processing examines text for toxic language, hate speech, and policy violations. Computer vision scans images and videos for graphic content, nudity, or violence. Sentiment analysis assesses tone and intent behind messages.

Step 3: Confidence Scoring and Automated Decision Making

When AI systems analyze content, they assign confidence scores, typically ranging from 0 to 100 percent, indicating how certain the system is that the content violates policies. Think of this as the AI’s level of certainty about whether something is harmful or not. For example, a post containing explicit hate speech might receive a 95 percent confidence score for policy violation, while a borderline sarcastic comment might only score 40 percent.

Based on these scores, the system takes different actions. High-confidence cases, usually scoring above 85 to 90 percent, show clear and obvious violations like explicit violence, pornography, or direct hate speech. These are automatically removed or immediately flagged for action, ensuring rapid response to obvious threats without waiting for human review.

Medium-confidence cases, typically scoring between 50 and 85 percent, represent ambiguous content where the AI detects potential issues but cannot be certain. These are escalated to human moderators for review, recognizing the need for human judgment on nuanced content. For instance, a political comment that uses strong language might fall into this category, requiring a moderator to determine if it crosses the line into incitement or remains within acceptable discourse.

Low-confidence cases, scoring below 50 percent, indicate content where the AI detects minimal or no signs of violation. These are typically allowed to remain visible but may be monitored passively through user reports or if patterns emerge suggesting coordinated problematic behavior. This threshold-based approach prevents over-moderation of legitimate content while maintaining system vigilance against emerging threats.
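
The three confidence bands above map naturally onto a small routing function. The sketch below assumes scores are expressed as fractions between 0 and 1 and uses 0.85 and 0.50 as cut-offs, following the ranges described in this section; the names `Thresholds` and `route` are illustrative, and real deployments tune thresholds per policy category.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    auto_action: float = 0.85   # at or above: clear violation
    human_review: float = 0.50  # at or above (but below auto_action): ambiguous


def route(score: float, thresholds: Thresholds = Thresholds()) -> str:
    """Map a violation-confidence score in [0, 1] to a moderation action."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= thresholds.auto_action:
        return "auto_remove"
    if score >= thresholds.human_review:
        return "human_review"
    return "allow_and_monitor"


# The two examples from the text: explicit hate speech vs. a sarcastic comment.
print(route(0.95))
print(route(0.40))
```

Keeping the thresholds in one configurable object makes it easier to tighten or loosen the bands for a specific category without touching the routing logic.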

Step 4: Human Moderator Review

Human moderators review flagged content within a broader context that automated systems miss. As noted in research from the Library of Congress on Content Moderation Practices, content moderators can identify nuanced violations by taking into account statement context that automated systems cannot fully comprehend. Moderators evaluate whether threatening statements represent genuine danger or hyperbolic frustration, whether sexual content constitutes harassment or consensual adult interaction, and whether political content crosses from legitimate discourse into incitement. This human judgment layer proves essential for maintaining fairness and accuracy.

Step 5: Quality Assurance and Continuous Improvement

Quality assurance represents an essential component of effective moderation. The entire workflow generates data feeding back into AI models, improving accuracy over time through continuous learning. When human moderators override AI decisions, those corrections help retrain algorithms to make better judgments in similar future cases.
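
One simple way to picture this feedback loop is to log each reviewed item with both the AI decision and the human decision, then extract the disagreements as candidate retraining examples. The record layout and function names below are assumptions made for illustration, not a description of any particular vendor's pipeline.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ReviewedItem:
    content_id: str
    ai_label: str      # decision proposed by the model, e.g. "remove" or "allow"
    human_label: str   # final decision made by the moderator


def collect_corrections(items: List[ReviewedItem]) -> List[ReviewedItem]:
    """Items where the moderator overrode the model; candidates for retraining data."""
    return [item for item in items if item.ai_label != item.human_label]


def override_rate(items: List[ReviewedItem]) -> float:
    """Share of reviewed items where the human decision differed from the AI decision."""
    if not items:
        return 0.0
    return len(collect_corrections(items)) / len(items)
```

Tracking the override rate over time gives quality assurance teams a rough signal of whether model updates are actually reducing disagreements.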

Why Your Business Needs Social Media Moderation

The necessity of professional Social Media Moderation Services extends far beyond simply keeping comment sections clean. As digital interactions increasingly define public perception and customer relationships, comprehensive moderation has become a strategic imperative with measurable impacts on business success.

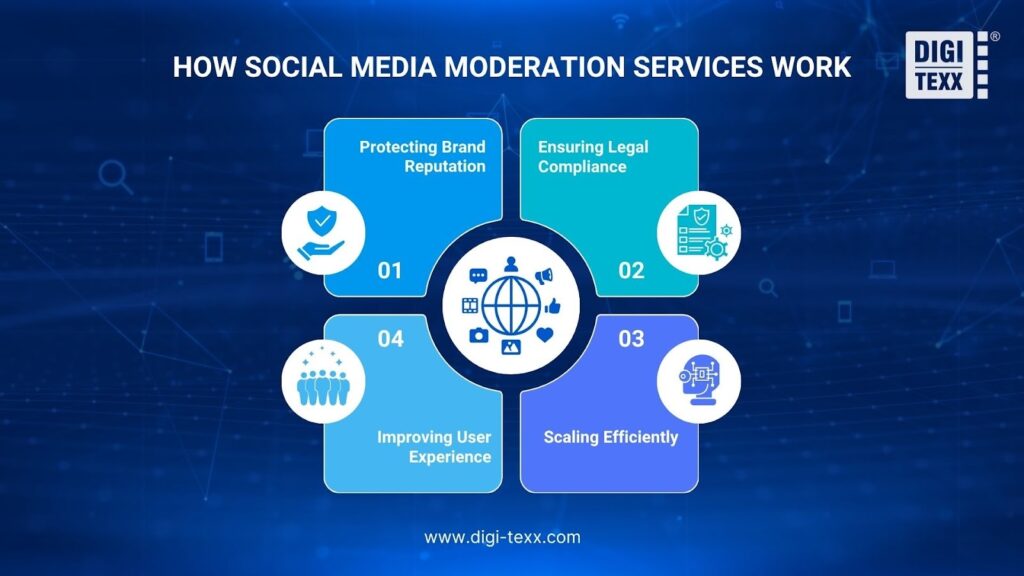

Protecting Brand Reputation

Brand reputation represents one of your most valuable intangible assets, yet it can be severely damaged in hours by unmoderated content.

According to Nielsen on Brand Reputation Protection, 92 percent of consumers trust recommendations from friends and family over other forms of advertising[9], making word-of-mouth incredibly powerful in shaping brand perception.

When negative comments, trolling, spam, or inappropriate content appear on your social media channels, it creates negative associations with your business in potential customers’ minds.

Professional Social Media Moderation Services protect against these scenarios by catching problems before they escalate. Services like BrandBastion have helped clients increase positive sentiment by 116 percent through proactive moderation, creating spaces where genuine engagement can flourish.

Companies using professional moderation report saving over 450 hours monthly in moderation and response efforts while managing approximately 13,000 comments per month[10].

Ensuring Legal Compliance

Navigating the complex web of content moderation regulations has become a critical business requirement. The European Union Digital Services Act exemplifies stringent requirements now facing businesses.

Platforms must provide clear reasons when removing or altering user content, maintain transparent content moderation processes, and conduct comprehensive risk assessments. Companies failing to comply face penalties of up to six percent of their global annual turnover.

For practical context, a platform earning $1 billion annually could face fines of up to $60 million for DSA violations. The DSA also requires platforms to act expeditiously on illegal content once they are notified of it.

The General Data Protection Regulation adds another complexity layer, regulating how companies handle user data during content moderation processes.

Beyond Europe, regulations continue proliferating globally. The United Kingdom Online Safety Act requires platforms to implement age verification measures and maintain age-appropriate design requirements. Australia’s Online Safety Act 2021 empowers the eSafety Commissioner to issue removal notices for harmful content with fines for non-compliance.

Professional Social Media Moderation Services help businesses meet these obligations through specialized expertise, generate required transparency reports, and provide documentation demonstrating adherence to legal standards.

Scaling Efficiently

The volume challenge in Social Media Moderation Services represents one of the most daunting operational realities facing businesses. Approximately 71 percent of removed content was handled by automated systems while 29 percent required manual moderation, illustrating the essential role of technology in managing volume[2].

Professional Social Media Moderation Services solve this scaling challenge through intelligent orchestration of automated and human resources. AI systems can process millions of content pieces in real-time, instantly flagging clear violations while allowing acceptable content to flow freely.

The economics of scaling favor professional services. Building an in-house moderation team typically takes six to twelve months and costs hundreds of thousands of dollars in technology development, training programs, and staffing.

Partnering with established moderation services allows businesses to deploy proven systems in under 30 days.

Improving User Experience

User experience stands as perhaps the most compelling business case for professional Social Media Moderation Services, directly impacting engagement, retention, and revenue.

Research demonstrates that by filtering out irrelevant or low-quality content, moderation enhances overall experience and creates environments where genuine community can develop.

Platforms with strong moderation report user session lengths 40 to 60 percent longer than poorly moderated competitors.

For e-commerce and marketplace platforms, moderation directly affects conversion rates and transaction volume. According to MarketingLTB analysis, 78 percent of individuals rely on social media as their primary information source when researching brands[11].

Platforms known for maintaining high-quality, trustworthy content enjoy competitive advantages in attracting and retaining both buyers and sellers.

Types of Content That Require Moderation

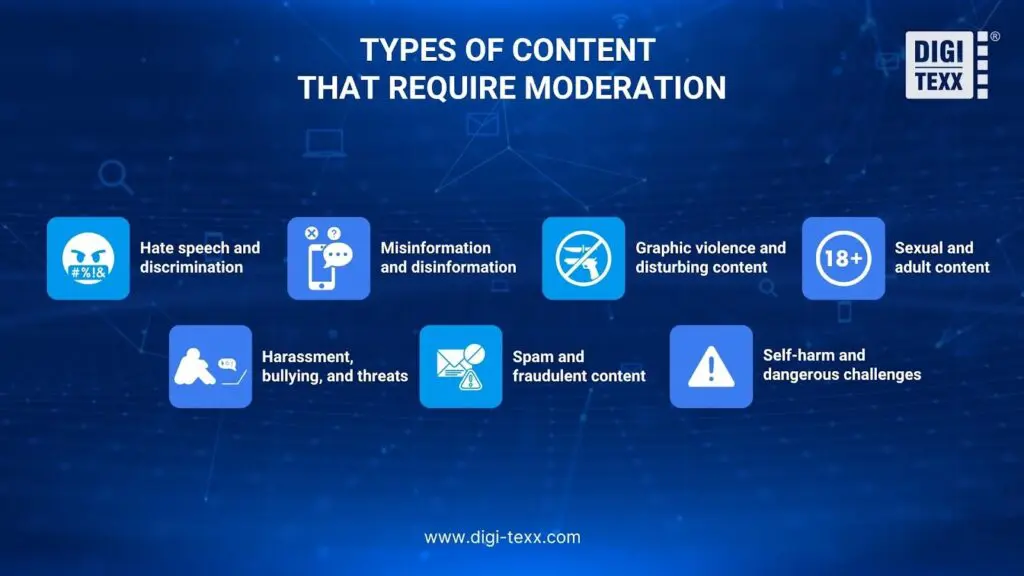

Modern social media platforms must monitor and manage an extensive range of potentially harmful content types. Each category presents unique detection and evaluation challenges requiring specialized approaches:

- Hate speech and discrimination: This represents one of the most challenging categories to moderate effectively, attacking individuals or groups based on race, religion, gender, sexual orientation, disability, or other protected characteristics.

The challenge lies in distinguishing between legitimate criticism, satire, or reclaimed language versus genuine attacks intended to harm vulnerable groups.

For example, minority group members may use slurs toward their own community in specific contexts, while the same terms from outsiders constitute clear harassment.

- Misinformation and disinformation: This content has exploded as a moderation priority, particularly following the COVID-19 pandemic, where conspiracy theories and false health information spread rapidly, causing real-world harm.

Misinformation often elicits more anger than trustworthy news, leading users to share it without verification. This emotional engagement makes misinformation particularly compelling and dangerous, posing risks to democratic integrity, public health, and consumer safety.

Effective moderation must distinguish between genuine misinformation, satire, parody, differing opinions on contested topics, and evolving information on breaking news where facts are still emerging.

- Graphic violence and disturbing content: This category requires moderation to protect users from traumatic exposure while respecting legitimate informational and educational purposes.

Content includes depictions of injuries, deaths, terrorism, torture, mass violence, and other acts causing severe harm.

The complexity emerges in determining context where newsworthy documentation of violence, educational content about historical atrocities, or artistic expression may serve legitimate purposes despite their graphic nature.

Moderators must evaluate whether content serves public interest or merely exploits shock value for engagement.

- Sexual and adult content: Moderation extends from explicit pornography to suggestive material, revenge porn, child sexual abuse material, and sexual exploitation.

Platforms must enforce age restrictions, prevent non-consensual intimate imagery, and protect minors from inappropriate exposure while respecting adult user rights to consensual sexual expression in appropriate contexts.

Video content moderation is especially demanding here: because video combines moving visual and audio elements, harmful or inappropriate material can appear in either track and at any point in the footage.

- Harassment, bullying, and threats: This content encompasses direct attacks on individuals through intimidation, sustained abuse, or credible threats of violence.

Such content can be particularly harmful when targeting vulnerable individuals like children or members of marginalized communities facing coordinated campaigns.

Detection requires understanding relationship dynamics, patterns of behavior over time, and distinguishing between genuine threats versus hyperbolic expressions of frustration.

A single angry comment may not constitute harassment, but dozens of similar comments from coordinated accounts clearly represent targeted abuse.

- Spam and fraudulent content: This category wastes user time and can expose them to scams, phishing attempts, malware distribution, and financial fraud.

Content includes unsolicited advertising, bot-generated messages, fake accounts, counterfeit product listings, pyramid schemes, and attempts to steal personal information.

Modern spam increasingly uses sophisticated language and images that evade simple keyword filters, requiring advanced detection methods.

- Self-harm and dangerous challenges: This represents particularly sensitive content promoting eating disorders, suicide, self-injury, or dangerous viral challenges that can lead to physical harm or death.

Such content is especially harmful when targeting vulnerable audiences like teenagers struggling with mental health issues.

Moderation must remove promotion of harmful behaviors while allowing supportive discussions and resources for people struggling with these issues.

The challenge lies in distinguishing between content that glorifies self-harm versus content seeking help or providing support.

How Do Users Act On Objectionable Content?

User reporting represents a critical component of effective Social Media Moderation Services, serving as an essential feedback mechanism that complements automated detection systems.

While AI can proactively scan content, user reports help identify context-specific violations, emerging threats, and nuanced problems that algorithms might miss. Understanding both user and platform responsibilities in the reporting process creates more effective moderation ecosystems.

Report Objectionable Content

The standard reporting process typically begins when users encounter concerning content and locate the “Report” or “Flag” button, usually positioned near the post, comment, or profile.

This action opens a reporting interface where users select the violation type from predefined categories such as hate speech, harassment, spam, violence, misinformation, or other policy violations. Platforms design these categories to align with their community guidelines, making it easier for users to identify the specific rule being violated.

After selecting a category, users should provide additional context explaining why the content violates policies. This contextual information proves invaluable for human moderators reviewing the report, as it provides a perspective that the content itself might not reveal.

For instance, a seemingly innocent comment might constitute harassment when viewed within the history of interactions between two users. Users can often upload screenshots, provide links to related content, or explain patterns of behavior that support their report.

Users should report objectionable content promptly rather than waiting or assuming others will report it.

According to the Digital Services Act requirements, platforms must make reporting mechanisms easily accessible and clearly visible to all users, ensuring anyone can contribute to community safety. Multiple reports of the same content help platforms prioritize review and identify patterns of problematic behavior.
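
As a rough illustration of how multiple reports can drive prioritization, the sketch below ranks reported items by the number of distinct users who reported them. The input shape (pairs of content ID and reporter ID) and the function name are assumptions; real queues typically also weigh severity, reporter reliability, and content reach.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple


def prioritize(reports: Iterable[Tuple[str, str]]) -> List[str]:
    """Order content IDs by distinct reporter count, highest first.

    `reports` is an iterable of (content_id, reporter_id) pairs. Duplicate
    reports from the same user are counted once, and ties are broken by
    content ID so the ordering is deterministic.
    """
    reporters: Dict[str, Set[str]] = defaultdict(set)
    for content_id, reporter_id in reports:
        reporters[content_id].add(reporter_id)
    return sorted(reporters, key=lambda cid: (-len(reporters[cid]), cid))
```

Counting distinct reporters rather than raw reports keeps a single user from pushing an item up the queue by reporting it repeatedly.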

For those wanting to understand platform-specific reporting procedures in detail, examining established systems provides valuable insights. For example, YouTube’s content reporting system guidelines offer a comprehensive framework to see how major platforms structure their user reporting workflows.

Block Accounts

Blocking represents the most definitive action users can take to protect themselves from specific accounts. When users block someone, that person can no longer view their profile, send them messages, tag them in posts, or interact with their content in any way.

The blocked user typically does not receive notification of being blocked, though they may eventually notice their inability to access the blocking user’s content.

Blocking proves particularly effective for handling persistent harassment, unwanted attention, or former relationships where continued contact causes distress. Unlike reporting, which addresses policy violations, blocking serves as a personal boundary action, allowing users to curate their own online experience regardless of whether the other person violated platform rules.

Hide or Restrict

Hiding offers a softer alternative to blocking when users want to reduce exposure to someone’s content without completely severing the connection. Hidden accounts can still follow and interact with the user, but their posts, comments, and stories no longer appear in the user’s feed.

This proves useful for situations where blocking would create social awkwardness, such as hiding a coworker who over-shares or a relative with problematic political posts, while maintaining the appearance of connection.

Some platforms also offer “restrict” features that limit an account’s ability to interact without fully blocking it. For example, restricted accounts might have their comments hidden from public view or their messages moved to a request folder rather than the main inbox.

Leave the Platform or Community

When problematic content becomes pervasive or platforms fail to address safety concerns adequately, users may choose to leave specific communities or the platform entirely.

While this represents the most drastic option, research on online community psychology demonstrates that users frequently exit platforms where they feel unsafe, disrespected, or unwelcome.

Platforms that fail to maintain effective moderation often experience user attrition, particularly among women and minority users who are often the targets of intense harassment. Before leaving permanently, users might temporarily deactivate accounts to assess whether stepping away improves their well-being, providing clarity on whether the platform adds value to their life.

Speak Up and Counter-Speech

In some situations, users choose to directly respond to objectionable content through counter-speech, challenging misinformation, correcting false claims, or defending targets of harassment.

This approach can be effective when dealing with misinformation where providing accurate information benefits the broader community, or when supporting someone facing coordinated harassment. However, users should carefully consider whether engagement might escalate situations or expose them to additional harassment.

Counter-speech works best when users have community support, feel emotionally prepared for potential backlash, and believe their response might genuinely educate others or change minds. For situations involving direct threats, severe harassment, or illegal content, reporting remains the safer and more appropriate response than direct engagement.

After a report is filed, users typically receive a notification when action is taken, although specific details about the outcome may be limited for privacy reasons. If users disagree with how their report was handled, many platforms offer appeal processes or additional reporting options for persistent violations.

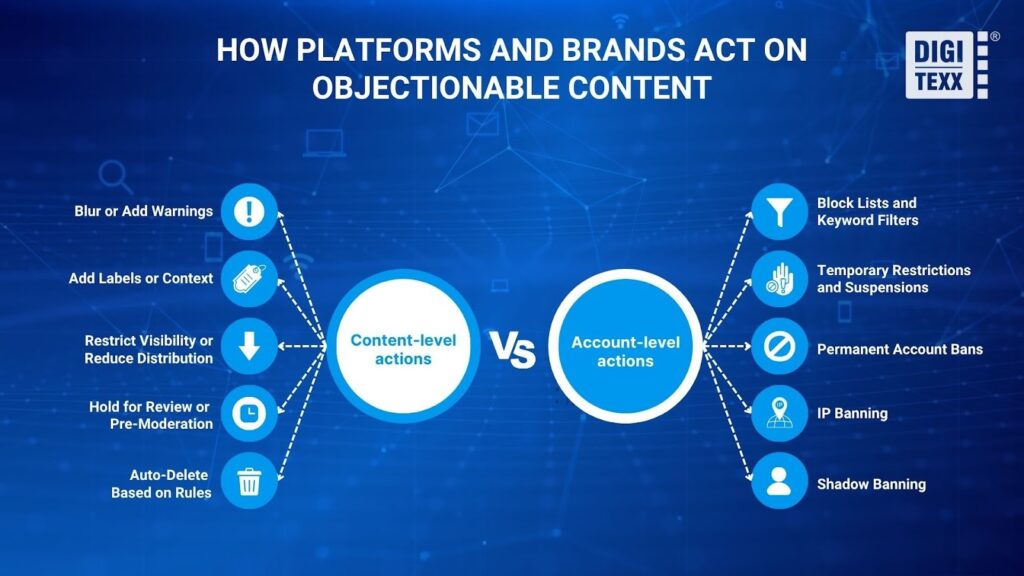

How Do Platforms And Brands Act On Objectionable Content?

To balance user empowerment with operational scaling, brands and platforms must prioritize the development of robust reporting infrastructures and the execution of nuanced moderation strategies. Modern Social Media Moderation Services provide platforms with a sophisticated toolkit of graduated responses, allowing nuanced handling of policy violations rather than relying solely on content removal.

Content-Level Actions

Blur or Add Warnings

Blurring represents a middle-ground approach for sensitive content that does not violate policies outright but may disturb some viewers. Platforms apply blur filters to potentially disturbing images, requiring users to click through a warning before viewing.

This technique commonly handles graphic violence in news contexts, artistic nudity, or medical content serving educational purposes. The warning overlay typically explains why the content was blurred and what users should expect if they choose to view it.

Add Labels or Context

Content labeling provides additional information without removing posts, particularly useful for addressing misinformation. Platforms attach labels to posts containing disputed claims, linking to fact-checking resources or authoritative information.

For example, during the COVID-19 pandemic, platforms labeled posts about vaccines with links to health authority guidance. Election-related content often receives labels explaining voting procedures or indicating that votes are still being counted.

Restrict Visibility or Reduce Distribution

Platforms can limit how widely content spreads without removing it entirely. Restricted content might remain visible on the original poster’s profile but not appear in recommendations, search results, or trending sections.

The algorithm deprioritizes this content, dramatically reducing its reach while technically allowing it to remain accessible. This proves effective for borderline content that doesn’t clearly violate policies but demonstrates low quality, sensationalism, or misleading framing. By reducing distribution, platforms limit harm while avoiding definitive judgments on whether content crosses policy lines.

Hold for Review or Pre-Moderation

Holding content for review prevents publication until moderators approve it, essentially implementing pre-moderation for specific accounts, keywords, or content types.

New accounts might have initial posts held for review to prevent spam account abuse. Users with histories of violations might face stricter scrutiny where future posts require approval.

Certain sensitive keywords automatically trigger holds, ensuring human review before publication. While this creates publishing delays that users dislike, it prevents harm from reaching audiences in high-risk situations.
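
The three hold triggers described above (new accounts, prior violations, sensitive keywords) can be combined into a single check. This is a minimal sketch: the seven-day account-age cut-off, the keyword list, and the `Author` fields are placeholder assumptions rather than recommended values.

```python
from dataclasses import dataclass

# Hypothetical keyword list; each community would maintain its own.
SENSITIVE_KEYWORDS = {"giveaway", "wire transfer"}


@dataclass
class Author:
    account_age_days: int
    prior_violations: int


def should_hold(text: str, author: Author, min_age_days: int = 7) -> bool:
    """Return True if a post should wait for moderator approval before publishing."""
    if author.account_age_days < min_age_days:
        return True  # new accounts: guard against spam sign-ups
    if author.prior_violations > 0:
        return True  # history of violations: stricter scrutiny
    lowered = text.lower()
    return any(keyword in lowered for keyword in SENSITIVE_KEYWORDS)
```

Because every held post adds publishing delay, the triggers are worth keeping narrow and reviewing regularly.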

Auto-Delete Based on Rules

Automated deletion removes content matching specific criteria without human review, appropriate for clear-cut violations where context rarely matters.

Child sexual abuse material detected through hash matching receives immediate automatic deletion. Spam containing known phishing links gets auto-deleted to protect users. Content from previously banned accounts attempting to evade restrictions through new profiles faces automatic removal.

Account-Level Actions (User)

Block Lists and Keyword Filters

Platforms maintain block lists preventing specific accounts, domains, or keywords from appearing on their services.

Individual users can create personal block lists as described earlier, but platforms also maintain master block lists of known bad actors, spam domains, and prohibited terms. These lists prevent repeat offenders from immediately returning after bans and stop known malicious websites from being shared. Brands using Social Media Moderation Services often maintain custom block lists tailored to their specific communities, blocking competitors, trolls, or terms inappropriate for their audience.

Temporary Restrictions and Suspensions

Temporary restrictions limit account functionality for specific periods, serving as warnings for first-time or minor violations. Users might lose commenting privileges for 24 hours, posting abilities for a week, or messaging capabilities for 30 days, depending on violation severity.

This graduated approach allows platforms to signal that behavior crossed lines without permanent account termination. Temporary restrictions effectively modify behavior, as users often adjust their conduct to avoid escalating to permanent bans.
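
A graduated approach is often implemented as an escalation ladder. The sketch below reuses the example durations from this section (24 hours, one week, 30 days) but keys them to a running strike count, which is an assumption made for illustration; the text ties the sanction to violation severity, and real policies usually combine both.

```python
from datetime import timedelta
from typing import Optional, Tuple

# Hypothetical ladder: (restriction, duration) for the first, second, and third strike.
LADDER = [
    ("comment_restriction", timedelta(hours=24)),
    ("posting_restriction", timedelta(days=7)),
    ("messaging_restriction", timedelta(days=30)),
]


def sanction_for(strike_count: int) -> Tuple[str, Optional[timedelta]]:
    """Return the sanction for the given strike number; None duration means permanent."""
    if strike_count < 1:
        raise ValueError("strike_count starts at 1")
    if strike_count <= len(LADDER):
        return LADDER[strike_count - 1]
    return ("permanent_ban", None)
```

Once the ladder is exhausted, the function falls through to a permanent ban, mirroring the escalation path described in the next section.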

Permanent Account Bans

Permanent bans remove accounts entirely for severe or repeated violations. Banned users lose access to their profiles, content, followers, and any platform-specific features or purchases.

Platforms typically reserve permanent bans for serious violations, including child exploitation, terrorism, credible threats of violence, or persistent patterns of harassment despite warnings. The finality of permanent bans makes them powerful deterrents, though determined bad actors may attempt to create new accounts to evade these restrictions.

IP Banning

IP banning blocks entire internet addresses rather than just individual accounts, preventing banned users from creating new accounts from the same network location.

When platforms detect that a banned user is creating multiple accounts to evade restrictions, IP bans raise the barrier by requiring the user to change their network connection.

However, IP bans have limitations as many users share IP addresses through internet service providers, and sophisticated bad actors can use VPNs or proxy servers to circumvent IP restrictions. Despite these limitations, IP bans remain useful for stopping casual ban evasion.
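
A basic IP ban check can be written with Python's standard `ipaddress` module. The banned ranges below are reserved documentation addresses used purely as placeholders, and the function name is illustrative.

```python
import ipaddress

# Placeholder ranges from the blocks reserved for documentation examples.
BANNED_NETWORKS = [
    ipaddress.ip_network("203.0.113.0/24"),
    ipaddress.ip_network("2001:db8::/32"),
]


def is_banned(ip: str) -> bool:
    """Return True if the address falls inside any banned network."""
    address = ipaddress.ip_address(ip)  # raises ValueError on malformed input
    return any(address in network for network in BANNED_NETWORKS)
```

Banning a whole range such as a /24 illustrates the shared-address limitation noted above: every user behind that range is affected, not only the offender.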

Shadow Banning

Shadow banning, also called stealth banning, restricts account visibility without notifying the user. Shadow-banned users can continue posting and interacting, but their content does not appear to other users or in recommendations and search results.

From the banned user’s perspective, everything appears normal, making it difficult to detect and evade the restriction. Platforms use shadow banning for spam accounts, bots, and users attempting to game algorithms, as notifying them would help them refine evasion tactics. However, shadow banning remains controversial as a lack of transparency can frustrate legitimate users who unknowingly trigger false positives.
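
Conceptually, shadow banning is a visibility filter applied at read time: the author still sees their own posts, while everyone else does not. The sketch below assumes posts are simple dictionaries with an `author` key; the data shape and function name are hypothetical.

```python
from typing import Dict, Iterable, List, Set

Post = Dict[str, str]  # hypothetical shape: {"author": ..., "text": ...}


def visible_posts(posts: Iterable[Post], viewer_id: str, shadow_banned: Set[str]) -> List[Post]:
    """Return the posts a given viewer should see.

    Posts by shadow-banned authors are hidden from everyone except the
    author, so the restriction is not apparent from the author's own view.
    """
    return [
        post for post in posts
        if post["author"] not in shadow_banned or post["author"] == viewer_id
    ]
```

The same filter would also need to be applied to search and recommendation results for the restriction to be consistent.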

Common Challenges in Social Media Moderation

Despite sophisticated technologies, Social Media Moderation Services face persistent challenges. The volume and scale problem represents the most fundamental challenge.

As noted by Tarleton Gillespie in his research published by Logic Magazine[7], the scale is simply unfathomable, with social media platforms hosting unprecedented amounts of content requiring moderation at qualitatively different levels than anything previously imagined.

Cultural and linguistic complexity compounds the challenge of global moderation. Language varies significantly across cultures, making it difficult for global platforms to develop moderation systems that work uniformly in all countries.

Context and intent remain notoriously difficult to assess. According to Harvard Misinformation Review research on Intent in Algorithmic Moderation, automated systems struggle to differentiate between literal and figurative language. The subjectivity problem means there will be as many opinions as there are people on what constitutes harmful content.

Adversarial behavior and evasion tactics present an ongoing arms race. According to analysis on moderation circumvention, bad actors manipulate spelling through character variants, embed harmful text in images, and use coded language that fools automated detection while remaining obvious to human viewers.
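
One common countermeasure to character-variant evasion is to normalize text before matching it against filters. The sketch below uses Python's standard `unicodedata` module plus a small, hypothetical substitution table. It folds compatibility characters (such as fullwidth letters), strips accents, and maps a few look-alike digits and symbols to letters. It does not handle cross-script homoglyphs such as Cyrillic letters, and text embedded in images would need OCR first.

```python
import unicodedata

# Hypothetical look-alike table; real systems maintain far larger mappings.
LOOKALIKES = str.maketrans({
    "0": "o", "1": "i", "3": "e", "4": "a",
    "5": "s", "7": "t", "@": "a", "$": "s",
})


def normalize_for_matching(text: str) -> str:
    """Produce a canonical form used only for filter matching, never for display."""
    # NFKD splits accented letters into base + combining mark and folds
    # compatibility forms (e.g. fullwidth letters) into their plain equivalents.
    decomposed = unicodedata.normalize("NFKD", text)
    # Drop combining marks (Unicode category Mn), leaving the base letters.
    stripped = "".join(ch for ch in decomposed if unicodedata.category(ch) != "Mn")
    return stripped.lower().translate(LOOKALIKES)
```

Because the digit substitutions also rewrite legitimate numbers, the normalized string should only feed the matching step, with the original text preserved for review.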

The mental health toll on human moderators represents a severe challenge. Studies documented by J. Nathan Matias have found that exposure to disturbing content causes secondary trauma with symptoms similar to post-traumatic stress disorder.

Best Social Media Content Moderation Service Provider

Selecting the right moderation partner requires understanding which providers offer the capabilities, expertise, and reliability needed for your specific requirements. The market includes several established providers with proven track records across different industries and use cases.

- Comprehensive Full-Service Providers offer end-to-end moderation solutions combining advanced AI technology with human expertise across multiple languages and regions.

These providers typically support 50 or more languages with native-speaking moderators who understand cultural nuances beyond simple translation. They provide flexible pricing models based on hour, transaction, or volume, allowing businesses to transform fixed costs into variable costs that scale with actual usage.

Quality assurance processes at leading firms maintain scores of 98 percent or higher through systematic evaluation, structured audits, and continuous feedback loops.

Many offer industry-specific expertise, particularly in regulated sectors like healthcare, finance, and education, where compliance requirements demand specialized knowledge. Round-the-clock service delivery ensures continuous protection across time zones, critical for global platforms requiring 24/7 moderation coverage.

- Gaming and Entertainment Specialists bring specialized experience working with gaming platforms, esports organizations, and entertainment companies. These providers’ moderator workforces receive extensive training in brand voice, community management, and crisis response.

They excel in sectors where understanding subculture references, gaming terminology, and fan community dynamics proves essential for effective moderation.

Their expertise helps distinguish acceptable trash talk within gaming communities from genuine harassment or threats.

- Social Media Advertising Moderation Specialists focus on protecting paid campaigns from spam, negativity, and inappropriate user comments.

Research shows that specialized advertising moderation can help clients increase positive sentiment by over 100 percent through proactive services.

These providers particularly serve brands running high-volume advertising campaigns on Facebook, Instagram, and other platforms where ad comments require rapid moderation to protect marketing investments.

Companies using specialized advertising moderation typically report saving hundreds of hours monthly while managing thousands of comments per month, allowing marketing teams to focus on strategy rather than comment cleanup.

- Marketplace and E-commerce Specialists provide moderation services with a strong emphasis on classified ads, marketplace platforms, and user-generated content sites.

Their expertise in detecting fraudulent listings, counterfeit products, and scam attempts makes them valuable for e-commerce and marketplace businesses.

These providers’ proprietary technologies combine AI detection with human review optimized for transactional content moderation, protecting both buyers and sellers from fraud.

- Enterprise-Scale Integrated Providers offer content moderation as part of broader customer experience services, making them suitable for companies seeking integrated support solutions.

Their scale allows them to handle massive content volumes for large platforms while maintaining quality through structured training programs and quality assurance protocols.

These providers serve major technology companies and have developed specialized expertise in trust and safety operations, combining moderation with customer support, community management, and brand protection services.

When evaluating these providers, consider several factors beyond basic capabilities. Assess their experience in your specific industry, as healthcare platforms have different needs than gaming communities or e-commerce marketplaces. Verify their compliance expertise for the regions you serve, particularly regarding the GDPR, the Digital Services Act, and local regulations. Examine their technology stack and AI capabilities, ensuring they use modern LLM-powered classifiers rather than outdated keyword-based systems.

Request detailed case studies from businesses similar to yours, focusing on metrics like accuracy rates, response times, and business impact. Discuss their moderator welfare practices, as providers investing in employee well-being typically maintain higher quality and lower turnover. Finally, ensure their reporting and transparency capabilities meet your compliance requirements and provide actionable insights for community management strategy.

Choosing the Right Social Media Moderation Service Provider

Selecting a Social Media Moderation Services provider represents a strategic decision impacting brand safety, user experience, compliance, and operational efficiency.

Begin by assessing your specific requirements and risk profile, considering the volume of content you need moderated, languages and regions you serve, sensitivity of your industry to reputational risk, and regulatory obligations you must meet.

Evaluate technical capabilities and AI sophistication. LLM-powered classifiers that interpret meaning rather than simply scanning for banned words now represent the industry standard. Examine human moderation capabilities and quality assurance, investigating how providers recruit, train, and support their human moderators.

Verify regulatory compliance expertise across relevant jurisdictions. For businesses serving multiple regions, providers need expertise across varying regulatory frameworks. Assess scalability and flexibility to accommodate growth and fluctuations.

Review transparency and reporting capabilities against your needs for insights and compliance documentation. Investigate pricing models and total cost of ownership, choosing the model that best fits your purpose while transforming fixed costs into variable costs.

Consider specialized expertise for your industry or use case.

Frequently Asked Questions

What is the difference between automated and manual social media moderation?

Automated moderation uses artificial intelligence and machine learning to scan content based on predefined rules and patterns. Manual moderation involves trained human reviewers who evaluate content with contextual understanding.

According to Reddit transparency data analyzed by Research Nester, approximately 71 percent of removed content is handled by automated systems, while 29 percent requires manual moderation. Modern best practices use hybrid approaches combining both methods.
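A hybrid approach is often described as confidence-based routing: the automated classifier decides the clear cases and hands everything in between to people. The sketch below assumes a classifier that returns a violation score between 0 and 1; the function name `route` and both threshold values are hypothetical and would be tuned per platform and content type.

```python
from collections import Counter

# Hypothetical thresholds, chosen only for illustration.
REMOVE_THRESHOLD = 0.95   # at or above: treated as a clear violation
APPROVE_THRESHOLD = 0.10  # at or below: treated as clearly acceptable


def route(violation_score: float) -> str:
    """Decide who handles an item based on the classifier's violation score."""
    if not 0.0 <= violation_score <= 1.0:
        raise ValueError("violation_score must be between 0 and 1")
    if violation_score >= REMOVE_THRESHOLD:
        return "auto_remove"
    if violation_score <= APPROVE_THRESHOLD:
        return "auto_approve"
    # Ambiguous scores go to a human moderator for contextual judgment.
    return "human_review"


# Example scores, as if produced by an upstream classifier (assumed, not real data).
scores = [0.99, 0.97, 0.50, 0.03, 0.01]
decisions = Counter(route(s) for s in scores)
print(decisions)
```

Moving the two thresholds closer together automates more decisions at the cost of more errors; moving them apart sends more content to human reviewers.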

How quickly can moderation services review and remove harmful content?

Premium Social Media Moderation Services typically achieve review times under one second for automated decisions on clear violations. For content requiring human review, timeframes range from minutes to a few hours. Regulation also shapes response times: the EU Digital Services Act requires platforms to act expeditiously on notices of illegal content rather than fixing a single deadline, while the separate EU Terrorist Content Online Regulation requires removal of terrorist content within one hour of receiving a removal order.

Can moderation services be customized to match my brand guidelines?

Yes, quality Social Media Moderation Services offer extensive customization, including developing custom moderation policies, training AI models on your historical decisions, creating brand-specific response templates, and setting custom thresholds for different content types. The degree of customization varies by provider, with more flexible services commanding premium pricing.

How much do social media moderation services typically cost?

Pricing varies widely based on content volume, customization requirements, language support, and service levels. Basic automated tools might start at a few hundred dollars monthly for small businesses, while enterprise services can reach hundreds of thousands of dollars annually.

DIGI-TEXX offers flexible pricing models based on hour, transaction, or volume, allowing clients to select the model that best fits their purpose.

References

- Reddit (2024). “Reddit Transparency Report: Jan-Jun 2024.” r/RedditSafety. Available at: https://www.reddit.com/r/RedditSafety/comments/1g54omb/reddit_transparency_report_janjun_2024/

- Grand View Research (2024). “Content Moderation Services Market Size Report, 2030.” Available at: https://www.grandviewresearch.com/industry-analysis/content-moderation-services-market-report

- Expert Market Research (2025). “Content Moderation Solutions Market Size & Growth | 2035.” Available at: https://www.expertmarketresearch.com/reports/content-moderation-solutions-market

- Journal of Practical Ethics (2024). “The Ethics of Social Media: Why Content Moderation is a Moral Duty.” Available at: https://journals.publishing.umich.edu/jpe/article/id/6195/

- Harvard Kennedy School Misinformation Review (2025). “The unappreciated role of intent in algorithmic moderation of abusive content on social media.” Available at: https://misinforeview.hks.harvard.edu/article/the-unappreciated-role-of-intent-in-algorithmic-moderation-of-abusive-content-on-social-media/

- Library of Congress (2025). “Social Media: Content Dissemination and Moderation Practices.” Available at: https://www.congress.gov/crs-product/R46662

- Logic Magazine (2019). “The Scale Is Just Unfathomable – Interview with Tarleton Gillespie.” Available at: https://logicmag.io/scale/the-scale-is-just-unfathomable/

- BrandBastion (2026). “Social Media Moderation Software | Comment Moderation.” Available at: https://www.brandbastion.com/social-media-moderation

- Whitler, K.A. (2019). “Why Word Of Mouth Marketing Is The Most Important Social Media.” Forbes. Available at: https://www.forbes.com/sites/kimberlywhitler/2014/07/17/why-word-of-mouth-marketing-is-the-most-important-social-media/

- Golemanova, R. (2025). “The Future of Content Moderation: Trends for 2026 and Beyond.” Imagga Blog. Available at: https://imagga.com/blog/the-future-of-content-moderation-trends-for-2026-and-beyond/

- Nash, B. (2025). “Social Search Statistics 2025: 98+ Stats & Insights [Expert Analysis].” Marketing LTB. Available at: https://marketingltb.com/blog/statistics/social-search-statistics/