Brand reputation takes decades to build but can be dismantled in seconds by a single unchecked scam or viral hate speech incident on your platform. E-commerce Content Moderation provides the essential governance layer required to mitigate these existential risks.

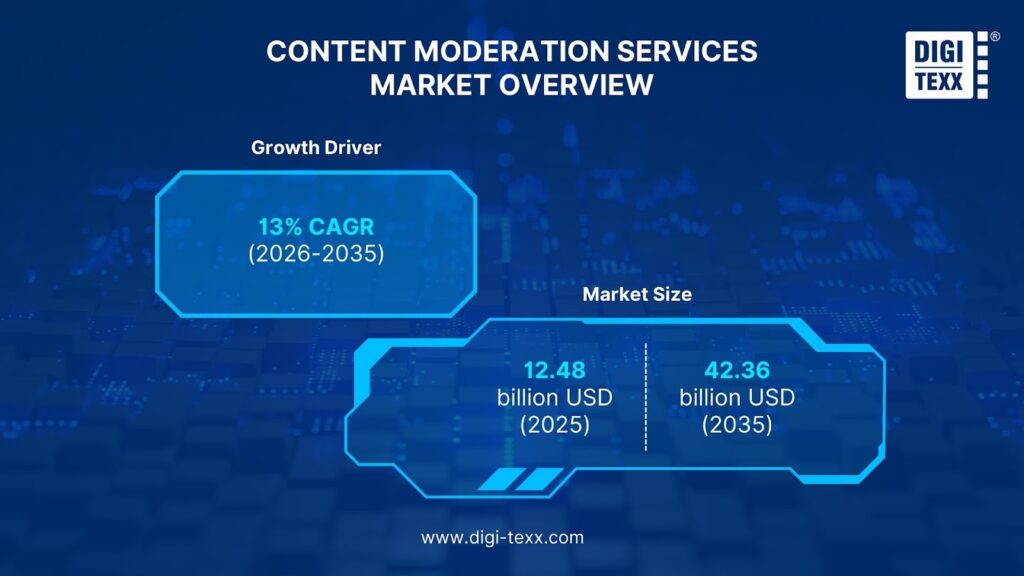

The content moderation services market is growing rapidly and is projected to reach $42.36 billion by 2035[1]. Businesses across the globe, from niche Shopify stores to massive multi-vendor marketplaces, are realizing that moderation is not just a cost center; it is a growth engine. To stay competitive, implementing a professional E-commerce Content Moderation strategy is now a prerequisite for any digital storefront.

In this article, we examine how E-commerce Content Moderation works and provide a roadmap for implementation. We will explore the different models, the “Gold Standard” hybrid approach, and the legal landscapes you must navigate. By the end, you’ll have a comprehensive understanding of how to protect your brand in the digital age.

What is E-commerce Content Moderation?

E-commerce Content Moderation is the process of monitoring, assessing, and managing user-generated content (UGC) to ensure it aligns with platform guidelines, legal regulations, and brand values. This allows your marketplace to remain a safe environment for transactions while protecting users from scams, harassment, and inappropriate material.

Definition and Core Purpose

At its core, moderation acts as a digital gatekeeper. It filters out harmful noise, be it fake reviews, counterfeit product listings, or toxic comments, so that the signal (genuine commerce and interaction) can thrive. It applies to text, images, videos, and even live streams.

Market Growth & Industry Trends

The industry is witnessing a paradigm shift. Gartner predicted in 2023 that by 2025, 50% of consumers would significantly limit their interactions with social media due to a perceived decay in quality and safety[2]. This “flight to safety” means consumers are looking for trusted platforms.

Furthermore, the market is growing at a CAGR of roughly 13%, driven by the sheer volume of digital content and stricter government regulations globally, making E-commerce Content Moderation a top priority worldwide by 2026[1].

Why E-commerce Content Moderation Matters for Your Business

Implementing a robust moderation strategy offers several benefits for platforms. Understanding the core value of E-commerce Content Moderation helps businesses allocate resources more effectively:

Protecting Brand Reputation and Trust

A business’s reputation takes years to build but seconds to destroy. A single viral instance of offensive content appearing next to a premium brand’s ad (a brand safety failure) can lead to boycotts. Studies show that nearly half of consumers would defect from brands whose ads appear near offensive content. Moderation ensures your “digital shelf” is clean and professional.

- Example: In April 2023, Walmart faced a significant PR backlash after listing a seemingly innocent pro-recycling T-shirt on its marketplace. The shirt carried the slogan “RECYCLE, REUSE, RENEW, RETHINK,” printed as a single large “RE” followed by the four word endings (“cycle,” “use,” “new,” “think”) stacked vertically in smaller text.

Read vertically, the first letters of those four word endings spelled out a highly offensive vulgarity (the “c-word”). The oversight went viral on social media, forcing Walmart to swiftly pull the product and issue a public apology for the “unintentional” error. This incident highlights how a lack of rigorous visual content moderation, even for something as simple as text layout on a shirt, can lead to embarrassment and reputational damage for a retail giant.

Reducing Legal and Regulatory Compliance Risks

Global regulations have shifted from optional guidelines to mandatory laws, making platforms legally accountable for how they handle what appears on their sites. The financial impact of non-compliance is no longer theoretical; it is quantified and severe. Effective E-commerce Content Moderation therefore acts as a legal shield against massive fines under the DSA or the INFORM Consumers Act.

- The INFORM Consumers Act (USA): This legislation targets organized retail crime and the sale of stolen goods by forcing online marketplaces to verify high-volume sellers. A “high-volume third-party seller” is legally defined as a vendor who completes 200 or more discrete transactions and generates $5,000 or more in gross revenue within any continuous 12-month period. Once a seller hits this threshold, the platform has only 10 days to collect and verify their bank account information, tax ID, and contact details.

Failure to comply results in civil penalties of up to $53,088 per violation. The operational reality of this law was demonstrated in 2025 when the Federal Trade Commission (FTC) reached a settlement requiring Temu to pay a $2 million civil penalty for failing to provide clear reporting mechanisms for consumers. Additionally, major platforms like Amazon have had to suspend approximately 40,000 seller accounts temporarily to enforce these verification checks, illustrating the massive operational disruption required to remain compliant.
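The threshold test described above can be sketched as a sliding-window check over a seller's sales history. This is a minimal illustration, not legal guidance: the function name `is_high_volume_seller`, the input shape, and the approximation of "12 months" as 365 days are all assumptions made for the example, and it assumes sale amounts are non-negative.

```python
from datetime import date, timedelta

# Thresholds as described in the text above.
MIN_TRANSACTIONS = 200
MIN_GROSS_REVENUE = 5_000.00
# Assumption: a "continuous 12-month period" is approximated as 365 days.
WINDOW = timedelta(days=365)


def is_high_volume_seller(sales: list[tuple[date, float]]) -> bool:
    """Return True if any 365-day window holds >= 200 sales and >= $5,000 revenue.

    `sales` is a list of (sale_date, gross_amount) pairs with non-negative amounts.
    """
    sales = sorted(sales)
    start = 0
    revenue = 0.0
    for end, (day, amount) in enumerate(sales):
        revenue += amount
        # Drop sales from the left until the window spans less than 365 days.
        while day - sales[start][0] >= WINDOW:
            revenue -= sales[start][1]
            start += 1
        count = end - start + 1
        if count >= MIN_TRANSACTIONS and revenue >= MIN_GROSS_REVENUE:
            return True
    return False


if __name__ == "__main__":
    first = date(2024, 1, 1)
    history = [(first + timedelta(days=i), 30.0) for i in range(200)]
    print(is_high_volume_seller(history))
```

A platform would typically run a check like this whenever a new sale is recorded, so that the 10-day verification clock starts as soon as the threshold is crossed.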

- Digital Services Act (EU): The DSA establishes a comprehensive set of rules for intermediary services in the European Union, focusing on user rights and illegal content. It mandates a strict “Notice and Action” mechanism where platforms must enable users to flag illegal content easily and then process those reports promptly.

The penalties here are even more severe than in the US, with fines reaching up to 6% of a company’s total worldwide annual turnover. In December 2025, the European Commission imposed a fine of €120 million on X (formerly Twitter) for deceptive design patterns regarding its verification system and lack of transparency. This sets a precedent that the EU will aggressively punish platforms that fail to meet safety and transparency standards.

Improving Customer Safety and User Experience

Safety is a core product feature. By filtering out scams, phishing links, and explicit material, platforms reduce friction in the buying journey. For instance, Etsy enforces strict policies against prohibited items to ensure buyers feel safe purchasing unique goods. A safe environment encourages users to explore and transact without fear, directly impacting conversion rates.

Amazon’s Project Zero initiative is another prime example of how proactive moderation improves safety and experience. By empowering brands with a self-service tool to remove counterfeit listings instantly, Amazon removed over 99% of suspected counterfeits proactively before a customer ever saw them.

This significantly reduced the friction of customers receiving fake goods, filing complaints, and losing trust in the platform. Behind the scenes, the moderation system automatically scans over 5 billion product listing updates daily. When shoppers know that this protection is working in the background, they can shop with confidence, which in turn increases their lifetime value.

Increasing Platform Engagement and User Retention

Users return to places where they feel heard and safe. A toxic comment section drives users away. Conversely, a healthy community fosters engagement. High-quality, moderated reviews help customers make better decisions, reducing return rates and increasing satisfaction.

- Example: Content moderation is the invisible backbone of effective recommendation engines. By ensuring that product metadata (titles, descriptions, tags) is accurate and free from keyword stuffing or misleading categorization, algorithms can generate high-quality “Frequently Bought Together” or “You May Also Like” suggestions.

Without moderation, a user viewing a smartphone might be recommended an incompatible accessory or a scam product disguised as a legitimate add-on. Clean data inputs, secured by moderation, lead to relevant, high-converting product bundles that increase Average Order Value (AOV) and user trust in the platform’s intelligence.

Prevent Fraud, Scams, and Fake Reviews

Fake reviews, whether produced by review farming or by coordinated review bombing, mislead customers and inflate return rates. Platforms like TripAdvisor have had to block over 1 million fake reviews in a single year to maintain ecosystem integrity[4]. Moderation tools detect patterns, such as identical IP addresses or burst posting, to strip these out before they damage consumer confidence.
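The two signals mentioned above, shared IP addresses and burst posting, can be sketched as simple heuristics. This is an illustrative sketch only: the `Review` record, the function names, and the default limits (3 reviews per IP, 5 reviews in 10 minutes) are assumptions chosen for the example, and production systems usually combine many more signals.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Review:
    review_id: str
    product_id: str
    ip_address: str
    posted_at: datetime


def flag_shared_ip(reviews, max_per_ip=3):
    """Return IDs of reviews where one IP posted more than max_per_ip reviews on a product."""
    by_key = defaultdict(list)
    for r in reviews:
        by_key[(r.product_id, r.ip_address)].append(r.review_id)
    return {rid for ids in by_key.values() if len(ids) > max_per_ip for rid in ids}


def flag_bursts(reviews, window=timedelta(minutes=10), max_in_window=5):
    """Return product IDs that received more than max_in_window reviews within `window`."""
    by_product = defaultdict(list)
    for r in reviews:
        by_product[r.product_id].append(r.posted_at)
    flagged = set()
    for product_id, times in by_product.items():
        times.sort()
        start = 0
        for end, t in enumerate(times):
            # Slide the left edge so the window never spans more than `window`.
            while t - times[start] > window:
                start += 1
            if end - start + 1 > max_in_window:
                flagged.add(product_id)
                break
    return flagged
```

Flagged items would normally be routed to review rather than deleted outright, since shared IPs also occur legitimately (offices, mobile carriers, households).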

Without strict moderation, the comment and review sections of e-commerce platforms can quickly descend into chaos, becoming vectors for harassment and illicit content distribution. A notable example occurred on Shopee, where users exploited the review section (which allows image and video uploads) to post explicit 18+ content and advertisements for illegal services disguised as product feedback.

This not only violated platform policies but also exposed regular shoppers, including minors, to harmful material, forcing the platform to implement stricter media filtering and account bans to regain control. This illustrates that moderation is not just about opinion policing; it is about maintaining basic public order in a digital marketplace.

Maximize SEO Performance and Content Quality

For platform owners, E-commerce content moderation is a critical technical necessity to protect search visibility. Google’s algorithms are engineered to detect and penalize sites that fail to police “user-generated spam,” which typically manifests as auto-generated gibberish or malicious links to gambling and adult sites embedded in comment sections.

If Google detects that a platform has lost control of its content quality, it may issue a Manual Action. This is a severe penalty where a human reviewer at Google manually removes pages or the entire website from search results, effectively cutting off organic traffic and revenue overnight.

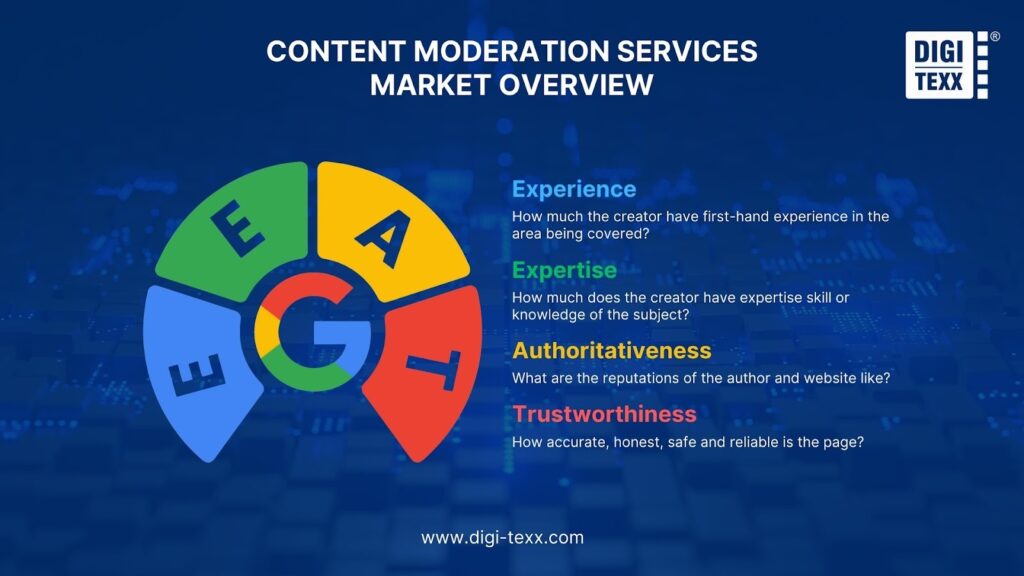

Furthermore, high-quality moderation directly supports Google’s E-E-A-T (Experience, Expertise, Authoritativeness, Trust) framework, which is used to evaluate page quality. Among these factors, trust is the most critical component for e-commerce. A product page featuring authentic, detailed, and moderated reviews signals high Trust to search engines, indicating a legitimate business.

Conversely, a page filled with spammy, duplicate, or clearly fake reviews destroys that Trust signal. When Google’s algorithms perceive a lack of trust, they demote the site’s rankings, causing it to lose visibility to competitors who maintain cleaner, more trustworthy content environments.

Gain Consumer Insights and Market Intelligence

Moderators are the first to hear customer complaints. Analyzing rejected content and user reports provides a goldmine of data regarding what users hate, what scams are trending, and where your product UI might be failing.

Types of E-commerce Content Moderation Models

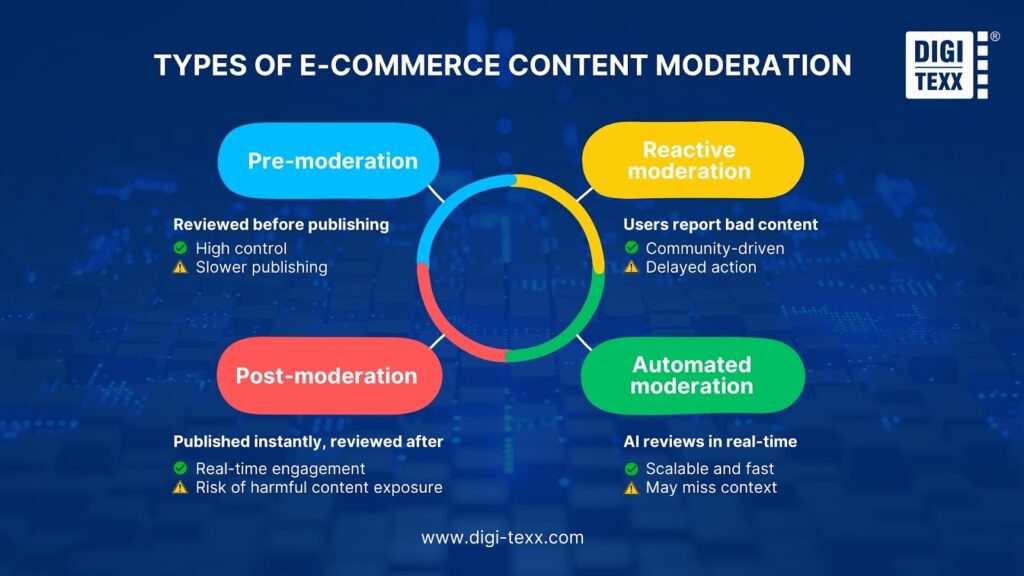

Selecting the right moderation model is a strategic decision involving a critical trade-off between User Experience (Speed) and Brand Safety (Risk). Most platforms evolve from one model to another as they scale, and understanding the nuances of each is vital for operational success.

Pre-moderation (Proactive Screening)

Pre-moderation is a process where all user-generated content (UGC) is intercepted and placed in a holding queue. It must be explicitly reviewed and approved by a human moderator or an automated system before it becomes visible to the public on the platform.

This method provides the highest level of control and safety, ensuring that harmful or illegal content never reaches the live site. However, it introduces significant latency (delay), as content display depends on the speed of the moderation queue. This can create operational bottlenecks during peak traffic times.

Ideal Industry Use Cases:

- Print-on-Demand & Customization: Platforms allowing users to upload images for printing (e.g., custom t-shirts) use this to prevent copyright infringement and the production of offensive material.

- Children’s Platforms & EdTech: Services targeting minors (COPPA compliance) require strict pre-screening to ensure absolute safety.

- Luxury Resale: Marketplaces for high-value goods often pre-moderate listings to verify authenticity and quality before they go live.

Post-moderation (Reactive Review)

Post-moderation allows content to be published immediately upon submission. The review process by moderators or AI systems occurs subsequently, often prioritizing content based on views or virality.

The primary advantage is instant gratification, which facilitates real-time conversation and higher user engagement rates. The critical disadvantage is the “Risk Window”, the duration between publication and removal, during which harmful content (scams, hate speech) is visible to users and can be captured or shared, potentially damaging brand reputation.

Ideal Industry Use Cases:

- Fast Fashion & High-Volume Retail: Platforms receiving thousands of product reviews daily often use this to keep the user experience seamless.

- Live Commerce & Streaming: Chat comments must appear instantly to maintain the flow of the broadcast; delays would disrupt the sales momentum.

- Community Forums: Environments where peer-to-peer interaction speed is the primary value proposition.

Automated Moderation (AI-Driven)

Automated moderation utilizes artificial intelligence technologies, specifically Machine Learning (ML), Natural Language Processing (NLP), and Computer Vision, to detect and filter content based on pre-defined rules and learned patterns without human intervention.

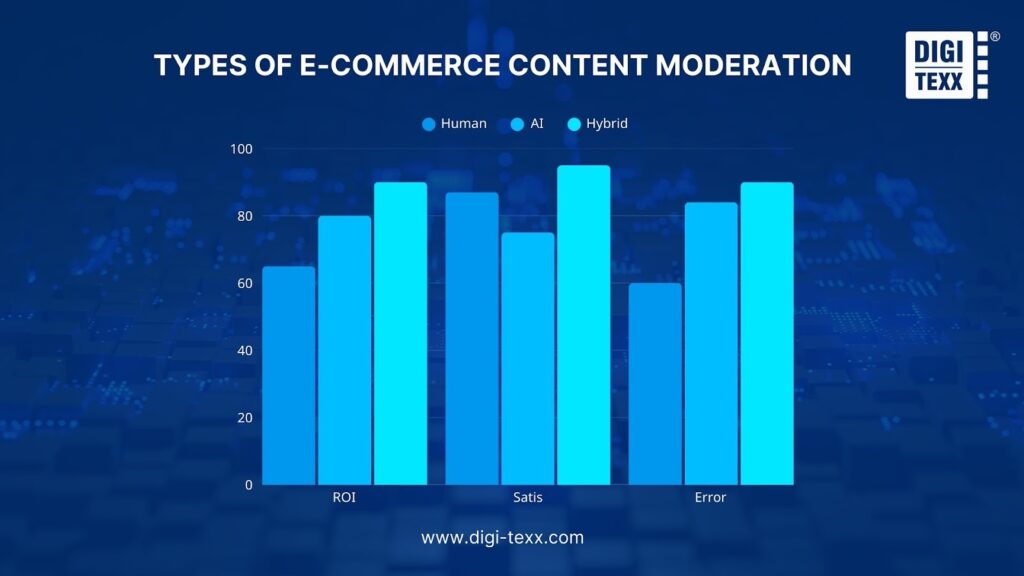

This model offers unmatched scalability and cost-efficiency. AI can process millions of data points 24/7 at a fraction of the cost of human teams (approx. $0.0035 per unit vs $0.04 for manual review). However, AI systems often struggle with context, nuance, and sarcasm, leading to “False Positives” (blocking legitimate content) or “False Negatives” (missing sophisticated violations).

Ideal Industry Use Cases:

- Spam & Bot Filtering: The first line of defense to block automated links to gambling or adult sites.

- Explicit Content Detection: Instant blocking of nudity or graphic violence using computer vision classifiers, with image hashing to catch previously identified media.

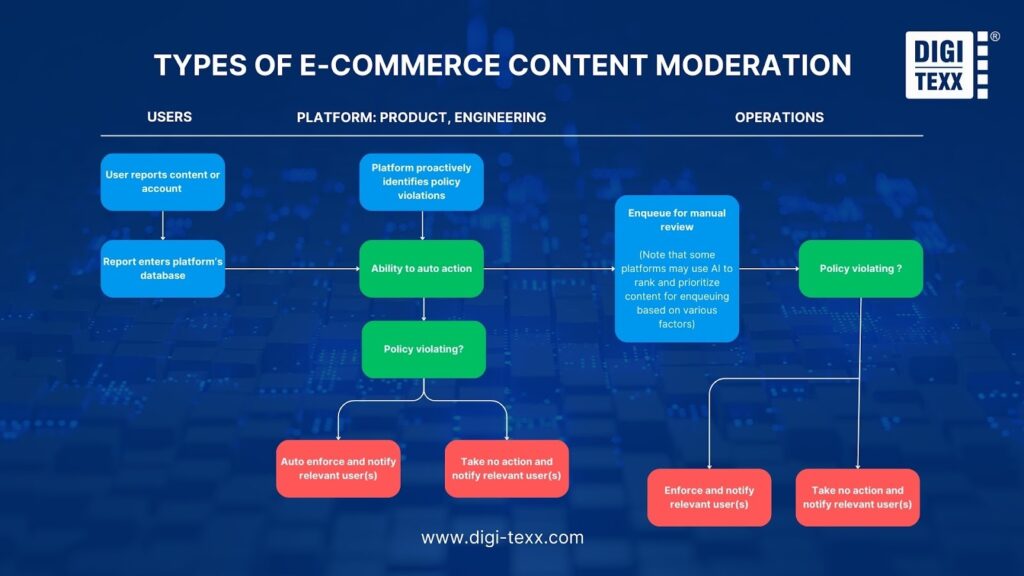

Reactive Moderation (User-Initiated)

Reactive moderation relies on the platform’s community to identify and “Flag” or “Report” content that violates guidelines. Moderators review content only after it has been brought to their attention by a user.

This approach is cost-effective as it distributes the monitoring workload to the user base. However, it is inherently slow and implies that at least one user must be exposed to the harmful content to report it. Furthermore, it is vulnerable to “Brigading”, a tactic where bad actors or competitors mass-report legitimate listings or reviews to trigger unwarranted takedowns.

Ideal Industry Use Cases:

- C2C Marketplaces: Platforms facilitating direct user-to-user transactions (e.g., classifieds) often rely on this for policing non-compliant listings.

- Deep Comment Threads: Secondary content layers where proactive monitoring may not be resource-efficient.

Hybrid Moderation (Strategic Combination)

Hybrid moderation is an orchestrated workflow that integrates automated screening with human expertise. It typically operates on a “Confidence Score” logic: AI scans 100% of content and automatically handles clear-cut cases (very high or very low risk), while routing ambiguous content (the “Grey Area”) to human moderators for adjudication.

Widely regarded as the industry standard, this model optimizes the trade-off between cost, speed, and quality. Crucially, the decisions made by human moderators are fed back into the system to retrain the AI models, creating a continuous improvement loop.

Ideal Industry Use Cases:

- Enterprise E-commerce & Marketplaces: Essential for platforms that must balance massive scale with strict regulatory compliance (e.g., INFORM Consumers Act, DSA).

How Hybrid Moderation Works: The Industry Standard

It is crucial to understand that Hybrid Moderation is not merely the simultaneous use of AI and human moderators operating in silos. Rather, it is a highly integrated, sequential workflow where both elements are indispensable.

The AI provides the speed and scale necessary to handle modern data volumes, while human experts provide the critical judgment required for complex, nuanced decisions. This synergistic combination allows businesses to achieve the “best of both worlds”: the cost-efficiency of automation and the high-quality assurance of manual review.

The workflow typically operates on a logic based on Confidence Scores (a numerical value indicating how certain the AI is about its decision) to seamlessly route content between machines and humans.

AI Handles Routine, High-Volume Content

The process begins with Artificial Intelligence acting as the primary gatekeeper. The AI system scans 100% of incoming content (text, images, video, and audio) in real-time. It analyzes this content against a set of trained models to detect violations such as nudity, hate speech, spam, or counterfeit logos.

If the AI assigns a very high confidence score to its analysis (for example, above 95%), it takes immediate action without human intervention. Clear-cut violations are automatically deleted or blocked, while content identified as clearly safe is automatically published. This automated layer typically resolves 90% to 95% of the total content volume, ensuring that users experience minimal delay and that the platform is not overwhelmed by operational costs.

Humans Review Complex Cases

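While AI is efficient, it often lacks the ability to understand context, sarcasm, slang, or cultural nuances. Content that the AI cannot classify with high confidence falls into what is known as the “Grey Area” (typically a mid-range confidence score, for example between 60% and 90%; exact cut-offs vary by platform and should line up with the auto-action threshold so that no score band is left unhandled).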

This ambiguous content is automatically routed to a queue for human moderators. Human experts then review these specific items to make the final determination. For instance, an AI might flag the phrase “This knife is a killer!” as a violent threat. A human moderator, seeing the context of a 5-star review for a kitchen chef’s knife, would correctly interpret this as a compliment and approve the content. This step ensures that legitimate users are not unfairly penalized by rigid algorithms.
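The routing logic described in this workflow can be sketched in a few lines. The sketch assumes the model outputs a single probability that the content violates policy, and it reuses the 95% example threshold mentioned above for both automatic removal and automatic approval; the names `Decision` and `route` are hypothetical, and real thresholds are tuned per content type.

```python
from enum import Enum


class Decision(Enum):
    AUTO_REMOVE = "auto_remove"
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"


def route(violation_probability: float, auto_threshold: float = 0.95) -> Decision:
    """Route content by the model's estimated probability that it violates policy.

    Assumption: confidence that content is safe equals 1 - violation_probability.
    """
    if not 0.0 <= violation_probability <= 1.0:
        raise ValueError("violation_probability must be between 0 and 1")
    if violation_probability >= auto_threshold:
        return Decision.AUTO_REMOVE      # clear-cut violation
    if violation_probability <= 1.0 - auto_threshold:
        return Decision.AUTO_APPROVE     # clearly safe
    return Decision.HUMAN_REVIEW         # grey area: send to the moderator queue


if __name__ == "__main__":
    for p in (0.99, 0.70, 0.01):
        print(p, route(p).value)
```

In this sketch, the kitchen-knife review from the example would land in the human queue if the model scored it at 0.70, and a moderator would approve it.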

Continuous Learning Loop

The final and most transformative aspect of Hybrid Moderation is the feedback mechanism known as the “Human-in-the-Loop.” The system does not remain static; it evolves. Every decision made by a human moderator, particularly when they overturn an initial flag by the AI, is fed back into the system as a new data point.

For example, if human moderators consistently approve a specific slang term that the AI had been flagging as offensive, the AI model is retrained with this new data. Over time, the algorithm learns to recognize this nuance, reducing the number of “False Positives” and shrinking the “Grey Area.” This continuous cycle improves the AI’s accuracy, progressively reducing the manual workload and cost for the business while maintaining high safety standards.
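One simple way to surface retraining candidates from this feedback loop is to track how often moderators overturn the AI for each flagged term. The sketch below is an assumption-laden illustration: the `ReviewedItem` record, the function name, and the cut-offs (at least 20 reviews, at least 80% overturned) are hypothetical, and an actual retraining pipeline would feed the underlying labeled examples back to the model rather than just a list of terms.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewedItem:
    text: str
    ai_flagged_term: str   # term that triggered the AI flag
    human_approved: bool   # True if the moderator overturned the flag


def retraining_candidates(items, min_reviews=20, min_overturn_rate=0.8):
    """Return {term: overturn_rate} for terms that humans approve most of the time."""
    flagged = Counter()
    overturned = Counter()
    for item in items:
        flagged[item.ai_flagged_term] += 1
        if item.human_approved:
            overturned[item.ai_flagged_term] += 1
    return {
        term: overturned[term] / total
        for term, total in flagged.items()
        if total >= min_reviews and overturned[term] / total >= min_overturn_rate
    }
```

Terms returned by this function are likely sources of false positives, so their reviewed examples are good candidates for the next training batch.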

Best Practices for E-commerce Content Moderation

To implement this effectively, businesses should follow a structured roadmap:

Establish Clear and Comprehensive Moderation Guidelines

You cannot enforce rules that don’t exist. Create a clear Acceptable Use Policy (AUP) and Community Guidelines. Be specific about what constitutes “hate speech,” “counterfeit,” or “manipulated reviews”.

Implement Multi-layered Moderation Strategy

Relying on a single mechanism for safety creates a single point of failure. A robust strategy requires a “Defense in Depth” architecture, deploying multiple overlapping layers of protection to ensure that if one filter fails, another catches the violation.

Layer 1: Technical SEO & Infrastructure Controls

The first layer of defense occurs at the infrastructure level, primarily protecting the platform’s relationship with search engines. Implementing the rel="ugc" attribute on all user-generated links is strongly recommended.

This technical tag signals to Google that the platform does not necessarily endorse the linked content, thereby quarantining the site’s domain authority from potential penalties associated with “link spam” or malicious outbound links. By effectively neutralizing the SEO value of spam links, platforms also disincentivize spammers from targeting them in the first place.
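As a rough illustration, a rendering helper can attach the attribute whenever a user-submitted link is turned into HTML. The function name `render_user_link` and the decision to combine `ugc` with `nofollow` and to allow only `http`/`https` links are assumptions made for this sketch, not requirements stated above.

```python
from html import escape
from urllib.parse import urlparse

# Assumption: only plain web links are allowed in user content.
ALLOWED_SCHEMES = {"http", "https"}


def render_user_link(url: str, label: str) -> str:
    """Render a user-submitted link with rel="ugc nofollow".

    Links with a disallowed scheme (for example javascript:) are rendered
    as escaped plain text instead of an anchor.
    """
    scheme = urlparse(url).scheme.lower()
    if scheme not in ALLOWED_SCHEMES:
        return escape(label)
    return f'<a href="{escape(url, quote=True)}" rel="ugc nofollow">{escape(label)}</a>'


if __name__ == "__main__":
    print(render_user_link("https://example.com/?a=1&b=2", "My shop"))
```

Applying the attribute at render time, rather than trusting stored HTML, means older user content is covered as well.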

Layer 2: Automated Pre-screening & Filtering

The second layer involves high-speed automated systems designed to clear the “low-hanging fruit” before content ever reaches a human eye. This includes Regex (Regular Expressions) filters to instantly block patterns like phone numbers or email addresses, preventing platform leakage (where transactions move off-site).
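A regex filter of this kind might look like the sketch below. The two patterns are deliberately loose assumptions written for this example: the phone pattern matches any run of 8 to 15 digits separated by spaces, dots, dashes, or parentheses, so it can also match long order numbers, and a production filter would need locale-specific tuning.

```python
import re

# Simplified email pattern (assumption: good enough for screening, not validation).
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# Loose phone pattern: optional "+", then 8 to 15 digits with common separators.
PHONE_RE = re.compile(r"\+?\d(?:[\s().-]*\d){7,14}")


def find_contact_details(text: str) -> dict:
    """Return the email addresses and phone-like strings found in `text`."""
    return {"emails": EMAIL_RE.findall(text), "phones": PHONE_RE.findall(text)}


def contains_contact_details(text: str) -> bool:
    """Return True if `text` appears to contain an email address or phone number."""
    return bool(EMAIL_RE.search(text) or PHONE_RE.search(text))


if __name__ == "__main__":
    print(find_contact_details("Call +1 (555) 010-9999 or mail bob@example.com"))
```

Because such patterns produce false positives, a match is often used to hold a message for review or mask the detail rather than to ban the user.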

For visual content, Perceptual Hashing technology creates a unique digital fingerprint for images. This allows the system to instantly recognize and block known bad media, such as previously identified terrorist imagery or counterfeit product logos, even if the image has been resized or slightly altered. This layer is crucial for cost control, as it handles the vast majority of volume instantly.
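To make the fingerprint idea concrete, here is a minimal sketch of one simple perceptual hash, the average hash, written in pure Python over a grayscale pixel grid. It is not the specific algorithm any particular vendor uses; the function names, the 8x8 hash size, and the distance cut-off of 5 bits are assumptions for illustration, and the image is assumed to be already decoded into rows of 0-255 values.

```python
def average_hash(pixels: list[list[int]], hash_size: int = 8) -> int:
    """Compute an average hash from a grayscale image given as rows of 0-255 ints."""
    height, width = len(pixels), len(pixels[0])
    if height < hash_size or width < hash_size:
        raise ValueError("image is smaller than the hash grid")
    # Downsample: average the pixels in each cell of a hash_size x hash_size grid.
    cells = []
    for gy in range(hash_size):
        y0, y1 = gy * height // hash_size, (gy + 1) * height // hash_size
        for gx in range(hash_size):
            x0, x1 = gx * width // hash_size, (gx + 1) * width // hash_size
            block = [pixels[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            cells.append(sum(block) / len(block))
    mean = sum(cells) / len(cells)
    # One bit per cell: 1 if the cell is brighter than the overall mean.
    bits = 0
    for value in cells:
        bits = (bits << 1) | int(value > mean)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Count the bits that differ between two hashes."""
    return bin(a ^ b).count("1")


def is_known_bad(image_hash: int, blocklist: set[int], max_distance: int = 5) -> bool:
    """Return True if the hash is within max_distance bits of any blocklisted hash."""
    return any(hamming_distance(image_hash, bad) <= max_distance for bad in blocklist)
```

Because the hash depends on coarse brightness structure rather than exact bytes, a resized copy of a blocklisted image produces the same or a nearby hash, which is what lets the filter survive small alterations.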

Layer 3: Human Oversight & Escalation

The final layer is the “safety net” reserved for high-stakes decisions. Human moderation teams are essential for handling “Grey Area” content where context is king, such as distinguishing between a hate speech attack and a historical discussion.

Furthermore, humans manage the appeals process, providing a necessary recourse for users who feel they were wrongly flagged. This human element ensures fairness and protects brand reputation in complex scenarios that AI cannot yet navigate safely.

Invest in Training and Continuous Calibration

Moderators need regular training, especially on cultural nuances and new slang. Calibration sessions, where moderators review the same content to ensure they agree on the decision, are vital for consistency. Also, prioritize Moderator Wellness. Exposure to toxic content causes burnout; implement programs for psychological support.

Monitor and Measure Moderation Metrics

In the data-driven landscape of 2025, measuring content moderation performance requires shifting focus from simple operational throughput to strategic business impact. To achieve this, platform owners must monitor a comprehensive suite of metrics across enforcement volume, decision quality, operational efficiency, and fairness.

Enforcement Volume and Capacity Planning

The foundation of any moderation strategy lies in understanding the complete lifecycle of a moderation ticket through three interconnected metrics: Incoming, Closes, and Actions. First, Incoming Volume captures the total demand placed on the system, including content flagged by user reports and proactive AI detection. Monitoring this helps anticipate seasonal spikes or detect coordinated spam attacks. Second, Closes represents the system’s throughput capacity. It counts the total number of decisions made, regardless of whether the content was removed or kept. Tracking Closes is essential for understanding the team’s productivity and managing the backlog. Third, Actions tracks the number of decisions that resulted in a penalty, such as content removal or account suspension. By comparing Actions against Closes, businesses calculate the Action Rate. A low Action Rate suggests that detection filters are too aggressive, whereas a high Action Rate indicates efficient targeting of actual violations.
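The relationship between these three metrics reduces to simple arithmetic. The sketch below uses hypothetical function names and made-up sample numbers purely to show the calculation.

```python
def action_rate(actions: int, closes: int) -> float:
    """Share of closed tickets that resulted in a penalty (Actions / Closes)."""
    if closes <= 0:
        raise ValueError("closes must be positive")
    if not 0 <= actions <= closes:
        raise ValueError("actions must be between 0 and closes")
    return actions / closes


def backlog_change(incoming: int, closes: int) -> int:
    """Net change in the queue for a period: positive means the backlog grew."""
    return incoming - closes


if __name__ == "__main__":
    # Illustrative numbers only.
    print(action_rate(actions=150, closes=1000))
    print(backlog_change(incoming=1200, closes=1000))
```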

Operational Efficiency and Velocity

Speed is a critical factor in mitigating harm and is measured primarily through Time to Action (also known as Velocity). This metric tracks the duration between the moment a piece of harmful content is created and the moment a final decision is rendered. In e-commerce, minimizing Time to Action is vital because a counterfeit listing or a scam review can inflict significant reputational damage for every minute it remains visible.

Related to this is Review Time, which measures the specific amount of time a human moderator spends evaluating a single unit of content. While reducing Review Time improves throughput, pushing this metric too low can lead to decision fatigue and errors.

Decision Quality: Precision and Recall

The most critical metrics for assessing the health of a moderation system are Precision and Recall, as they directly impact the bottom line. Precision measures the accuracy of enforcement by calculating the percentage of flagged content that was truly a violation. High precision is essential for Revenue Protection because it minimizes “False Positives,” where legitimate sellers or products are incorrectly banned.

Research indicates that reducing false positives by just 15% can lead to a 3% increase in user retention. Conversely, Recall measures the effectiveness of the safety net by calculating the percentage of actual violations that were successfully caught. High recall is necessary to minimize “False Negatives,” ensuring that illegal goods do not slip through. A failure in recall exposes the platform to severe legal risks under regulations like the DSA and the INFORM Consumers Act.
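Both metrics follow directly from counts of correct and incorrect decisions, usually obtained by auditing a labeled sample. The function name and the sample counts below are illustrative assumptions.

```python
def precision_recall(true_positives: int, false_positives: int, false_negatives: int):
    """Return (precision, recall) from audited decision counts.

    precision = TP / (TP + FP): share of flagged items that were real violations.
    recall    = TP / (TP + FN): share of real violations that were caught.
    """
    flagged = true_positives + false_positives
    actual = true_positives + false_negatives
    precision = true_positives / flagged if flagged else 0.0
    recall = true_positives / actual if actual else 0.0
    return precision, recall


if __name__ == "__main__":
    # Illustrative audit: 90 correct removals, 10 wrongful removals, 30 missed violations.
    print(precision_recall(90, 10, 30))
```

Tightening a filter usually raises one metric at the expense of the other, which is why the two are tracked together.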

Fairness and Appeal Health

To ensure the system remains fair, it is essential to monitor the Overturn Rate and Appeals Volume. The Overturn Rate represents the percentage of moderation decisions that are reversed upon appeal. A high Overturn Rate is a red flag indicating that the initial decision-making process is flawed. Additionally, tracking Time to Resolution for appeals is crucial for seller satisfaction. If a legitimate merchant is suspended by mistake, the speed at which their status is restored directly correlates with their lost revenue and loyalty.

Cost Efficiency and Strategic Impact

Beyond operational metrics, businesses must measure the Cost per Moderated Item to calculate the ROI of their trust and safety investments. This metric justifies the shift from manual review to hybrid AI models. Furthermore, advanced platforms track Prevalence, which estimates the percentage of violative content that users actually see on the platform. Prevalence is often considered the gold standard metric because it reflects the true safety of the user experience regardless of how many items were removed.
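A back-of-the-envelope version of both metrics is sketched below. It reuses the per-unit costs quoted earlier in this article (about $0.0035 for automated review and $0.04 for manual review) and assumes, purely for illustration, that AI scans every item and 10% of items also go to a human; prevalence is estimated from a hypothetical audited sample of content views.

```python
def blended_cost_per_item(ai_cost: float, human_cost: float, human_review_share: float) -> float:
    """Average cost per item when AI scans everything and humans review a share."""
    if not 0.0 <= human_review_share <= 1.0:
        raise ValueError("human_review_share must be between 0 and 1")
    return ai_cost + human_review_share * human_cost


def prevalence(violative_views_in_sample: int, total_views_in_sample: int) -> float:
    """Estimated share of content views that showed violative content."""
    if total_views_in_sample <= 0:
        raise ValueError("sample must contain at least one view")
    return violative_views_in_sample / total_views_in_sample


if __name__ == "__main__":
    hybrid = blended_cost_per_item(ai_cost=0.0035, human_cost=0.04, human_review_share=0.10)
    print(f"hybrid cost per item: ${hybrid:.4f} vs manual-only: $0.0400")
    print(f"prevalence: {prevalence(12, 10_000):.2%}")
```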

Sustainability and Moderator Wellness

Finally, operational sustainability cannot be decoupled from Moderator Wellness. Strategic KPIs for 2025 now include indicators of workforce health, such as Moderator Churn Rate and Wellness Scores. Protecting the mental health of the team preserves institutional knowledge and maintains high decision quality. High churn rates often lead to a loss of experienced staff, which in turn degrades accuracy and increases the risk of costly errors.

Ensure Transparency in Moderation Decisions

Users deserve to know why their content was removed. Providing a “Statement of Reasons” helps educate users and reduces repeat violations. This is also a requirement under the EU DSA.

Case Study: Amazon Project Zero

Amazon Project Zero revolutionized counterfeit detection by moving from a reactive to a proactive model. Amazon implemented automated protections that scan over 5 billion updates daily and empowered brands with a self-service tool to remove counterfeits themselves. They also introduced product serialization, giving each unit a unique code. The result was that over 99% of counterfeits were blocked proactively before a customer ever saw them, illustrating the power of a tech-driven strategy.[7]

The Strategic Imperative: Turning Safety into Competitive Advantage

In the current digital landscape, content moderation has transcended its traditional role as a back-office support function to become a cornerstone of corporate strategy. The convergence of strict regulatory frameworks like the INFORM Consumers Act and the DSA, combined with the algorithmic demands of search engines for high-Trust signals, means that platform integrity is now directly linked to financial performance and market valuation.

Marketplaces and e-commerce platforms that view moderation solely as a cost center risk not only severe legal penalties but also the rapid erosion of their most valuable asset: consumer confidence. Conversely, organizations that invest in a sophisticated, hybrid moderation infrastructure position themselves to capitalize on the “flight to safety.”

By prioritizing a clean, transparent, and compliant ecosystem, businesses do more than simply avoid fines. They build a resilient brand fortress that attracts high-quality sellers, retains loyal customers, and secures sustainable growth in an increasingly volatile digital economy. The choice is clear: evolve safety infrastructure today to secure market leadership tomorrow. Mastering E-commerce Content Moderation is the ultimate key to long-term digital sovereignty.

Frequently Asked Questions:

Q1: Is the trend of decentralized moderation suitable for B2B e-commerce?

Currently, no. While Web3 and decentralized platforms are experimenting with empowering the community to vote on moderation, this model lacks the consistency and accountability necessary to comply with strict regulations like the INFORM Act or DSA. B2B businesses need centralized control to ensure identity verification and transaction security. However, the community can be used as an additional “reporting layer”.

Q2: Can generative AI (GenAI) completely replace humans in moderation?

In the near future (3-5 years), the answer is no. Although GenAI is very powerful, it still suffers from “hallucination” errors and lacks empathy in sensitive situations (e.g., distinguishing between artistic and pornographic images, or between jokes and threats). Humans are still necessary in the “Human-in-the-loop” process to handle grey-area cases and train the AI.

Q3: How do deepfakes affect e-commerce platforms, and what are the solutions?

Deepfakes directly threaten trust. Scammers use deepfaked celebrity likenesses to advertise counterfeit goods (scam ads). The solution is to integrate deepfake detection technology into the ad and video review process. Additionally, content credentials should be required for large advertisers.

Q4: How does Google handle AI-generated content in terms of SEO?

Google doesn’t ban AI content, but evaluates it against the E-E-A-T standard. If the AI content is useful, it can still rank. However, mass-produced AI content designed to manipulate keywords will be detected as spam and penalized by the SpamBrain system. Businesses should focus on using human editors to refine AI content to ensure authenticity and value.

Q5: Why is analyzing “Link Velocity” important in spam moderation?

Link Velocity is an early indicator of spam attacks. If a product suddenly receives thousands of comments containing links in a short period, it is often a sign of a botnet. Moderation systems need to monitor this metric to activate lockdown mode in a timely manner, preventing damage to the website’s backlink profile.
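A link-velocity check can be sketched as a sliding-window counter per product. The class name `LinkVelocityMonitor`, the URL pattern, and the default limits (100 link-bearing comments in 5 minutes) are assumptions for illustration, and the sketch assumes comments arrive in timestamp order.

```python
import re
from collections import deque
from datetime import datetime, timedelta

# Rough link detector (assumption: http(s):// or www. prefixes are enough for a sketch).
URL_RE = re.compile(r"https?://|www\.", re.IGNORECASE)


class LinkVelocityMonitor:
    """Track link-bearing comments per product over a sliding time window."""

    def __init__(self, window: timedelta = timedelta(minutes=5), threshold: int = 100):
        self.window = window
        self.threshold = threshold
        self._events: dict[str, deque] = {}

    def record(self, product_id: str, comment: str, posted_at: datetime) -> bool:
        """Record a comment; return True if the product should enter lockdown."""
        events = self._events.setdefault(product_id, deque())
        if URL_RE.search(comment):
            events.append(posted_at)
        # Forget link comments that have fallen out of the window.
        while events and posted_at - events[0] > self.window:
            events.popleft()
        return len(events) > self.threshold
```

When `record` returns True, a platform might temporarily hold new comments on that product for pre-moderation until the rate drops back below the threshold.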

References

- Akshay Pardeshi (2025). Content Moderation Services Market Size, Trends & Forecast to 2035. [online] Research Nester. Available at: https://www.researchnester.com/reports/content-moderation-services-market/7630 [Accessed 16 Jan. 2026].

- Amazon Web Services. (2025). Streamlining content compliance: Automating media analysis with Amazon Nova | Amazon Web Services. [online] Available at: https://aws.amazon.com/vi/blogs/media/streamlining-content-compliance-automating-media-analysis-with-amazon-nova/ [Accessed 16 Jan. 2026].

- Gartner (2023). Gartner Predicts 50% of Consumers Will Significantly Limit Their Interactions with Social Media by 2025. [online] Gartner. Available at: https://www.gartner.com/en/newsroom/press-releases/2023-12-14-gartner-predicts-fifty-percent-of-consumers-will-significantly-limit-their-interactions-with-social-media-by-2025.

- Outharm (2026). Outharm Content Moderation API. [online] Outharm. Available at: https://outharm.com/docs/billing/plans [Accessed 16 Jan. 2026].

- Salesduo.com. (2025). How Amazon Project Zero Fights Counterfeits in 2025. [online] Available at: https://salesduo.com/blog/amazon-project-zero/.

- Shopify Help Center. (2023). Understanding the INFORM Consumers Act. [online] Available at: https://help.shopify.com/en/manual/online-sales-channels/shop/eligibility/inform.

- TripAdvisor. (2019). TripAdvisor Releases Data Detailing Fake Review Volumes In First-Of-Its-Kind Transparency Report | TripAdvisor. [online] Available at: https://ir.tripadvisor.com/news-releases/news-release-details/tripadvisor-releases-data-detailing-fake-review-volumes-first [Accessed 16 Jan. 2026].