The digital landscape in 2026 presents unprecedented challenges for platform owners managing user-generated content. Learning how to moderate user-generated content effectively has become essential for business survival, user safety, and regulatory compliance.

Every second, thousands of users worldwide submit posts, comments, images, and videos across digital platforms, creating a constant stream of content that requires careful oversight.

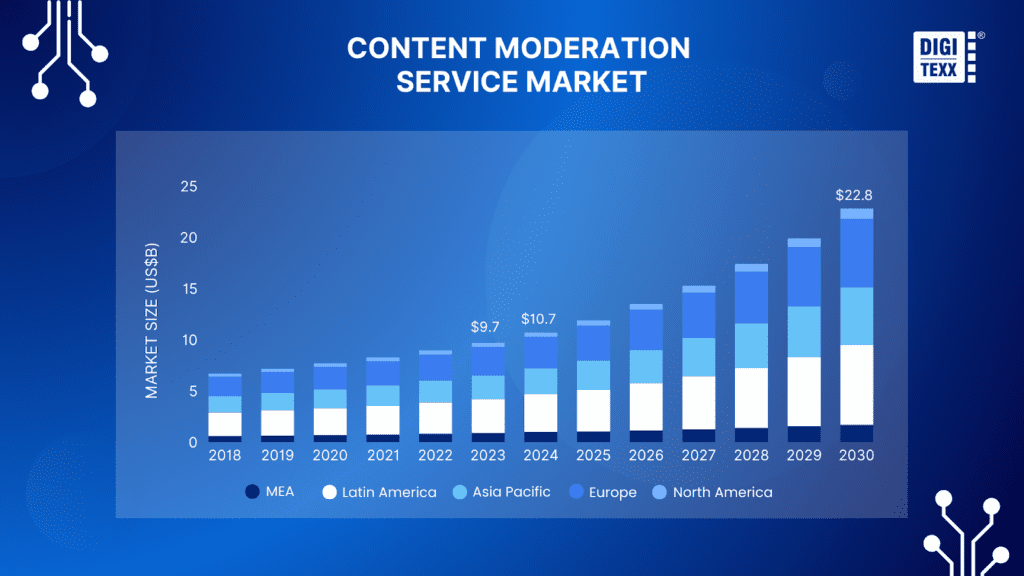

According to the Content Moderation Services Market Report by Grand View Research, the global market reached $9.67 billion in 2023 and is projected to grow to $22.78 billion by 2030, a compound annual growth rate (CAGR) of approximately 13.4 percent over the period[4]. This expansion underscores how critical effective moderation has become for digital businesses worldwide.

The stakes for proper moderation extend far beyond market growth figures. In May 2024 alone, Meta removed over 21 million pieces of harmful content from Facebook and Instagram in India, demonstrating the massive scale at which platforms must operate to maintain safe environments[1].

Studies show that 79 percent of consumers say user-generated content strongly influences their purchasing decisions[2], so moderation quality directly affects business outcomes. When users encounter toxic content such as harassment, scams, or misinformation, they quickly lose trust and may disengage from a platform for good.

What is User-Generated Content (UGC) Moderation?

User-generated content moderation represents a systematic, multi-layered process of reviewing, filtering, and managing all content that users submit to digital platforms. This encompasses text comments, product reviews, forum discussions, social media posts, uploaded images and videos, audio recordings, and live streams shared across social media networks, e-commerce marketplaces, dating applications, gaming communities, and educational platforms.

The fundamental objective of content moderation is to create and maintain digital environments where users can engage safely without encountering harmful, offensive, illegal, or policy-violating material.

Moderation systems evaluate each piece of submitted content against multiple assessment criteria, including community guidelines, legal requirements, brand safety standards, and cultural appropriateness considerations for diverse global audiences.

According to the Mordor Intelligence Content Moderation Market Analysis, the market reached $11.63 billion in 2025 and forecasts growth to $26.09 billion by 2031, with a compound annual growth rate of 14.42 percent driven by steep rises in user-generated content volumes and increasingly demanding regulatory frameworks like the EU Digital Services Act and UK Online Safety Act[5].

This regulatory pressure has fundamentally transformed how platforms approach moderation, shifting from purely reactive takedown models to continuous proactive risk assessment regimes.

Modern content moderation operates through multiple decision pathways. Human moderators bring essential cultural context, nuanced judgment, and ethical reasoning to complex cases. Artificial intelligence systems provide rapid first-pass filtering, analyzing millions of content items per hour to identify clear violations or approve obviously acceptable material.

Hybrid approaches combine both methodologies, routing straightforward cases through automated processing while escalating ambiguous or high-risk content to human review teams.

Why UGC Moderation Matters

The consequences of inadequate moderation extend across multiple dimensions, affecting platform viability, user well-being, legal compliance, and business sustainability. Platforms that moderate proactively tend to see less disruptive user behavior and higher member satisfaction than platforms that rely solely on reactive reporting systems.

From a business perspective, moderation failures trigger devastating cascading effects. A single piece of harmful content going viral can generate millions in damage control expenses and permanently tarnish brand credibility.

When 140 Facebook moderators in Kenya, who were pursuing legal action against Meta and Samasource, were reported in December 2024 to have been diagnosed with severe PTSD linked to graphic content exposure[5], the case highlighted the legal and reputational costs platforms risk when they inadequately support their content review teams.

Legal implications add urgent complexity to moderation requirements. The European Union’s Digital Services Act, which became fully applicable in February 2024, requires Very Large Online Platforms to assess systemic risks at least once a year and to undergo annual independent audits; non-compliance can draw fines of up to 6 percent of worldwide annual turnover[5].

Industry projections put global cybercrime losses at $10.5 trillion a year by 2025, a more than threefold increase from $3 trillion in 2015[1].

User surveys indicate that 72 percent of users are more likely to participate actively in online communities where they feel safe and supported by strong moderation[1].

This link between moderation quality and engagement can create a virtuous cycle: better moderation encourages higher-quality contributions, which attract more engaged participants, which in turn improves overall community health.

Types of User-Generated Content to Monitor

Understanding the diverse content types appearing on your platform constitutes the essential foundation for building effective, comprehensive moderation systems. Each format presents unique technical challenges and requires specialized detection tools and approaches for optimal management.

Text Comments and Reviews

Text represents the most prevalent form of user-generated content across virtually all digital platforms. The challenge with text moderation extends well beyond simple keyword detection. Determined users routinely employ spelling variations, coded language and euphemisms, emoji combinations, intentional misspellings designed to evade automated filters, and cultural references whose harmful connotations vary across regions.
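As a rough illustration of why keyword lists alone fall short, the sketch below undoes a few common evasion tricks (character substitutions, inserted punctuation, stretched letters) before matching whole words against a blocklist. The substitution table and the `BLOCKED_TERMS` set are hypothetical placeholders; a production system would treat a match like this as one signal for a trained classifier or a human reviewer, not as a final verdict.

```python
import re

# Hypothetical substitution table: look-alike characters commonly used to dodge filters.
LEET_MAP = str.maketrans({"0": "o", "1": "i", "3": "e", "4": "a",
                          "5": "s", "7": "t", "@": "a", "$": "s"})

# Hypothetical blocklist; a real deployment would load this from policy configuration.
BLOCKED_TERMS = {"scam", "idiot"}

def normalize(text: str) -> str:
    """Lowercase, undo simple substitutions, and collapse common obfuscation."""
    text = text.lower().translate(LEET_MAP)
    # Remove separators inserted between letters, e.g. "s.c.a.m" -> "scam".
    text = re.sub(r"(?<=\w)[.\-_*](?=\w)", "", text)
    # Collapse runs of three or more identical characters, e.g. "scaaaam" -> "scam".
    text = re.sub(r"(\w)\1{2,}", r"\1", text)
    return text

def matched_terms(text: str) -> set[str]:
    """Return blocklisted terms that appear as whole words after normalization."""
    words = set(re.findall(r"[a-z]+", normalize(text)))
    return words & BLOCKED_TERMS

print(matched_terms("Fr33 $c4m!!"))  # {'scam'}
```

Normalization cuts both ways: the same rules that catch `Fr33 $c4m` can mangle legitimate product codes or names, which is why rules like these usually feed review queues rather than trigger automatic removal.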

Natural language processing (NLP) technology has advanced remarkably, but even state-of-the-art AI systems still struggle with context-dependent interpretation.

A phrase that constitutes clear harassment in one conversational context might represent friendly banter between established friends in another context. Sarcasm, satire, and humor add additional layers of interpretive complexity that automated systems frequently misinterpret.

Modern text moderation systems incorporate sentiment analysis, entity recognition to identify protected individuals or groups being targeted, and behavioral pattern detection to flag accounts engaged in coordinated harassment campaigns.

Images and Videos

Visual content moderation presents fundamentally different technical challenges compared to text analysis. Images and videos can contain explicit sexual content, graphic violence, hate symbols, dangerous stunts, illegal items, copyrighted material, and personal information requiring protection.

YouTube alone sees over 500 hours of video uploaded every minute as of 2023[8], creating processing demands that only sophisticated automated systems can handle.

Modern AI models analyze pixel-level patterns, spatial relationships between detected objects, facial expressions, scene composition, and, in video, temporal patterns across frames to pinpoint when concerning content appears.

However, visual AI still faces significant limitations that require human oversight. Context remains especially difficult: a medical education image showing a surgical procedure can look visually similar to gore yet serves an entirely different purpose.

Video moderation adds substantial temporal complexity, with harmful content potentially appearing briefly within otherwise acceptable videos.
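One common way to handle this temporal dimension is to sample frames at a fixed interval, score each sampled frame, and merge consecutive high-scoring samples into time ranges a reviewer can jump to. In the sketch below, `score_frame` is a hypothetical stand-in for whatever image classifier a platform uses, and the one-second interval and 0.8 threshold are illustrative assumptions.

```python
from typing import Callable

def flagged_segments(duration_s: float,
                     score_frame: Callable[[float], float],
                     interval_s: float = 1.0,
                     threshold: float = 0.8) -> list[tuple[float, float]]:
    """Sample one frame every `interval_s` seconds and merge consecutive
    above-threshold samples into (start, end) time ranges, in seconds.

    `score_frame` is a hypothetical classifier callback: timestamp -> violation score."""
    segments: list[tuple[float, float]] = []
    start = end = None
    for i in range(int(duration_s // interval_s) + 1):
        t = i * interval_s
        if score_frame(t) >= threshold:
            if start is None:
                start = t          # open a new flagged segment
            end = t                # extend it to the latest flagged sample
        elif start is not None:
            segments.append((start, end))  # close the segment on the first clean sample
            start = end = None
    if start is not None:
        segments.append((start, end))      # segment ran to the end of the video
    return segments

# A fake scorer that "sees" a problem between seconds 12 and 14 of a 30-second clip.
print(flagged_segments(30, lambda t: 0.95 if 12 <= t <= 14 else 0.1))  # [(12.0, 14.0)]
```

The interval is a direct trade-off: sampling once per second keeps compute costs manageable but can miss a violation shorter than the sampling gap, so sensitive categories may warrant denser sampling.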

Social Media Posts

Social media platforms face uniquely challenging moderation requirements: their real-time nature demands instant decisions, they must process billions of submissions daily, and individual posts often combine multiple media formats.

A single post might contain text, multiple images, embedded video, tagged users, location data, and links to external content requiring separate evaluation.

Social platforms must moderate not just individual posts but entire networks of interactions, distinguishing organic user disagreement from organized abuse campaigns and genuine grassroots movements from coordinated amplification schemes.
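Network-level signals are often simple to compute even when they are hard to interpret. The sketch below flags a message when at least `min_accounts` distinct accounts post the same text (after crude case and whitespace normalization) within a sliding time window. The ten-minute window and five-account threshold are hypothetical, and a match is only a lead for review: a genuine grassroots campaign in which supporters paste the same petition text can trip it too.

```python
from collections import defaultdict

def coordinated_texts(posts, window_s=600, min_accounts=5):
    """Return {normalized_text: accounts} for texts posted by at least `min_accounts`
    distinct accounts within any `window_s`-second window.

    `posts` is an iterable of (account_id, timestamp_seconds, text) tuples.
    The default window and threshold are hypothetical."""
    by_text = defaultdict(list)
    for account, ts, text in posts:
        key = " ".join(text.lower().split())  # crude normalization: case and whitespace only
        by_text[key].append((ts, account))

    flagged = {}
    for key, events in by_text.items():
        events.sort()
        left = 0
        for right in range(len(events)):
            # Slide the left edge forward until the window spans at most window_s seconds.
            while events[right][0] - events[left][0] > window_s:
                left += 1
            accounts = {acct for _, acct in events[left:right + 1]}
            if len(accounts) >= min_accounts:
                flagged[key] = sorted(accounts)
                break  # one qualifying window is enough to flag this text
    return flagged
```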

E-commerce Product Content

E-commerce platforms face unique moderation challenges that directly impact marketplace integrity and consumer trust. Product listings, seller descriptions, customer reviews, and Q&A sections all require careful oversight to protect consumers from fraud, counterfeit goods, and misleading information.

Product listings submitted by sellers often contain violations ranging from prohibited items to deceptive marketing practices. Moderators must screen for counterfeit goods, which remain one of the most persistent challenges on e-commerce platforms.

Luxury brands particularly suffer from sophisticated counterfeiters who create convincing fake listings with stolen product images. Platforms must also identify listings for prohibited items such as weapons, illegal drugs, and stolen goods.
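Catching stolen product photos typically relies on perceptual hashing, which yields similar fingerprints for visually similar images even after resizing or small brightness changes. The sketch below implements a basic average hash, assuming an imaging library has already reduced each photo to an 8x8 grid of grayscale values (that preprocessing step is omitted). The 5-bit distance threshold is an illustrative assumption; production systems generally use more robust hashes and compare listings against large indexes of brand-registered images.

```python
def average_hash(pixels: list[list[int]]) -> int:
    """64-bit average hash: one bit per pixel, set when the pixel is brighter than the mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits

def hamming_distance(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")

def likely_same_image(a: list[list[int]], b: list[list[int]], max_distance: int = 5) -> bool:
    """Hypothetical threshold: hashes within `max_distance` bits count as the same image."""
    return hamming_distance(average_hash(a), average_hash(b)) <= max_distance

# A synthetic 8x8 gradient, a slightly brightened copy, and an inverted version.
original = [[(r * 8 + c) * 4 for c in range(8)] for r in range(8)]
brightened = [[min(255, v + 3) for v in row] for row in original]
inverted = [[255 - v for v in row] for row in original]
print(likely_same_image(original, brightened))  # True
print(likely_same_image(original, inverted))    # False
```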

Misleading product information represents another critical challenge. Sellers sometimes exaggerate product capabilities or make false claims about certifications and materials.

For example, sellers might advertise jewelry as genuine gold when it is merely gold-plated, or claim electronics carry safety certifications they lack.

Customer reviews constitute particularly sensitive content because they directly influence purchasing decisions. Review manipulation has become increasingly sophisticated, with organized campaigns of fake positive reviews boosting fraudulent sellers or fake negative reviews attacking competitors.

Platforms like Amazon and eBay invest heavily in machine learning systems analyzing review patterns, verified purchase status, and linguistic markers to identify inauthentic reviews. The financial stakes extend beyond reputation to legal liability for facilitating sales of prohibited goods and regulatory scrutiny under frameworks like the European Union’s Digital Services Act.
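Marketplaces do not publish their detection models, but a toy heuristic shows the kind of pattern such systems look for. The sketch below flags a listing when a short window contains an unusually large batch of reviews, most of them from accounts without a verified purchase. The `Review` structure, the three-day window, and both thresholds are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Review:
    verified: bool   # True if the reviewer has a confirmed purchase of the item
    day: int         # days since the listing went live

def suspicious_review_burst(reviews: list[Review], window_days: int = 3,
                            min_reviews: int = 20,
                            max_unverified_share: float = 0.5) -> bool:
    """Flag a listing if any `window_days` span holds at least `min_reviews`
    reviews and more than `max_unverified_share` of them are unverified.
    All thresholds are hypothetical."""
    for start in sorted({r.day for r in reviews}):
        batch = [r for r in reviews if start <= r.day < start + window_days]
        if len(batch) >= min_reviews:
            unverified_share = sum(not r.verified for r in batch) / len(batch)
            if unverified_share > max_unverified_share:
                return True
    return False

organic = [Review(verified=True, day=d) for d in range(60)]   # one verified review per day
burst = [Review(verified=False, day=30) for _ in range(25)]   # 25 unverified reviews in one day
print(suspicious_review_burst(organic))          # False
print(suspicious_review_burst(organic + burst))  # True
```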

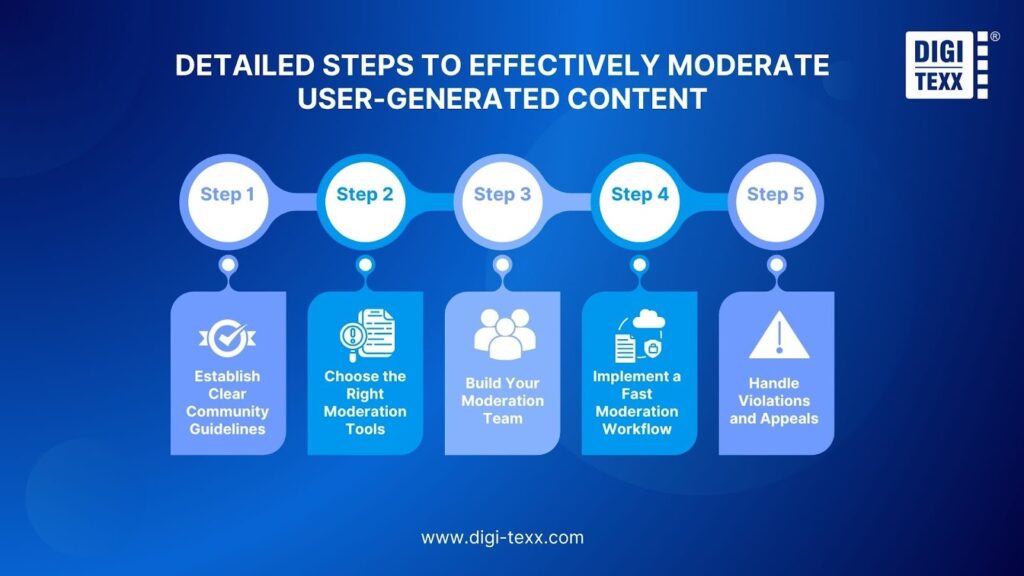

Detailed Steps To Effectively Moderate User-Generated Content

Step 1: Establish Clear Community Guidelines

Effective moderation begins with comprehensive, clearly articulated community guidelines that serve several critical organizational functions.

These policy documents set explicit behavioral expectations, provide moderators with consistent decision-making frameworks, protect platforms legally, and create accountability mechanisms.

What to Include in Your Guidelines

Effective community guidelines must translate abstract organizational values into concrete, actionable behavioral standards. Guidelines should clearly define unacceptable content categories, including hate speech targeting protected characteristics, explicitly sexual content, graphic violence, harassment, illegal activities, spam, and misinformation.

Include specific illustrative examples that demonstrate boundary cases. Rather than merely stating that harassment is prohibited, explain that repeatedly contacting someone after they have explicitly asked you to stop constitutes harassment, while posting someone’s private information without consent represents doxxing.

Guidelines should address both content standards and behavioral conduct expectations. Explain expectations around constructive disagreement methods, prohibitions against impersonation, requirements for accurate profile representation, and rules governing user interactions.

Make explicitly clear that attempting to circumvent moderation systems through evasion techniques will result in escalated consequences.

Transparency about enforcement processes builds essential user trust. Users should understand generally how violations are detected, what review process occurs, what consequences apply to different violation severity levels, and how they can appeal decisions.

This transparency gives users enough information to perceive the system as fair and accountable.

Examples of Effective Guidelines

The most successful community guidelines share common fundamental characteristics regardless of platform type. Reddit’s guidelines exemplify an effective multi-layered policy structure by combining platform-wide rules establishing baseline safety standards with community-specific guidelines managed by volunteer moderators.

GitHub’s Code of Conduct demonstrates how professional communities can establish clear behavioral expectations focused on fostering welcoming environments while encouraging constructive criticism focused on code quality rather than personal attacks.

Gaming platforms like Twitch have developed sophisticated guidelines addressing unique challenges in live-streaming environments, covering not just what streamers broadcast but also their responsibilities for subscriber behavior.

Education platforms serving children implement particularly stringent guidelines recognizing their vulnerable audiences, with zero-tolerance policies for sexually suggestive content involving minors and educational components teaching digital citizenship.

Step 2: Choose the Right Moderation Tools

Technology selection fundamentally shapes your moderation program’s capabilities, operational efficiency, decision accuracy, and scalability potential.

The modern content moderation landscape offers solutions ranging from comprehensive enterprise-grade platforms to specialized point solutions.

AI-Powered Moderation Tools

Artificial intelligence has revolutionized content moderation capabilities by enabling platforms to analyze enormous content volumes with speed and consistency impossible for human teams operating alone.

Text moderation AI leverages natural language processing to analyze submitted content against trained machine learning models, transcending simple keyword matching to understand context, speaker intent, and sentiment.

Advanced models can detect hate speech even when users deliberately substitute characters with numbers or symbols to evade filters. The most sophisticated services, including OpenAI’s Moderation API and Jigsaw’s Perspective API, use transformer-based language models that capture linguistic nuance across multiple dimensions simultaneously.
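For a text API such as Perspective, integration mostly consists of building a request and reading back per-attribute scores. The sketch below follows Perspective's publicly documented `comments:analyze` request and response shape (`comment.text`, `requestedAttributes`, and `attributeScores[...].summaryScore.value`); it only builds and parses JSON, leaving out the HTTP call and API key, and the field names should be checked against Google's current documentation before relying on them.

```python
import json

# Endpoint for reference only; the request itself (a POST with ?key=API_KEY) is not sent here.
PERSPECTIVE_URL = "https://commentanalyzer.googleapis.com/v1alpha1/comments:analyze"

def build_request(text: str, attributes=("TOXICITY",), languages=("en",)) -> str:
    """Build the JSON body for a comments:analyze call."""
    body = {
        "comment": {"text": text},
        "languages": list(languages),
        "requestedAttributes": {name: {} for name in attributes},
    }
    return json.dumps(body)

def summary_scores(response_body: str) -> dict[str, float]:
    """Map each returned attribute to its summary probability score."""
    data = json.loads(response_body)
    return {name: attr["summaryScore"]["value"]
            for name, attr in data.get("attributeScores", {}).items()}

# Hand-written sample response for illustration, not real API output.
sample = '{"attributeScores": {"TOXICITY": {"summaryScore": {"value": 0.91, "type": "PROBABILITY"}}}}'
print(summary_scores(sample))  # {'TOXICITY': 0.91}
```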

Image and video moderation relies on computer vision and deep learning models trained on millions of labeled examples. Services like Amazon Rekognition, Google Cloud Vision API, Microsoft Azure AI Content Safety (the successor to Azure Content Moderator), and specialized providers such as Sightengine and Hive can detect explicit sexual content, violence, weapons, drugs, hate symbols, and numerous other categories.

The latest generation addresses emerging challenges, including synthetic media detection, with specialized models identifying images created by generators like DALL-E and Stable Diffusion.

However, AI moderation carries important inherent limitations. According to Mordor Intelligence, audits of large language and vision models show patterns of over-removal for non-English content and slower remedial action on harmful material in low-resource languages[5].

Cultural context remains profoundly challenging, edge cases often go undetected until models receive retraining, and false positives remain inevitable.

Manual Moderation Platforms

Despite remarkable AI advances, human moderators remain absolutely indispensable for content review requiring nuanced cultural understanding, ethical judgment, and empathy.

Manual moderation platforms provide the interfaces, workflows, and tools that enable human reviewers to efficiently process flagged content while maintaining decision consistency and reviewer well-being.

The most effective manual moderation platforms incorporate queue management systems that organize flagged content by priority, so the most severe and time-sensitive cases reach reviewers first.
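At its core, a review queue is a priority queue. The sketch below orders flagged items by a severity rank first and by the time they were flagged second, so the most dangerous and longest-waiting cases surface first. The category names and their ordering in `SEVERITY_RANK` are hypothetical; each platform derives its own from policy.

```python
import heapq
import itertools

# Hypothetical severity ranking: lower numbers are reviewed sooner.
SEVERITY_RANK = {"child_safety": 0, "violent_threat": 1, "harassment": 2, "spam": 3}

class ReviewQueue:
    def __init__(self) -> None:
        self._heap: list[tuple[int, float, int, str]] = []
        self._tiebreak = itertools.count()  # keeps equal-priority items in insertion order

    def push(self, content_id: str, category: str, flagged_at: float) -> None:
        rank = SEVERITY_RANK.get(category, len(SEVERITY_RANK))  # unknown categories go last
        heapq.heappush(self._heap, (rank, flagged_at, next(self._tiebreak), content_id))

    def pop(self) -> str:
        """Remove and return the ID of the most urgent item."""
        return heapq.heappop(self._heap)[-1]

    def __len__(self) -> int:
        return len(self._heap)

q = ReviewQueue()
q.push("c1", "spam", flagged_at=1.0)
q.push("c2", "violent_threat", flagged_at=5.0)
q.push("c3", "violent_threat", flagged_at=2.0)
q.push("c4", "new_category", flagged_at=0.0)
print([q.pop() for _ in range(len(q))])  # ['c3', 'c2', 'c1', 'c4']
```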

Moderators need clear action options, including approve, remove, edit, escalate, or flag for legal review. Case management features enable the investigation of complex violations requiring examination of multiple content pieces or analysis of patterns across accounts.

Quality assurance capabilities ensure moderation consistency through random sampling of decisions for supervisory review, tracking individual moderator accuracy rates, and providing constructive feedback mechanisms.

Leading platforms include specialized solutions like CleanSpeak, Besedo’s Implio platform, and Arena, though many large platforms build custom internal tools tailored to their specific needs.

Hybrid Approaches

The most effective moderation strategies in 2026 invariably combine artificial intelligence and human intelligence in complementary ways.

According to Data Bridge Market Research, growing adoption of cloud-based moderation solutions offering flexibility and cost-effectiveness drives sustained growth as businesses seek more efficient and scalable content management strategies[3].

Typical hybrid workflows route content through multiple sequential stages. AI systems conduct a rapid first-pass analysis of all user-submitted content within milliseconds. Clearly acceptable content is published immediately, clearly violating content is removed automatically, and content on which the AI has only moderate confidence is routed to human reviewers.

This approach maximizes operational efficiency by automating clear-cut cases while ensuring human judgment applies to ambiguous situations.
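This three-way split can be expressed as two thresholds on a classifier's estimated probability that an item violates policy. The threshold values below are hypothetical; in practice they are tuned per policy category, and widening the middle band sends more content to humans in exchange for fewer automated mistakes.

```python
from enum import Enum

class Route(Enum):
    PUBLISH = "publish"
    HUMAN_REVIEW = "human_review"
    REMOVE = "remove"

def route(violation_score: float,
          approve_below: float = 0.2,        # hypothetical threshold
          remove_at_or_above: float = 0.95   # hypothetical threshold
          ) -> Route:
    """Route an item by the model's estimated probability that it violates policy."""
    if violation_score >= remove_at_or_above:
        return Route.REMOVE          # high confidence of a violation
    if violation_score < approve_below:
        return Route.PUBLISH         # high confidence the item is fine
    return Route.HUMAN_REVIEW        # uncertain: a person decides

for score in (0.05, 0.6, 0.97):
    print(score, route(score).value)
```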

The routing logic grows increasingly sophisticated through continuous machine learning, tracking which AI decisions human reviewers override and using corrections to improve models. Systems detect behavioral patterns over time, treating accounts with strong positive history more leniently while subjecting new accounts or those with prior violations to stricter review.
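Tracking which AI decisions reviewers override needs little more than a log of what the model decided and what the reviewer decided. The sketch below computes an override rate per policy category; the tuple format is a hypothetical stand-in for whatever a platform's review tool records. Categories with high override rates show where thresholds or training data need attention, and the overridden items are natural candidates for new training labels.

```python
from collections import Counter

def override_rates(review_log):
    """review_log: iterable of (category, ai_decision, human_decision) tuples
    (a hypothetical log format). Returns {category: share of AI decisions reversed}."""
    totals, overrides = Counter(), Counter()
    for category, ai_decision, human_decision in review_log:
        totals[category] += 1
        if ai_decision != human_decision:
            overrides[category] += 1
    return {category: overrides[category] / totals[category] for category in totals}

log = [
    ("spam", "remove", "remove"),
    ("spam", "remove", "approve"),
    ("hate_speech", "remove", "remove"),
    ("hate_speech", "approve", "approve"),
]
print(override_rates(log))  # {'spam': 0.5, 'hate_speech': 0.0}
```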

Feedback loops enable continuous performance improvement, with human reviewers providing training data, users reporting content providing additional signals, and regular audits identifying systemic biases requiring adjustments.

Step 3: Build Your Moderation Team

Technology alone cannot achieve truly effective content moderation. The human element remains critical for nuanced decision-making, handling edge cases, providing empathy in user interactions, and continuously improving systems based on emerging patterns.

In-House vs. Outsourced Moderators

Organizations face a fundamental strategic choice between building internal moderation teams or outsourcing to specialized business process outsourcing vendors.

In-house teams develop deep familiarity with your specific platform, community culture, and brand values that external reviewers struggle to replicate. They integrate seamlessly with product, legal, and policy teams, enabling rapid coordination.

However, in-house moderation presents significant challenges, including substantial investment in recruitment, training, and management infrastructure.

The emotional toll of reviewing disturbing content requires robust psychological support systems and wellness programs. Organizations must maintain coverage across global time zones and multiple languages, potentially requiring distributed teams or 24/7 shift work.

Outsourced moderation through BPO providers offers established processes, training programs, and quality assurance systems refined across multiple clients. They provide rapid scalability and operate follow-the-sun models with native language speakers, ensuring continuous coverage. However, outsourcing trade-offs include potentially reduced contextual understanding and possible quality control challenges when vendors balance multiple clients.

Many platforms ultimately adopt hybrid staffing models, maintaining small internal teams for policy development, quality oversight, and high-sensitivity content while outsourcing high-volume routine moderation. This balanced approach leverages outsourcing efficiency for scale while preserving internal expertise for judgment-intensive work.

Training Requirements

Comprehensive training is absolutely essential for effective content review, consistent decision-making, and moderator well-being. Initial training programs should span multiple weeks, covering policy training, cultural competency training, tool proficiency training on moderation platforms, and psychological preparation addressing expected emotional impacts and healthy coping strategies.

Continuing education maintains moderator effectiveness as policies evolve and new abuse tactics emerge. Regular calibration sessions bring moderators together to discuss challenging cases and align interpretations.

Policy update training ensures moderators understand changes and their rationale. Specialized training helps moderators develop expertise in specific content areas like synthetic media detection or financial scams.

Step 4: Implement a Fast Moderation Workflow

The operational workflow governing how content flows from submission through review to publication or removal dramatically impacts both user experience quality and platform safety levels. Workflow design must carefully balance competing priorities, including decision speed, judgment accuracy, resource efficiency, and user satisfaction.

Pre-Moderation vs. Post-Moderation

Pre-moderation involves reviewing content comprehensively before it becomes visible to other platform users. When someone submits content, it enters a review queue where moderators or automated systems evaluate it before publication.

This approach provides maximum control, ensuring no policy-violating content ever becomes public. Pre-moderation proves essential for high-risk scenarios, including children’s platforms, legally sensitive environments, or situations where content cannot be unseen once published.

However, pre-moderation creates meaningful user experience challenges. Delayed publication frustrates users expecting instant engagement, real-time conversations become impossible, and high-volume platforms face overwhelming review backlogs with content potentially delayed hours or days.

Post-moderation allows content to be published immediately while moderators review it afterward through reactive or proactive monitoring. This approach maintains real-time interaction patterns that modern users expect while still enabling policy enforcement.

Post-moderation’s primary advantage lies in preserving natural conversation flow and user engagement. However, the critical trade-off involves risk exposure, with harmful content becoming visible to users before removal.

Most sophisticated platforms implement hybrid timing approaches that intelligently apply different strategies to different content types or user segments. Profile information might require pre-moderation, new account content might undergo review for the first several posts, high-risk content categories like images might require review before publication, while text comments post immediately, and content from trusted users with strong histories might bypass pre-moderation entirely.
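A timing policy like this usually reduces to a small set of ordered rules. The sketch below encodes the examples in the paragraph above; the `Submission` fields and the five-post probation period are hypothetical choices, and real platforms layer many more signals on top.

```python
from dataclasses import dataclass

NEW_ACCOUNT_PROBATION_POSTS = 5  # hypothetical: pre-moderate an account's first five posts

@dataclass
class Submission:
    content_type: str        # e.g. "profile", "image", "comment"
    author_post_count: int   # posts this account has previously had approved
    author_trusted: bool     # e.g. long history with no upheld violations

def requires_pre_moderation(s: Submission) -> bool:
    """Decide whether a submission is held for review before it becomes visible."""
    if s.author_trusted:
        return False  # trusted users bypass pre-moderation entirely
    if s.content_type in {"profile", "image"}:
        return True   # higher-risk formats are always reviewed first
    return s.author_post_count < NEW_ACCOUNT_PROBATION_POSTS  # new accounts are held

print(requires_pre_moderation(Submission("comment", 2, False)))   # True: new account
print(requires_pre_moderation(Submission("comment", 40, False)))  # False: post-moderated
print(requires_pre_moderation(Submission("image", 40, True)))     # False: trusted user
```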

Escalation Procedures

Complex content decisions require clear escalation paths, routing challenging cases to appropriate decision-makers with requisite expertise while maintaining decision velocity. First-line moderators handle the vast majority of decisions using clear policy guidelines.

However, they should have explicit escalation triggers, including borderline cases lacking confidence, potential legal issues, high-profile accounts, novel abuse patterns, or content requiring external coordination.

Senior moderators serve as the second escalation tier, providing authoritative interpretation for ambiguous cases and maintaining decision consistency across the team. Policy teams receive escalations involving policy interpretation disputes or novel situations requiring new policy development.

Legal counsel receives immediate escalation for content involving potential criminal activity, threats of imminent harm, or child sexual abuse material. Time-based escalation policies prevent cases from stalling indefinitely, with automatic escalation if moderators cannot resolve cases within specified timeframes.
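The escalation ladder can be encoded as a mapping from triggers to tiers plus a time-based bump. In the sketch below, the trigger names, the mapping, and the four-hour limit are hypothetical. The time-based rule deliberately stops at the policy tier: legal escalation is driven by what the content is, not by how long a case has waited.

```python
from enum import IntEnum

class Tier(IntEnum):
    FRONTLINE = 1
    SENIOR = 2
    POLICY = 3
    LEGAL = 4

# Hypothetical mapping from escalation triggers to the minimum tier that must handle them.
TRIGGER_TIER = {
    "child_sexual_abuse_material": Tier.LEGAL,
    "imminent_harm": Tier.LEGAL,
    "criminal_activity": Tier.LEGAL,
    "novel_policy_question": Tier.POLICY,
    "borderline": Tier.SENIOR,
    "high_profile_account": Tier.SENIOR,
}

MAX_OPEN_SECONDS = 4 * 3600  # hypothetical limit before a stalled case moves up a tier

def escalation_tier(triggers, current=Tier.FRONTLINE, open_seconds=0.0) -> Tier:
    """Return the tier a case should sit at, given its triggers and how long it has been open."""
    tier = max([current] + [TRIGGER_TIER[t] for t in triggers if t in TRIGGER_TIER])
    if open_seconds > MAX_OPEN_SECONDS and tier < Tier.POLICY:
        tier = Tier(tier + 1)  # time-based escalation stops at the policy tier
    return tier
```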

Step 5: Handle Violations and Appeals

How platforms handle policy violations and user appeals significantly impacts both community safety through deterrence and user trust through perceptions of fairness. Effective systems balance consistent enforcement with procedural fairness.

Violation responses should follow graduated enforcement principles, matching consequences to violation severity and user history. First-time minor violations often warrant educational warnings without penalties, recognizing that many violations result from misunderstanding guidelines. Repeated violations or more serious offenses progress to temporary restrictions, including posting restrictions, comment disabling, or temporary account suspensions. Severe or chronic violations result in permanent account termination, applying to egregious violations like threats of violence or persistent harassment campaigns.
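A graduated ladder is straightforward to express in code. The sketch below escalates by the number of prior upheld strikes and lets designated severe violations skip straight to termination. The step names and the `SEVERE_VIOLATIONS` set are hypothetical, and many platforms also let strikes expire after a period of good behavior.

```python
# Hypothetical enforcement ladder, from lightest to heaviest consequence.
LADDER = ["warning", "24h_posting_restriction", "7_day_suspension", "permanent_ban"]

# Hypothetical categories severe enough to bypass the ladder entirely.
SEVERE_VIOLATIONS = {"violent_threat", "child_sexual_abuse_material"}

def enforcement_action(violation: str, prior_strikes: int) -> str:
    """Pick a consequence from the violation type and the user's prior upheld strikes."""
    if violation in SEVERE_VIOLATIONS:
        return LADDER[-1]
    return LADDER[min(prior_strikes, len(LADDER) - 1)]  # stay on the last rung once reached

print(enforcement_action("spam", prior_strikes=0))            # warning
print(enforcement_action("spam", prior_strikes=2))            # 7_day_suspension
print(enforcement_action("violent_threat", prior_strikes=0))  # permanent_ban
```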

Appeals processes provide essential safety valves against moderation errors that inevitably occur in any large-scale system. Users should have clearly communicated mechanisms to challenge decisions, with responses provided within defined timeframes by reviewers who did not make the original decision.

Appeals are evaluated on whether the original decision correctly applied current policies and whether the relevant context was considered. Fair, correctable enforcement matters because users who trust that they will not be harassed or spammed, and that mistakes will be fixed, are more likely to stick around and join the conversation.
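The independence requirement is easy to enforce at assignment time. The sketch below picks an appeal reviewer at random from everyone except the moderator who made the original call and computes a response deadline. The 72-hour window is a hypothetical service level, not a regulatory figure.

```python
import random
from datetime import datetime, timedelta
from typing import Optional

APPEAL_RESPONSE_WINDOW = timedelta(hours=72)  # hypothetical service level

def assign_appeal(original_moderator: str, reviewers: list[str], filed_at: datetime,
                  rng: Optional[random.Random] = None) -> tuple[str, datetime]:
    """Return (reviewer, deadline), never assigning the original decision-maker."""
    eligible = [r for r in reviewers if r != original_moderator]
    if not eligible:
        raise ValueError("no independent reviewer available; escalate instead")
    chooser = rng or random.Random()
    return chooser.choice(eligible), filed_at + APPEAL_RESPONSE_WINDOW

reviewer, due = assign_appeal("alice", ["alice", "bob", "carol"], datetime(2026, 3, 1, 9, 0))
print(reviewer in {"bob", "carol"}, due)  # True 2026-03-04 09:00:00
```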

Best Practices for Effective UGC Moderation

Consistently successful content moderation operations share common practices maximizing effectiveness while maintaining operational efficiency. Start with clear objectives aligned to specific measurable business goals.

Balance automation and human judgment appropriately based on each approach’s comparative strengths. Use AI systems for initial screening and high-volume processing while reserving human review for ambiguous cases requiring cultural context.

Continuously evolve policies based on observed patterns emerging from operational experience. Monitor systematically what violations occur most frequently and what new abuse tactics emerge. Invest seriously in moderator wellness, recognizing the psychological toll of reviewing disturbing content. Provide access to licensed mental health professionals and implement content rotation, preventing extended exposure to the most disturbing material.

Engage communities actively in moderation through user reporting systems that empower users to flag problematic content.

Furthermore, reputation systems can give more weight to reliable contributors and reporters, while transparency about removal criteria helps users understand decisions and encourages them to contribute to community health.
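One common form of reputation system weights user reports by how often each reporter's past reports were upheld, so a handful of reliable reporters can surface content quickly while mass false reporting carries little weight. The sketch below uses a smoothed accuracy score in which new reporters start at 0.5; the formula and the review threshold of 3.0 are hypothetical choices.

```python
REVIEW_THRESHOLD = 3.0  # hypothetical weighted score that sends an item to review

def reporter_weight(upheld: int, total: int) -> float:
    """Smoothed share of a reporter's past reports that moderators upheld.
    Adding 1 and 2 (Laplace smoothing) gives brand-new reporters a weight of 0.5."""
    return (upheld + 1) / (total + 2)

def should_review(reporter_ids, history, threshold=REVIEW_THRESHOLD) -> bool:
    """reporter_ids: who reported the item; history: id -> (upheld, total) past reports.
    Each reporter counts once, however many times they reported the same item."""
    score = sum(reporter_weight(*history.get(r, (0, 0))) for r in set(reporter_ids))
    return score >= threshold

history = {f"reliable{i}": (9, 10) for i in range(4)}
history.update({f"brigade{i}": (0, 20) for i in range(10)})
print(should_review([f"reliable{i}" for i in range(4)], history))  # True  (4 x 10/12, about 3.33)
print(should_review([f"brigade{i}" for i in range(10)], history))  # False (10 x 1/22, about 0.45)
```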

Common Mistakes to Avoid

Even well-intentioned platforms make predictable errors that undermine moderation effectiveness. Over-reliance on automation without sufficient human oversight leads to systematic errors that AI cannot detect.

Inconsistent policy enforcement frustrates users who observe apparently similar content receiving different treatment. Inadequate transparency about moderation processes breeds distrust and conspiracy theories.

Neglecting emerging abuse patterns allows sophisticated bad actors to exploit policy gaps. Insufficient cultural competency leads to systematic bias against certain communities or regions.

According to industry research, one in four moderators develops moderate-to-severe distress, driving higher turnover and reputational risk[5].

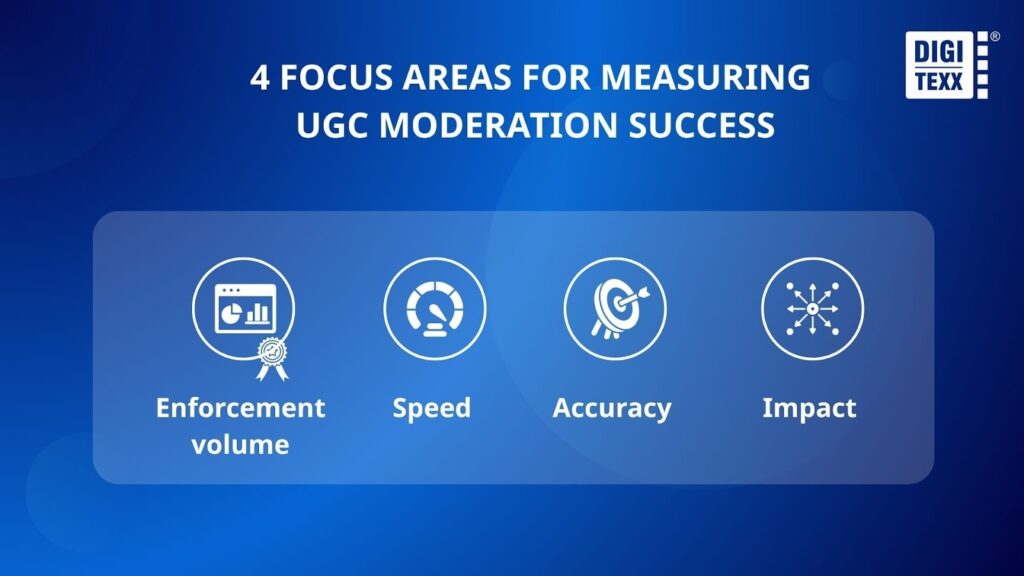

Measuring Moderation Success: Focus Areas

Effective moderation programs require robust measurement systems, providing visibility into performance and identifying improvement opportunities.

Enforcement volume metrics provide basic operational visibility, including total content processed and volume moderated by humans versus automation.

Speed metrics measure how quickly violations are addressed, tracking response time and time to action.

Accuracy metrics assess decision quality through the error rate; precision, which tracks what proportion of actioned content truly violated policies; and recall, which measures what proportion of actual violations were detected.
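These accuracy metrics come from an audited sample in which expert reviewers establish the correct decision for each item. The sketch below computes them from (actioned, truly violating) pairs; the sample counts are invented for illustration. Recall in particular requires auditing content that was left up as well, since missed violations only show up there.

```python
def accuracy_metrics(audited):
    """audited: iterable of (actioned, truly_violating) boolean pairs."""
    tp = fp = fn = tn = 0
    for actioned, violating in audited:
        if actioned and violating:
            tp += 1   # correct removal
        elif actioned:
            fp += 1   # removed, but did not violate policy
        elif violating:
            fn += 1   # missed violation
        else:
            tn += 1   # correctly left up
    total = tp + fp + fn + tn
    return {
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "error_rate": (fp + fn) / total if total else 0.0,
    }

# Hypothetical audit of 1,000 items.
sample = ([(True, True)] * 80 + [(True, False)] * 20
          + [(False, True)] * 10 + [(False, False)] * 890)
print(accuracy_metrics(sample))
```

For this sample, precision is 0.8 (80 of 100 removals were correct), recall is about 0.89 (80 of 90 violations were caught), and the error rate is 0.03.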

Impact metrics estimate moderation effectiveness through the prevalence rate, which measures the percentage of visible content that violates policies.

Additionally, the violative view rate tracks what percentage of total content views involve material that breaches these guidelines.
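Both impact metrics are simple ratios, but they answer different questions, which a small example makes clear. The figures below are hypothetical: prevalence is estimated from a random sample of visible items, while the violative view rate weights each item by how often it was seen.

```python
def prevalence(sample_is_violating: list[bool]) -> float:
    """Share of a random sample of visible items that reviewers labeled as violating."""
    return sum(sample_is_violating) / len(sample_is_violating)

def violative_view_rate(items: list[tuple[int, bool]]) -> float:
    """items: (view_count, is_violating) pairs. Views of violating items / all views."""
    total_views = sum(views for views, _ in items)
    violating_views = sum(views for views, bad in items if bad)
    return violating_views / total_views if total_views else 0.0

# One violating item out of 100, but it went viral.
items = [(50_000, True)] + [(1_000, False)] * 99
print(prevalence([bad for _, bad in items]))  # 0.01
print(violative_view_rate(items))
```

Here only 1 percent of items violate policy, yet roughly a third of all views (50,000 of 149,000) land on violating content, which is why the two metrics are usually reported together.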

Frequently Asked Questions (FAQ)

What is the difference between proactive and reactive moderation?

Proactive moderation involves systematically reviewing content regardless of whether users report it, while reactive moderation responds specifically to user reports. Most platforms combine both approaches, using proactive methods for high-risk content categories while relying on reactive reporting for lower-risk areas.

How much does content moderation cost?

Moderation costs vary enormously based on content volume and quality requirements. Automated moderation through API services might cost fractions of a cent per item, while human moderation ranges from a few cents per item for outsourced review to several dollars per item for specialized in-house expertise. Comprehensive programs can cost millions annually for large platforms.
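A back-of-the-envelope model shows how these per-item prices combine. Every volume, share, and price below is a hypothetical input chosen to sit within the ranges just mentioned, not a quoted vendor rate.

```python
def monthly_moderation_cost(items: int,
                            auto_price: float = 0.001,       # hypothetical $ per item screened by an API
                            human_share: float = 0.05,       # hypothetical share sent to outsourced review
                            human_price: float = 0.05,       # hypothetical $ per outsourced review
                            specialist_share: float = 0.01,  # hypothetical share of reviewed items escalated
                            specialist_price: float = 3.00   # hypothetical $ per in-house specialist review
                            ) -> float:
    """Estimate monthly spend in dollars for a three-layer moderation pipeline."""
    automated = items * auto_price
    human_items = items * human_share
    human = human_items * human_price
    specialist = human_items * specialist_share * specialist_price
    return round(automated + human + specialist, 2)

print(monthly_moderation_cost(10_000_000))  # 50000.0
```

At 10 million items a month, that is $10,000 for automated screening, $25,000 for outsourced review, and $15,000 for specialist review, or about $600,000 a year. With these inputs, changing the human review share moves the total far more than changing the API price does.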

Can AI completely replace human moderators?

No. While AI dramatically improves efficiency, human judgment remains essential for cultural context, novel situations, appeals review, and edge cases where empathy and common sense outweigh algorithmic classification. Best results usually come from combining both approaches.

What legal requirements apply to content moderation?

Legal requirements vary significantly by jurisdiction. The EU Digital Services Act imposes transparency requirements and response time standards. The UK Online Safety Act requires platforms to prevent illegal content.

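Section 230 in the United States largely shields platforms from liability for user-posted content, but it does not override federal criminal law or intellectual property claims, and separate federal law requires providers to report apparent child sexual abuse material. Platforms operating globally must understand and comply with requirements in all markets they serve.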

Beyond 2026: The Future of Trust and Safety

Content moderation continues to evolve rapidly as new technologies and regulatory frameworks reshape the landscape. Generative AI brings both challenges, as synthetic content floods platforms, and opportunities, as AI helps moderators generate policy-compliant alternatives and explain decisions. Regulatory pressure will continue to intensify globally, with more jurisdictions implementing comprehensive online safety legislation.

The fundamental tension between free expression and safety will persist. Success will require ongoing investment in sophisticated technology, expert human judgment, clear policies, and meaningful engagement with communities.

According to the Content Moderation Services Market Report by Research Nester, platform owners should look at ways to reduce the need for content moderation and move to a preemptive approach rather than a prohibitive one[1]. Organizations that treat content moderation as a core capability rather than a necessary evil will build safer, more trusted, more successful platforms.

References:

[1] Akshay Pardeshi (2025). Content Moderation Services Market Size, Trends & Forecast to 2035. [online] Research Nester. Available at: https://www.researchnester.com/reports/content-moderation-services-market/7630.

[2] Amra and Elma LLC (2025). User-Generated Content Statistics 2025. [online] Available at: https://www.amraandelma.com/user-generated-content-statistics/.

[3] Data Bridge Market Research (2024). Global Content Moderation Solution Market Size, Share, and Trends Analysis Report – Industry Overview and Forecast to 2032. [online] Data Bridge Market Research. Available at: https://www.databridgemarketresearch.com/reports/global-content-moderation-solutions-market [Accessed 3 Feb. 2026].

[4] Grand View Research (2023). Content Moderation Services Market Size Report, 2030. [online] Available at: https://www.grandviewresearch.com/industry-analysis/content-moderation-services-market-report.

[5] Mordor Intelligence (2025). Content Moderation Market Size 2030 & Industry Statistics. [online] Mordor Intelligence. Available at: https://www.mordorintelligence.com/industry-reports/content-moderation-market.

[6] Sudeep Pednekar (2025). Global Content Moderation Solutions Market By Deployment Type (On-Premise, Cloud-based), Application (Media & Entertainment, Retail & E-Commerce, Packaging & Labelling, Healthcare), By Geographic Scope And Forecast. [online] Verified Market Research. Available at: https://www.verifiedmarketresearch.com/product/content-moderation-solutions-market/ [Accessed 3 Feb. 2026].

[7] Wipro (2019). Challenges & Future of User Generated Content (UGC) – Wipro. [online] Available at: https://www.wipro.com/business-process/content-moderation-a-perspective-on-the-present-and-future-implications/ [Accessed 3 Feb. 2026].

[8] Expert Market Research (n.d.). Global Content Moderation Solutions Market Report and Forecast 2024-2032. [online] Available at: https://www.expertmarketresearch.com/reports/content-moderation-solutions-market.