As global e-commerce enters 2025, we are witnessing a fundamental paradigm shift from competing on price and delivery speed to a battle for “Digital Trust.” Modern search algorithms, particularly Google’s core updates, are placing unprecedented weight on E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness). Consequently, choosing the right mix of AI and human content moderation solutions has become a decisive factor for brand survival.

The explosion of User-Generated Content (UGC), which ranges from product reviews and unboxing videos to live commerce streams, has created a massive governance challenge. Data from the DHL E-Commerce Trends Report 2025 indicates that 70% of global consumers expect to shop primarily through social media by 2030, bypassing traditional websites entirely[1]. This poses a difficult puzzle: How can platforms maintain safety and reputation when content volume exceeds human processing capacity, while still retaining the nuance and empathy customers demand?

This in-depth report analyzes both the confrontation and the symbiosis between Artificial Intelligence (AI) and human content moderation. Drawing on the latest 2025 market data, international standards such as ISO/IEC 25389, and case studies from Etsy, Amazon, and Pinterest, we outline how to reshape content governance strategies to optimize ROI and protect brand integrity.

AI Content Moderation: Unmatched Capabilities and Advantages

The year 2025 marks the maturity of Multimodal Large Language Models (MLLMs). When evaluating AI vs human content moderation solutions, the speed and scalability of AI remain its most formidable weapons.

Speed and Scalability at Enterprise Level

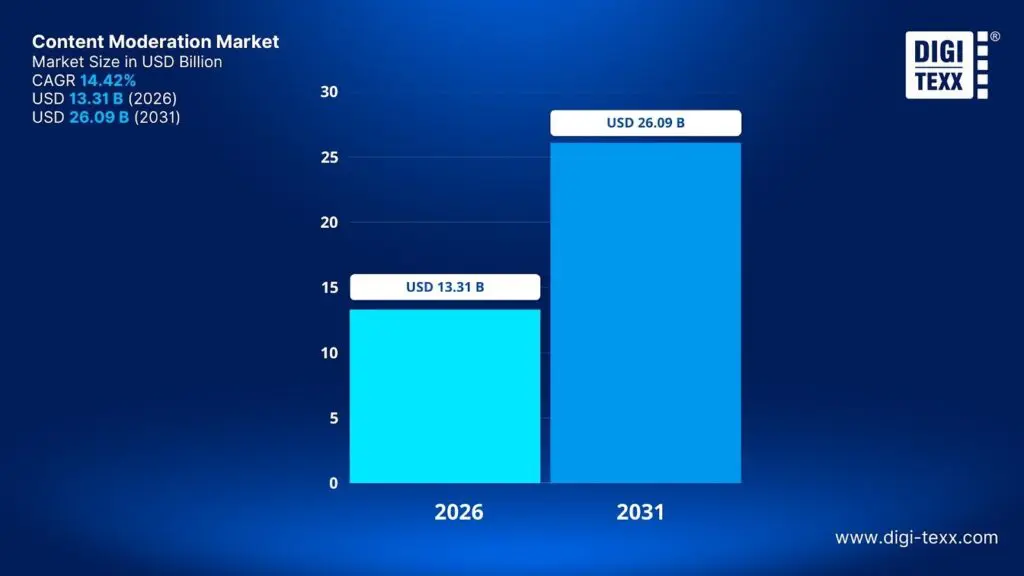

The exponential growth of online content makes purely manual moderation impractical at the scale of large platforms. Reports show that by 2026, the global content moderation market is expected to reach 11.31 billion USD, with a Compound Annual Growth Rate (CAGR) of 14.42%[2]. This growth reflects the urgent demand for automated moderation.

Major e-commerce platforms like Mercari or Etsy must handle billions of listings. Mercari, with over 2 billion listed items and 20 million monthly active users, was forced to develop an “Auto Content Moderation” system based on Machine Learning to proactively detect violations before they appear on the user interface[3].

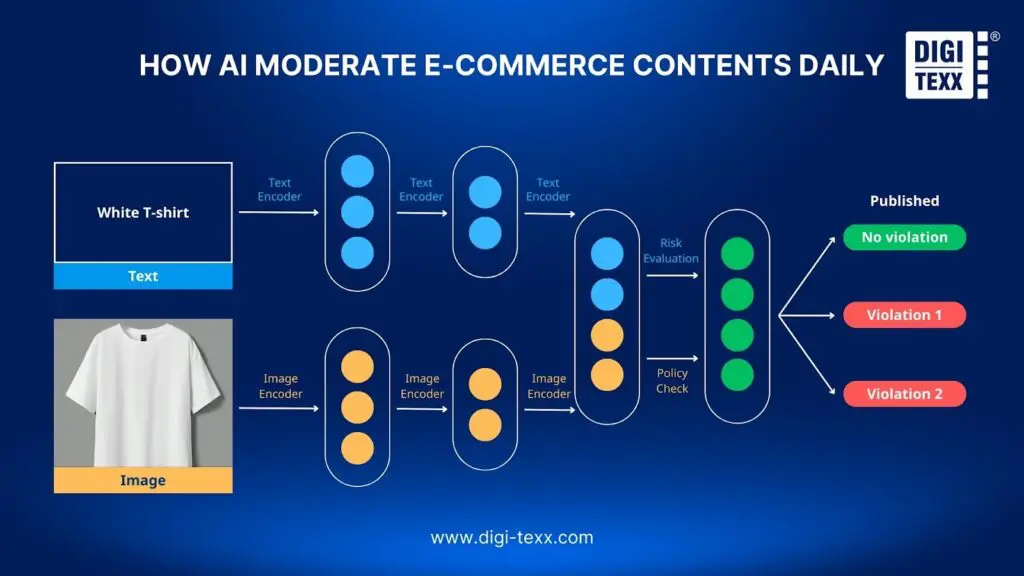

AI’s scalability is demonstrated through parallel processing. An AI system can ingest, analyze, and decide on thousands of images and product descriptions within milliseconds. Pinterest has built a “real-time radar” using AI to score billions of Pins daily, extracting rich signals and metadata to detect policy-violating content before it is even reported by users. In an environment where time-to-market directly impacts Gross Merchandise Volume (GMV), the delay of manual moderation is an unacceptable bottleneck.
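To make the parallel-processing point concrete, here is a minimal Python sketch that fans a batch of listings out to a pool of worker threads. The `score_item` function and its keyword list are hypothetical stand-ins for a real model call; threads are a reasonable fit only under the assumption that, in practice, each call is an I/O-bound request to an inference service.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical keyword list standing in for a trained model.
SUSPICIOUS_TERMS = {"replica", "counterfeit"}


def score_item(description: str) -> float:
    """Toy violation score in [0, 1] for one listing description.

    A real system would call a model or an inference endpoint here.
    """
    words = description.lower().split()
    hits = sum(1 for word in words if word in SUSPICIOUS_TERMS)
    return min(1.0, hits / 2)


def score_batch(descriptions: list[str], workers: int = 8) -> list[float]:
    """Score many listings concurrently; results keep the input order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(score_item, descriptions))


listings = [
    "Handmade ceramic vase",
    "Replica designer bag with counterfeit logo",
]
print(score_batch(listings))
```

`pool.map` returns results in input order, so each score can be matched back to its listing without extra bookkeeping.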

24/7 Automated Processing and Real-time Detection

One of AI’s clearest advantages over human moderation is its ability to operate continuously, unconstrained by shifts, time zones, or biological fatigue. This is particularly vital for the “Social Commerce” and “Live Shopping” trends dominating 2025.

In livestream shopping sessions, content is created and consumed simultaneously. Hate speech, pornographic imagery, or fraudulent behavior appearing on a livestream must be blocked immediately, not 15 minutes later. Modern AI models like Gemini 2.0 Flash or GPT-4o have proven their multimodal processing capabilities (image, audio, and text) with extremely low latency. 2025 benchmark studies show AI can achieve very high recall rates for clear risk categories such as Drugs, Alcohol, and Tobacco (DAT) or violent imagery, ensuring brand safety in real-time.
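One way to enforce “blocked immediately, not 15 minutes later” is to gate each livestream chat message on a moderation call with a hard latency budget. The sketch below assumes a hypothetical async `classify` function and a 200 ms budget. If the model does not answer in time, the message is held rather than shown: a fail-closed design choice that trades a little latency for safety.

```python
import asyncio


async def classify(message: str) -> bool:
    """Hypothetical async moderation call; True means the message violates policy."""
    await asyncio.sleep(0.01)  # simulated model latency
    return "scam-link" in message.lower()


async def gate_message(message: str, budget_s: float = 0.2) -> str:
    """Decide on a chat message before viewers see it.

    If the classifier does not answer within the latency budget, the message
    is held for review instead of being shown (fail closed).
    """
    try:
        violates = await asyncio.wait_for(classify(message), timeout=budget_s)
    except asyncio.TimeoutError:
        return "hold"
    return "block" if violates else "show"


async def main() -> None:
    for message in ["Love this jacket!", "Free gift at scam-link.example"]:
        print(message, "->", await gate_message(message))


asyncio.run(main())
```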

AI’s 24/7 presence also prevents bad actors from exploiting off-peak hours, such as 3 AM local time, to post counterfeit products or spam content. This is a common tactic used to bypass human moderation teams working standard office hours.

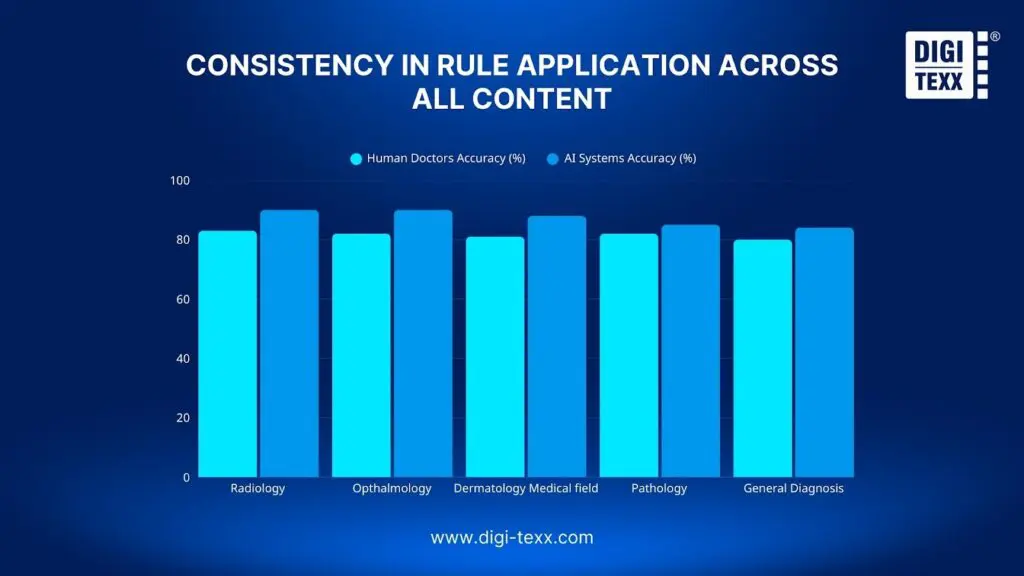

Consistency in Rule Application Across All Content

Humans, no matter how well-trained, are subject to cognitive bias, emotions, and fatigue. A moderator might evaluate an image differently at the end of a shift compared to the beginning. Conversely, AI delivers far more consistent decisions: a deployed classifier with a fixed decision threshold returns the same verdict for the same input every time.

When a machine learning model is trained to recognize a specific violation, such as a counterfeit Gucci logo or a phishing email template, it applies that rule uniformly across millions of cases. This consistency is critical for Seller Trust. On e-commerce marketplaces, uneven policy enforcement, where one seller is penalized while a competitor with a similar violation is overlooked, is a leading cause of complaints and platform churn.

Furthermore, Etsy’s machine learning teams use variance-reduction techniques like “control variates” so that the metrics used to evaluate moderation models are stable over time rather than noisy, helping policy managers forecast the impact of new rules more reliably.
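For readers unfamiliar with the technique, the sketch below shows the textbook control-variates estimator in Python; it is not Etsy’s implementation. The assumed setup: `y` is a noisy metric measured on a sample (for instance, human review outcomes), and `x` is a correlated signal whose true mean is already known (for instance, a model score computed over the full population). Subtracting the sample’s deviation in `x` removes much of the noise in `y`.

```python
import random
import statistics


def control_variate_estimate(y: list[float], x: list[float], x_mean: float) -> float:
    """Estimate E[Y] using a correlated control variable X with known mean.

    theta = mean(y) - c * (mean(x) - x_mean), where c = Cov(X, Y) / Var(X)
    is the variance-minimizing coefficient, estimated from the sample.
    """
    mx, my = statistics.fmean(x), statistics.fmean(y)
    n = len(x)
    cov_xy = sum((a - mx) * (b - my) for a, b in zip(x, y)) / (n - 1)
    var_x = sum((a - mx) ** 2 for a in x) / (n - 1)
    c = cov_xy / var_x
    return my - c * (mx - x_mean)


def simulate(trials: int = 500, n: int = 100, seed: int = 0) -> tuple[float, float]:
    """Spread of the plain sample mean vs. the control-variate estimate."""
    rng = random.Random(seed)
    naive, adjusted = [], []
    for _ in range(trials):
        x = [rng.random() for _ in range(n)]        # control with known mean 0.5
        y = [xi + rng.gauss(0, 0.1) for xi in x]    # noisy metric correlated with x
        naive.append(statistics.fmean(y))
        adjusted.append(control_variate_estimate(y, x, 0.5))
    return statistics.variance(naive), statistics.variance(adjusted)


var_naive, var_cv = simulate()
print(f"plain mean variance: {var_naive:.6f}  control-variate variance: {var_cv:.6f}")
```

The simulation uses synthetic data; how much variance is removed depends on how strongly `x` and `y` are correlated.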

Cost-Effectiveness and ROI

From a budget perspective, the cost gap between AI and human moderation is staggering: according to Zefr’s 2025 research, manual moderation is approximately 40 times more expensive than MLLM solutions[4].

To illustrate further, the table below compares estimated costs for brand safety classification per content unit:

Table 1: Comparison of Moderation Cost and Performance (2025 Data)[4]

| Method | Estimated Cost (USD/unit) | F1 Score | Relative Cost (vs. Human) |
|---|---|---|---|
| Human (Expert) | $10.00 | 0.95 – 0.98 | 1.0x |
| GPT-4o | $0.83 | 0.84 | ~0.08x |
| Gemini-2.0-Flash | $0.34 | 0.88 | ~0.03x |
| GPT-4o-mini | $0.05 | 0.80 | ~0.005x |

Data from the table shows that using compact models like GPT-4o-mini can reduce costs to just 5 cents for a workload that would cost a human 10 USD to perform. For platforms processing millions of reviews or images daily, OPEX (Operating Expense) savings can reach tens of millions of dollars annually. Simultaneously, ROI is indirectly boosted by reducing product approval wait times, getting goods to buyers faster[5].
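The relative cost column is simply each method’s per-unit cost divided by the human baseline. A short Python check using the Table 1 figures (the dictionary below only restates the table):

```python
# Per-unit cost estimates restated from Table 1 (USD per content unit).
COSTS = {
    "Human (Expert)": 10.00,
    "GPT-4o": 0.83,
    "Gemini-2.0-Flash": 0.34,
    "GPT-4o-mini": 0.05,
}


def relative_cost(method: str, baseline: str = "Human (Expert)") -> float:
    """Per-unit cost of `method` as a fraction of the baseline's cost."""
    return COSTS[method] / COSTS[baseline]


for name in COSTS:
    factor = relative_cost(name)
    print(f"{name:<17} {factor:.3f}x  (human costs {1 / factor:.0f}x as much)")
```

Inverting the same ratios shows how many times more an expert human review costs than each model.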

Improvement via Machine Learning over Time (Learning Loops)

Unlike human teams, which must be retrained whenever staff turn over, AI systems accumulate knowledge. Through active learning and Human-in-the-Loop review, AI models improve progressively.

Etsy is a prime example of this process. It uses a “Champion vs. Challenger” model: a new model (the Challenger) is run in parallel or on a small slice of traffic, and it is officially deployed only when it achieves better Precision and Recall than the current model (the Champion) on human-labeled data. Every time a human corrects an AI error, such as wrongly flagging a handmade product as counterfeit, that example is fed back into the training set, helping the AI avoid repeating the mistake.
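The promotion gate described above can be sketched in a few lines of Python. The rule used here (promote only if the challenger is no worse on either precision or recall and strictly better on at least one) is a hypothetical illustration, not Etsy’s documented criterion, and the labels are toy data standing in for human annotations.

```python
from dataclasses import dataclass


@dataclass
class Scores:
    precision: float
    recall: float


def evaluate(predictions: list[bool], labels: list[bool]) -> Scores:
    """Precision and recall of 'violation' predictions against human labels."""
    pairs = list(zip(predictions, labels))
    tp = sum(1 for pred, truth in pairs if pred and truth)
    fp = sum(1 for pred, truth in pairs if pred and not truth)
    fn = sum(1 for pred, truth in pairs if truth and not pred)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return Scores(precision, recall)


def should_promote(champion: Scores, challenger: Scores) -> bool:
    """Hypothetical gate: no worse on either metric, strictly better on one."""
    no_worse = (challenger.precision >= champion.precision
                and challenger.recall >= champion.recall)
    better = (challenger.precision > champion.precision
              or challenger.recall > champion.recall)
    return no_worse and better


# Toy data: human labels act as ground truth for both models.
labels = [True, True, False, False, True, False]
champion_preds = [True, False, True, False, True, False]
challenger_preds = [True, True, False, False, True, False]

print(should_promote(evaluate(champion_preds, labels),
                     evaluate(challenger_preds, labels)))
```

In the feedback loop the text describes, each human correction would also be added to the labeled set, so the next challenger is both trained and judged on it.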

Reducing Mental Health Impact on Moderator Teams

From an ethical and HR management standpoint, AI acts as a “psychological firewall.” Content moderation often involves exposure to toxic material, including child sexual abuse material (CSAM), violence, and hate speech. Medical studies from 2024 to 2025 confirm that moderators are at high risk of Post-Traumatic Stress Disorder (PTSD) and vicarious trauma.

By using AI as the first line of defense, platforms can automatically block and delete the most obviously toxic content, for example by hash-matching uploads against databases of known CSAM, without human eyes ever seeing it. For “gray area” content that requires human review, AI can reduce exposure by blurring images, muting audio, or converting images into descriptive text. This practice is a vital standard for protecting worker well-being, as recommended by the Trust & Safety Professional Association (TSPA).
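Hash matching usually relies on perceptual hashes, which stay stable under small edits such as resizing or brightness changes, rather than cryptographic hashes, which change completely if a single byte changes. Production systems use specialized algorithms such as Microsoft’s PhotoDNA or Meta’s open-source PDQ. The Python sketch below uses the much simpler average hash (aHash) to show the principle, assuming the image has already been decoded and downscaled to 8×8 grayscale; the `max_distance` threshold of 5 bits is an arbitrary illustrative value.

```python
def average_hash(pixels: list[list[int]]) -> int:
    """Average hash (aHash) of a small grayscale image, e.g. 8x8 = 64 bits.

    Each bit is 1 if that pixel is brighter than the image's mean brightness.
    """
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for value in flat:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


def matches_known(h: int, known_hashes: set[int], max_distance: int = 5) -> bool:
    """True if h is within max_distance bits of any hash on the blocklist."""
    return any(hamming(h, known) <= max_distance for known in known_hashes)


# Toy 8x8 "images": a gradient, a brighter copy, and an inverted version.
original = [[(r * 8 + c) * 4 for c in range(8)] for r in range(8)]
brighter = [[value + 10 for value in row] for row in original]
inverted = [[252 - value for value in row] for row in original]

blocklist = {average_hash(original)}
print(matches_known(average_hash(brighter), blocklist))
print(matches_known(average_hash(inverted), blocklist))
```

A uniform brightness change leaves the hash unchanged, while an unrelated image lands far away in Hamming distance.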

Human Content Moderation: Vital, Irreplaceable Advantages

While AI excels in speed and cost, humans still hold the “gold standard” for accuracy, especially in complex situations requiring deep understanding. In brand safety tests, humans achieved F1 scores of up to 0.98, well above the best current AI models at approximately 0.88 to 0.91[4]. Here is why the human element remains the core of any content moderation framework.

Nuance and Contextual Understanding

AI, even the most advanced LLMs, often struggles with “contextual blindness.” Machines can detect a keyword or an object but often fail to understand why it is there. For example, AI might flag a medical educational video about breast cancer as “nudity,” or a news report about a protest as “promoting violence.” Humans have an innate ability to distinguish between a violation and a discussion about a violation.

The biggest weakness of AI lies in detecting sarcasm and slang. A product review stating, “Great, this vase arrived in a million pieces after a 3-week wait,” might be classified as “Positive” by keyword-driven sentiment analysis tools because of the word “Great,” while a human reader immediately recognizes the bitter irony. This nuance is why businesses cannot abandon human moderation. In e-commerce, misinterpreting such reviews not only skews product rating data but also leads recommendation algorithms to promote poor-quality products to other users. Furthermore, internet language changes daily. Slang terms like “sick,” “bomb,” or “slay” can be positive or negative depending entirely on subculture context, which models trained on static data struggle to catch.
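The failure is easy to reproduce with a deliberately naive, keyword-based sentiment scorer. This is a toy illustration, not how any particular commercial tool works, and the word lists are made up.

```python
import string

# Made-up sentiment lexicons for illustration only.
POSITIVE = {"great", "love", "excellent", "perfect"}
NEGATIVE = {"terrible", "awful", "broken", "damaged"}


def keyword_sentiment(text: str) -> str:
    """Naive lexicon scorer: positive keyword count minus negative keyword count."""
    words = [w.strip(string.punctuation).lower() for w in text.split()]
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "Positive"
    if score < 0:
        return "Negative"
    return "Neutral"


review = "Great, this vase arrived in a million pieces after a 3-week wait"
print(keyword_sentiment(review))  # prints "Positive"
```

Nothing in “a million pieces” matches the negative list, so the single word “Great” decides the label, which is exactly the blind spot a human reader does not have.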

Cultural Awareness and Localized Decision Making

E-commerce in 2025 is a global playground, and content moderation must navigate a minefield of cultural norms. Swimwear advertising considered acceptable in Brazil might be viewed as indecent in some Middle Eastern countries. AI models, which are often trained primarily on Western, English-language data, frequently exhibit cultural bias.

Research shows AI performs poorly for low-resource languages and specific dialects. For instance, automated tools have failed to distinguish between hate speech and “reclaimed language” within minority communities, or have mistakenly flagged traditional cultural symbols as offensive due to a lack of diverse training data. A classic example is when top AI models incorrectly flagged a Japanese video discussing caffeine addiction as “drug abuse,” whereas a human easily identified it as health-related content. Native moderators provide “cultural intelligence,” helping platforms avoid brand-damaging blunders that automated tools might miss.

Empathy and Fairness in Complex Cases

Trust & Safety is not just about deleting bad content; it is about fairness. When a seller appeals an account lockout or a buyer disputes a refund, the decision requires weighing conflicting evidence and applying a “reasonable person standard.” AI completely lacks empathy and equitable discretion.

In cases of harassment or bullying, the harm is often subjective and cumulative. A single comment might be harmless, but as part of an organized harassment campaign, it becomes toxic. Humans are far better at connecting these disparate data points to understand the emotional weight of interactions. Furthermore, regulations like the EU’s Digital Services Act (DSA) require platforms to give users a statement of reasons for moderation decisions and access to a transparent appeal process, and decisions on those appeals may not be taken solely by automated means. Humans are essential for drafting thoughtful responses that explain why an enforcement action was taken, helping maintain trust even when a user is penalized.

Adaptability to New Trends and Threats

Scammers, counterfeiters, and online trolls often evolve faster than an AI model’s training cycle. “Zero-day” attacks in content moderation occur when bad actors find new ways to bypass filters, such as “algospeak” (for example, “unalive” instead of “kill”) or sophisticated logo-masking techniques in product images. Ordinary users also adopt coded terms, such as “le dollar bean” for “lesbian,” to keep legitimate content from being over-moderated, so blunt keyword filters risk punishing innocent speech as well.

Retraining an AI model means collecting new data, labeling it, and fine-tuning, a process that can take weeks; human teams, by contrast, can adapt almost instantly. When a new fraud trend is identified, policy managers can issue a “BOLO” (Be On the Lookout) notice to staff, who can begin enforcing the new standard within the same shift. This flexibility is the final safeguard against viral fraud waves before they cause massive damage.
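One possible way to turn a BOLO into an enforceable rule within the same shift is a small, human-maintained lexicon that normalizes newly spotted coded terms before existing policy rules run. The sketch below assumes a hypothetical `ALGOSPEAK` mapping and rewrites only whole-word matches, case-insensitively.

```python
import re

# Hypothetical, moderator-maintained lexicon: newly spotted coded variants
# mapped to the canonical term that existing policy rules already handle.
ALGOSPEAK = {"unalive": "kill"}


def normalize(text: str, lexicon: dict[str, str]) -> str:
    """Replace known coded variants with their canonical term (whole words only)."""
    for variant, canonical in lexicon.items():
        pattern = rf"\b{re.escape(variant)}\b"
        # A function replacement avoids backslash escapes in `canonical`.
        text = re.sub(pattern, lambda _match: canonical, text, flags=re.IGNORECASE)
    return text


print(normalize("The villain tried to Unalive the hero", ALGOSPEAK))
```

Because the lexicon is data rather than model weights, moderators can extend it immediately, while the underlying model can be retrained on the same examples later.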

Building Community Trust through Personal Interaction

In an era saturated with “AI slop” (low-quality, mass-produced AI-generated content) and automated interactions, the “human touch” becomes a premium competitive advantage. A Salesforce study indicates that 80% of consumers believe it is crucial for humans to validate AI outputs.

When a user reports a serious issue, such as a safety-compromising product or a severe harassment incident, receiving a soulless automated response can exacerbate frustration and erode trust. Knowing that a real human has reviewed their case validates the user’s concerns and proves the platform truly values their safety. This is particularly important for building long-term brand loyalty.

Why the Hybrid Model is the Winning Strategy

The consensus among industry experts, including leaders from Zefr, Pinterest, Etsy, and TSPA, is clear: You cannot rely solely on AI or solely on humans. The winning strategy for 2025 is the Hybrid Moderation Model, often operated as a “Human-in-the-Loop” (HITL) workflow. This model combines the strengths of AI and human moderation while mitigating the weaknesses of each.

Best of Both Worlds: Speed + Judgment

The hybrid model acts like an intelligent filtering funnel. AI serves as the filter at the mouth of the funnel, processing high volumes at high speed and handling the majority of clear-cut decisions. Humans operate at the neck of the funnel, focusing cognitive resources on edge cases.

Confidence Threshold Architecture:

The core mechanism of this system is the Confidence Score:

- High Confidence (Auto-Action): If the AI model predicts a violation with >95% confidence, such as blatant spam or known CSAM hashes, the system automatically deletes the content immediately[6].

- High Confidence (Auto-Approve): If the model predicts safety with >95% confidence, the content is published immediately, ensuring a smooth user experience[6].

- The Gray Area (Human Review): If the model’s confidence falls below both auto-action thresholds (for example, it is only 60-90% confident either way), or if the content triggers specific high-risk flags, it is routed to a human moderator’s queue[6].

This architecture ensures that human effort is not wasted on simple tasks that AI can handle more cheaply, but is reserved for nuanced decisions where human accuracy is worth the higher cost.
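The three-way routing above can be sketched as a single function. This Python version assumes the model outputs a calibrated probability `p_violation` and uses the 95% thresholds from the text; giving high-risk flags priority over auto-approval (but not over auto-removal) is an assumption about ordering, not a documented rule.

```python
AUTO_REMOVE_CONFIDENCE = 0.95   # violation confidence needed to delete automatically
AUTO_APPROVE_CONFIDENCE = 0.95  # safety confidence needed to publish automatically


def route(p_violation: float, high_risk_flag: bool = False) -> str:
    """Route one item given the model's (assumed calibrated) violation probability."""
    if p_violation > AUTO_REMOVE_CONFIDENCE:
        return "auto_remove"
    if high_risk_flag:
        return "human_review"       # high-risk content is never auto-approved
    if 1.0 - p_violation > AUTO_APPROVE_CONFIDENCE:
        return "auto_approve"
    return "human_review"           # gray area between the two thresholds


for p, flag in [(0.99, False), (0.02, False), (0.70, False), (0.02, True)]:
    print(p, flag, route(p, flag))
```

Raising either threshold sends more items to humans (higher cost, fewer automated mistakes); lowering it automates more volume at the risk of more false positives and negatives.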

Industry Standard and Market Evidence

The hybrid model has become the de facto standard for leading platforms. They don’t just pick one; they orchestrate AI vs human content moderation solutions to work in tandem, treating every human decision as a learning signal to upgrade AI accuracy:

- Etsy: Uses a supervised learning system where human annotations create the “ground truth” for training.

- Pinterest: Deploys a “real-time radar” to report violation prevalence, combining machine learning with human sampling to verify accuracy.

- Amazon: Utilizes the “Amazon Augmented AI” (A2I) workflow, clearly defining the handoff between computer vision and human review teams based on confidence thresholds.

- Zefr: Research concluded that a hybrid human-AI approach is the most effective and economical path to ensure brand safety in complex environments.
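The “human sampling” idea can be illustrated with a basic prevalence estimate: draw a random sample of content, have humans label it, and report the violating share with a confidence interval. The Python sketch below uses the Wilson score interval; it is a generic statistical example, not Pinterest’s actual methodology, and the sample counts are invented.

```python
import math


def prevalence_with_interval(labels: list[bool],
                             z: float = 1.96) -> tuple[float, float, float]:
    """Violating share of a random, human-labeled sample plus a Wilson score
    interval (z = 1.96 corresponds to roughly 95% confidence)."""
    n = len(labels)
    p = sum(labels) / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return p, center - half_width, center + half_width


# Invented example: 12 violations found in 1,000 randomly sampled items.
sample = [True] * 12 + [False] * 988
estimate, low, high = prevalence_with_interval(sample)
print(f"prevalence {estimate:.2%} (95% CI {low:.2%} to {high:.2%})")
```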

Cost Efficiency and Sustainability

The hybrid approach optimizes the Total Cost of Ownership (TCO) for Trust & Safety operations. Relying only on humans is financially unsustainable, while relying only on AI creates technical debt in the form of false positives and false negatives.

By automating 80-90% of the workload, platforms significantly reduce staffing needs. However, by keeping humans in the loop for the remaining 10-20%, they protect the platform’s reputation and provide training data. This creates a sustainable loop where human decisions become training data, allowing AI to handle more volume over time.

Choosing the Right Moderation Method for Your Business

Implementing a strategy is not “one-size-fits-all.” For startups, relying heavily on off-the-shelf AI tools is efficient. However, for large enterprises, investing in fine-tuned AI models alongside a dedicated in-house human team is essential to protect GMV and reduce false positives.

Start with Hybrid if Expanding Globally

For businesses planning to expand into international markets in 2025, starting with a hybrid infrastructure is mandatory.

- Localization: A hybrid strategy allows you to deploy AI for broad signals while hiring native-speaking human experts to review content that depends on local language and cultural context.

- Regulatory Compliance: Global expansion means exposure to diverse regulations such as GDPR or DSA. Many of these mandate human oversight. A hybrid model with documented human workflows aligns with new international standards like ISO/IEC 25389:2025.

Budget Considerations and Resource Allocation

For Startups and SMEs:

- Strategy: Rely heavily on off-the-shelf AI tools like Amazon Rekognition or OpenAI APIs.

- Resource Allocation: Hire a small “Policy and Safety” group to manage AI thresholds and handle escalations. Use third-party BPO providers to scale human review as needed.

- Cost Perspective: Using smaller, optimized models can reduce AI costs to negligible levels (0.05 USD per unit).

For Large Enterprises & Marketplaces:

- Strategy: Invest in fine-tuned models trained on the company’s own proprietary data.

- Resource Allocation: Build a strong in-house Trust & Safety engineering team to manage MLOps and maintain a dedicated in-house human operations team for high-risk policy areas.

- ROI Focus: Focus on reducing “False Positives,” as mistakenly blocking a legitimate seller directly hurts GMV.

Conclusion

In 2025, the debate between “AI or Human” moderation has been settled: the answer is Integration.

The leaps in Multimodal Large Language Models (MLLMs) have completely changed the economics of content moderation, providing near-human accuracy for specific tasks at 1/40th the cost. These tools provide the speed and scalability necessary to manage the deluge of UGC. However, the final mile of trust, which is the ability to understand a sarcastic review or a complex cultural misunderstanding, remains the exclusive domain of human experts.

For e-commerce leaders, the strategic imperative is to design Resilient Hybrid Systems. These systems must use AI to clear the noise and use humans to refine the signal. By adopting standardized frameworks and balancing AI vs human content moderation solutions, businesses can build a Trust & Safety operation that serves as a true competitive advantage.

Key Takeaways for 2025 Strategy:

- Automate the Obvious: Use cost-effective AI to handle the bulk of content (80-90% or more), especially objective violations.

- Humanize the Complex: Reserve human budget for nuance, appeals, and high-risk gray areas.

- Iterate via HITL: Treat every human decision as a learning signal to continuously upgrade AI accuracy.

- Prioritize Wellness: Use AI to shield human teams from traumatic content, treating moderator mental health as a critical operational metric.

Frequently Asked Questions:

1. Is AI accurate enough to replace human moderators entirely?

Not yet. While the best AI models, such as Gemini-2.0-Flash, have reached F1 scores (a measure combining precision and recall) of roughly 0.88 to 0.91, human experts still maintain a “gold standard” of up to 0.98, particularly in brand safety tasks.

2. How prevalent are AI-generated fake reviews in 2025?

The threat is growing rapidly. For instance, in the real estate sector, AI-generated agent reviews on Zillow surged to 23.7% in 2025, a massive 558% increase since 2019. In sectors like Canadian Insurance, over 21% of reviews are now suspected to be AI-generated.

3. What is the ISO/IEC 25389:2025 standard mentioned?

Published in 2025, ISO/IEC 25389 is the first international standard dedicated to “Trust and Safety.” It provides a formal framework for organizations to manage content risks and ensure digital product safety, acting as a benchmark for compliance.

4. How does the “Hybrid Model” actually save money?

It uses a “confidence threshold” strategy. AI handles the easiest 90% of cases (costing ~$0.05/unit), while humans only review the hardest 10% (costing ~$10.00/unit). The blended cost, roughly $1.05 per unit (0.9 × $0.05 + 0.1 × $10.00), is about a tenth of an all-human team’s cost while maintaining high accuracy.
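The blended-cost arithmetic can be checked directly. The sketch below takes the Table 1 per-unit estimates and assumes, as in a typical funnel, that AI screens every item while humans review only the routed share; with a 10% human share this still comes to about $1.05 per unit, roughly a tenth of the all-human cost.

```python
AI_COST = 0.05      # USD per unit, GPT-4o-mini estimate from Table 1
HUMAN_COST = 10.00  # USD per unit, expert human estimate from Table 1


def blended_cost_per_unit(human_share: float) -> float:
    """Average cost per unit, assuming AI screens every item and humans
    review only the share of items routed to them."""
    return AI_COST + human_share * HUMAN_COST


for share in (0.10, 0.20):
    cost = blended_cost_per_unit(share)
    print(f"{share:.0%} human review: ${cost:.2f}/unit "
          f"({cost / HUMAN_COST:.1%} of an all-human team)")
```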


Sources:

- DHL Group. (2025). Jun 04, 2025: DHL’s E-Commerce Trends Report 2025: AI and social media reshaping online shopping. [online] Available at: https://group.dhl.com/en/media-relations/press-releases/2025/dhl-e-commerce-trends-report-2025.html.

- Eesel.ai. (2025). A practical guide to setting confidence thresholds for AI responses. [online] Available at: https://www.eesel.ai/blog/setting-confidence-thresholds-for-ai-responses [Accessed 20 Jan. 2026].

- Lutz-Guevara, R. (2024). AI Can’t Detect Sarcasm: Why Content Moderation Needs a Human Touch – RTInsights. [online] RTInsights. Available at: https://www.rtinsights.com/ai-cant-detect-sarcasm-why-content-moderation-needs-a-human-touch/.

- Mercari AI. (2022). Auto Content Moderation for Trust & Safety. [online] Available at: https://ai.mercari.com/en/projects/auto-content-moderation-for-trust-safety/ [Accessed 20 Jan. 2026].

- Mordor Intelligence. (2025). Content Moderation Market Size 2030 & Industry Statistics. [online] Mordor Intelligence. Available at: https://www.mordorintelligence.com/industry-reports/content-moderation-market.

- Zefr. (2025). Humans make better content cops than AI, but cost 40x more |…. [online] Available at: https://zefr.com/press/humans-make-better-content-cops-than-ai-but-cost-40x-more [Accessed 20 Jan. 2026].