AI-powered data annotation technologies that improve efficiency and accuracy are becoming essential as AI teams manage increasingly large and complex training datasets. Manual labeling alone often struggles with speed, cost, and consistency. To address this challenge, many organizations adopt AI-assisted labeling and human-in-the-loop workflows to scale annotation while maintaining data quality. In this article, DIGI-TEXX explains how AI-assisted annotation works and how human-in-the-loop workflows help ensure reliable machine learning datasets.

What Are AI-Powered Data Annotation Technologies?

AI-powered data annotation technologies refer to systems that leverage machine learning to support the labeling of training data more efficiently and consistently than purely manual processes. These technologies integrate automated tools with human oversight to produce reliable datasets for AI development.

AI-assisted annotation platforms can handle tasks such as tagging, classification, segmentation, and transcription across multiple data formats, including images, text, audio, and video.

How AI-Powered Data Annotation Technologies Differ From Manual Labeling

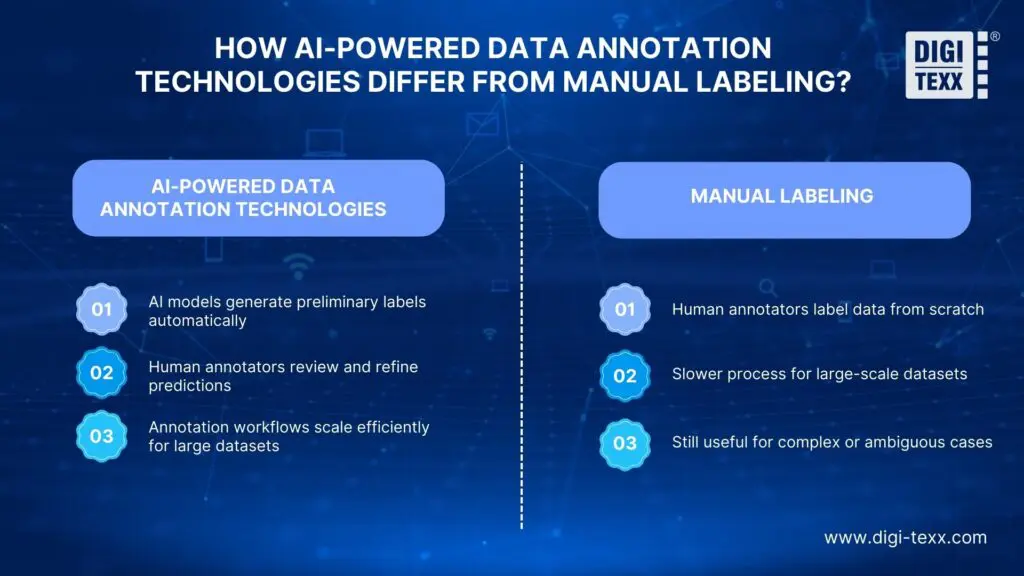

AI-powered data annotation technologies differ from manual labeling in how the process is performed. In AI-assisted workflows, the system suggests labels based on learned patterns, while human annotators review, verify, and refine them. This collaboration between automation and human expertise helps accelerate the process while maintaining data quality.

In contrast, manual labeling relies on human annotators to complete every step of the process from the beginning. As datasets grow larger, this approach becomes slower and harder to scale.

Several key differences highlight how AI-powered annotation improves the labeling process:

- AI models can automatically generate preliminary labels for repetitive patterns

- Human annotators focus on validation, edge cases, and complex scenarios

- Annotation workflows scale more efficiently when processing large datasets

- Model-driven rules help improve labeling consistency across datasets

Although manual labeling takes more time, it still plays a role when handling complex data or new tasks that require human judgment.

The Importance Of Data Annotation In Machine Learning

Data annotation plays a crucial role in machine learning because supervised learning models rely on labeled data to learn patterns and make accurate predictions. High-quality annotated datasets help AI models understand objects, relationships, and key features within the data.

Well-annotated data serves several purposes in machine learning development. It provides:

- Ground truth data used to train machine learning models.

- Reference datasets for testing and evaluating model performance.

- Structured inputs that help algorithms process data more effectively.

- Clear definitions of the objects, categories, or patterns a model should recognize.

As a result, accurate and consistent annotations help improve model learning and contribute to better performance in AI systems.

Common Types Of Data Annotated In AI Projects

AI projects often require annotation for different types of data depending on the model and application. The most commonly annotated data types include:

- Image data: used for tasks such as object detection and image segmentation.

- Video data: applied in tasks such as object tracking and activity recognition.

- Text data: commonly annotated for tasks like classification, sentiment analysis, or entity recognition.

- Audio data: used for speech transcription, speaker identification, or voice recognition systems.

- Sensor data: including data from technologies such as LiDAR or radar, often used in autonomous systems.

How AI-Powered Data Annotation Technologies Improve Efficiency And Accuracy

AI-powered data annotation technologies improve efficiency and accuracy by combining automated labeling with human validation. Through AI-assisted pre-labeling, human-in-the-loop review, and continuous quality checks, annotation workflows can scale while maintaining reliable training data.

AI-Assisted Pre-Labeling And Model Suggestions

AI-assisted pre-labeling allows models to generate initial annotations before human review. The system predicts labels such as bounding boxes, tags, or categories, giving annotators a starting point instead of labeling from scratch. Annotators review the suggestions, correct errors, and finalize the labels. This approach significantly speeds up annotation, especially for large datasets with repeated patterns.
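The pre-labeling flow described above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: `suggest_label` is a hypothetical stand-in for a trained model (here just a keyword rule), and the `corrections` dictionary is a hypothetical record of what an annotator changed during review.

```python
from typing import Dict, List

def suggest_label(text: str) -> str:
    """Toy stand-in for a trained model: a keyword rule (assumption for illustration)."""
    return "invoice" if "invoice" in text.lower() else "other"

def pre_label(items: List[str]) -> Dict[int, str]:
    """Generate a suggested label for every item before human review."""
    return {i: suggest_label(text) for i, text in enumerate(items)}

def finalize(suggestions: Dict[int, str], corrections: Dict[int, str]) -> Dict[int, str]:
    """Annotator corrections override the model's suggestions."""
    return {i: corrections.get(i, label) for i, label in suggestions.items()}

items = ["Invoice #123 from supplier", "Meeting notes", "Payment reminder for inv. 88"]
suggestions = pre_label(items)

# The annotator fixes the one item the toy rule missed.
corrections = {2: "invoice"}
final_labels = finalize(suggestions, corrections)

# Share of suggestions the annotator accepted without changes.
accepted = sum(suggestions[i] == final_labels[i] for i in suggestions)
print(f"{accepted}/{len(items)} suggestions accepted unchanged")
```

Tracking how many suggestions are accepted unchanged gives a rough sense of how much effort pre-labeling is saving on a given dataset.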

Human-In-The-Loop Annotation Workflows

Human-in-the-loop (HITL) workflows ensure humans remain responsible for final label quality while AI assists with repetitive tasks. AI typically handles high-confidence predictions, while annotators review uncertain cases and correct errors.
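One common way to split work between the model and annotators is a confidence threshold. The sketch below assumes each prediction carries a confidence score between 0 and 1; the `Prediction` class, the `route` function, and the 0.9 threshold are illustrative choices, not values taken from any specific platform.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass
class Prediction:
    item_id: str
    label: str
    confidence: float  # model score in [0, 1]

def route(
    predictions: List[Prediction], threshold: float = 0.9
) -> Tuple[List[Prediction], List[Prediction]]:
    """Split predictions into an auto-accept queue and a human-review queue."""
    auto: List[Prediction] = []
    review: List[Prediction] = []
    for p in predictions:
        # Predictions at or above the threshold skip manual review.
        (auto if p.confidence >= threshold else review).append(p)
    return auto, review

preds = [
    Prediction("img-1", "car", 0.97),
    Prediction("img-2", "pedestrian", 0.62),
    Prediction("img-3", "car", 0.91),
]
auto_accepted, needs_review = route(preds, threshold=0.9)
print([p.item_id for p in needs_review])
```

In practice the threshold would be tuned against audit results, and auto-accepted labels would still be sampled for review so that humans stay responsible for final quality.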

Quality Control And Continuous Feedback Loops

Quality control ensures annotation accuracy across datasets and teams. Many annotation pipelines use feedback loops where corrected labels help improve models. Common methods include random audits, agreement checks between annotators, automated anomaly detection, and model retraining.
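Agreement checks between annotators are often summarized with a chance-corrected score such as Cohen's kappa. The following is a minimal sketch for two annotators labeling the same items; the function name and sample labels are illustrative.

```python
from collections import Counter
from typing import Sequence

def cohens_kappa(a: Sequence[str], b: Sequence[str]) -> float:
    """Chance-corrected agreement between two annotators on the same items."""
    if len(a) != len(b) or not a:
        raise ValueError("Both annotators must label the same non-empty item list")
    n = len(a)
    # Observed agreement: share of items with identical labels.
    observed = sum(x == y for x, y in zip(a, b)) / n
    # Expected agreement if each annotator labeled at random with their own label frequencies.
    count_a, count_b = Counter(a), Counter(b)
    labels = count_a.keys() | count_b.keys()
    expected = sum(count_a[l] * count_b[l] for l in labels) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)

annotator_1 = ["cat", "cat", "dog", "dog"]
annotator_2 = ["cat", "dog", "dog", "dog"]
kappa = cohens_kappa(annotator_1, annotator_2)
print(round(kappa, 2))
```

A kappa near 1 indicates strong agreement, while a value near 0 means the annotators agree about as often as chance would predict, which usually signals that the guidelines need clarification.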

Who Uses AI-Powered Data Annotation Technologies To Improve Efficiency & Accuracy?

AI-powered data annotation technologies are widely used by organizations developing or deploying machine learning systems. These platforms support multiple roles involved in preparing, managing, and validating training data.

Data Scientists & Machine Learning Engineers

Data scientists and ML engineers use annotation systems to create training datasets and improve model performance. They focus on label quality, dataset coverage, and model feedback. Typical tasks include:

- Designing label schemas.

- Monitoring dataset drift.

- Evaluating model predictions against labeled data.

- Integrating labels into retraining pipelines.

Annotation is therefore an important part of the ML lifecycle.

Annotation Teams & Labeling Vendors

Annotation teams and labeling vendors are responsible for executing large-scale data labeling tasks. These teams often operate within specialized workflows designed to process large volumes of data while maintaining strict quality standards. They typically handle:

- High-volume data labeling.

- Quality assurance and validation.

- Domain-specific annotation such as medical or autonomous driving data.

Enterprises Building AI Products At Scale

Enterprises developing AI-driven products use annotation platforms to support production-level machine learning systems. Typical requirements include:

- Compliance-ready audit trails.

- Secure handling of sensitive data.

- Integration with internal ML infrastructure.

- Long-term dataset management.

Benefits Of AI-Powered Data Annotation Technologies For Different Industries

AI-powered annotation technologies help organizations create high-quality training data faster and at larger scale. While the core goal is improving dataset quality, the practical benefits vary across industries depending on their data types and operational needs.

Autonomous Vehicles And Computer Vision Applications

Autonomous driving and computer vision systems depend on highly detailed visual annotations. AI-assisted labeling helps process large volumes of image and video data while maintaining accuracy. Common applications include:

- Pedestrian and vehicle detection.

- Lane and road boundary segmentation.

- Annotation of rare or unusual driving events.

Healthcare Imaging And Medical AI Models

Medical AI relies on accurately labeled clinical data. AI-powered annotation tools assist experts by speeding up repetitive labeling tasks while maintaining strict quality standards. Typical use cases include:

- Tumor detection in medical images.

- Segmentation of pathology or radiology scans.

- Data preparation for clinical decision support systems.

NLP Applications Such As Chatbots And Search Engines

Natural language processing systems require labeled text data to understand language patterns. AI-assisted annotation helps manage large text datasets more efficiently. Common annotation tasks include:

- Intent classification for chatbots.

- Named entity recognition in documents.

- Sentiment or toxicity labeling.

Retail, Security, And Smart Surveillance Systems

Retail analytics and security systems often rely on visual data analysis. AI-powered annotation helps build datasets that support monitoring, detection, and behavioral analysis. Examples include:

- Product recognition in retail environments.

- Detection of suspicious or fraudulent activities.

- Threat identification in surveillance footage.

Best Practices For Ensuring High-Quality AI Data Annotation

To create high-quality annotated datasets, organizations need clear standards, quality control processes, and well-structured workflows to ensure consistent results. Implementing standardized annotation practices helps improve data reliability and enhances machine learning model performance.

Develop Clear Annotation Guidelines And Data Taxonomies

Clear annotation guidelines help annotators apply labels consistently across large datasets. Well-structured taxonomies also define how categories, labels, and relationships should be interpreted during the annotation process. Clear guidelines usually include:

- Detailed label definitions with examples.

- Instructions for handling ambiguous or edge cases.

- Hierarchical label structures and exclusions.

- Periodic updates as datasets and models evolve.
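Guidelines like these can also be encoded so that tools check them automatically. The sketch below assumes a small hypothetical taxonomy in which child labels require their parent label and certain labels exclude each other; the `PARENT` and `EXCLUSIVE` structures and the `validate` function are invented for illustration.

```python
from typing import Dict, List, Optional, Set

# Hypothetical taxonomy: child label -> parent label (None marks a root label).
PARENT: Dict[str, Optional[str]] = {
    "vehicle": None,
    "car": "vehicle",
    "truck": "vehicle",
    "person": None,
    "pedestrian": "person",
}

# Hypothetical exclusion rule: these labels may not appear on the same object.
EXCLUSIVE: List[Set[str]] = [{"car", "truck"}]

def validate(labels: Set[str]) -> List[str]:
    """Return a list of guideline violations for one annotated object."""
    errors: List[str] = []
    for label in sorted(labels):
        if label not in PARENT:
            errors.append(f"unknown label: {label}")
        elif PARENT[label] is not None and PARENT[label] not in labels:
            errors.append(f"{label} requires parent {PARENT[label]}")
    for group in EXCLUSIVE:
        clash = sorted(group & labels)
        if len(clash) > 1:
            errors.append(f"mutually exclusive: {', '.join(clash)}")
    return errors

print(validate({"car", "truck", "vehicle"}))
```

Running such checks at submission time catches schema violations before they reach the training set, and the taxonomy can be versioned alongside the written guidelines.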

Use Human Review To Handle Complex Or Edge Cases

Human review is essential when handling rare or complex data samples. While AI-assisted annotation can automate repetitive tasks, human judgment is still necessary for ambiguous or context-heavy data. Common review practices include:

- Escalation to domain specialists for difficult samples.

- Secondary reviews for sensitive or high-risk labels.

- Benchmark datasets used to measure annotation accuracy.
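Measuring annotators against a benchmark set can be as simple as comparing their labels with trusted reference labels. In this sketch, the `gold` dictionary, the item IDs, and the 0.9 escalation threshold are all hypothetical.

```python
from typing import Dict

def accuracy_against_gold(gold: Dict[str, str], submitted: Dict[str, str]) -> float:
    """Share of gold items labeled correctly; gold items left unlabeled count as wrong."""
    if not gold:
        raise ValueError("gold set is empty")
    correct = sum(submitted.get(item) == label for item, label in gold.items())
    return correct / len(gold)

gold = {"doc-1": "positive", "doc-2": "negative", "doc-3": "neutral", "doc-4": "negative"}
annotator = {"doc-1": "positive", "doc-2": "negative", "doc-3": "negative", "doc-4": "negative"}

score = accuracy_against_gold(gold, annotator)
needs_second_review = score < 0.9  # hypothetical escalation threshold
print(score, needs_second_review)
```

Benchmark items are typically mixed into regular work so that the score reflects everyday labeling behavior rather than test conditions.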

Maintain Balanced And Unbiased Training Datasets

Balanced datasets help machine learning models learn representative patterns instead of biased ones. Without proper balance, models may perform poorly in real-world situations. Useful safeguards include:

- Sampling data from diverse environments and populations.

- Regular bias checks across label categories.

- Documentation of dataset limitations and potential risks.
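A basic balance check looks at how often each label appears and flags categories that fall below a minimum share. The function names and the 10% floor below are assumptions for illustration; an appropriate floor depends on the task.

```python
from collections import Counter
from typing import Dict, List

def label_shares(labels: List[str]) -> Dict[str, float]:
    """Fraction of the dataset carried by each label."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}

def underrepresented(labels: List[str], min_share: float = 0.1) -> List[str]:
    """Labels whose share of the dataset falls below min_share."""
    return sorted(l for l, share in label_shares(labels).items() if share < min_share)

# Toy dataset of driving-scene conditions, heavily skewed toward daytime.
labels = ["day"] * 18 + ["night"] * 1 + ["rain"] * 1
flagged = underrepresented(labels, min_share=0.1)
print(flagged)
```

Label counts alone do not prove a dataset is unbiased, so a check like this is best treated as a first screen that prompts targeted data collection and documentation.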

Challenges & Risks In AI-Powered Data Annotation

Despite the advantages of AI-assisted labeling, annotation workflows can still face several challenges. Issues related to automated labels, data quality, and inconsistent annotation practices may affect the reliability of training datasets.

Relying Too Much On Automated Labels

Over-reliance on automated labels can introduce systematic errors into annotated datasets. AI models may misinterpret unfamiliar patterns or overlook rare classes, which results in incorrect annotations. Without sufficient human validation, these mistakes can spread across large datasets and negatively affect model training.
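One way to keep automated labels in check is to audit a random sample and estimate how often they are wrong. The sketch below uses only the Python standard library; the function names, the 5% audit rate, and the toy labels are illustrative assumptions.

```python
import random
from typing import Dict, List

def sample_for_audit(item_ids: List[str], rate: float, seed: int = 0) -> List[str]:
    """Pick a reproducible random subset of auto-labeled items for human audit."""
    if not 0 < rate <= 1:
        raise ValueError("rate must be in (0, 1]")
    # Audit at least one item when any exist, and never more than are available.
    k = min(len(item_ids), max(1, round(len(item_ids) * rate)))
    return random.Random(seed).sample(item_ids, k)

def audited_error_rate(auto: Dict[str, str], human: Dict[str, str]) -> float:
    """Fraction of audited items where the human label disagrees with the automated one."""
    if not human:
        raise ValueError("no audited items")
    return sum(auto[item] != label for item, label in human.items()) / len(human)

auto_labels = {f"item-{i}": "ok" for i in range(100)}
audit_ids = sample_for_audit(list(auto_labels), rate=0.05)

# Simulated audit: the reviewer disagrees with one of the sampled labels.
human_labels = {item: "ok" for item in audit_ids}
human_labels[audit_ids[0]] = "defect"
error_rate = audited_error_rate(auto_labels, human_labels)
print(len(audit_ids), error_rate)
```

If the audited error rate rises above an agreed tolerance, the team can lower the share of labels that are accepted without review until the model is retrained.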

Poor Ground Truth Data Leading To Model Drift

Incorrect or outdated ground truth data can significantly impact machine learning performance. When training datasets contain inaccurate labels or inconsistent definitions, models may learn misleading patterns. Over time, as real-world data changes and the labels are not refreshed, these issues can contribute to model drift, where the model's predictions no longer reflect real-world data.
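A simple early-warning signal, though not a complete drift test, is to compare the label distribution of the training set with that of recently labeled data. The sketch below computes the total variation distance between the two; the function name and sample data are illustrative.

```python
from collections import Counter
from typing import List

def total_variation(reference: List[str], current: List[str]) -> float:
    """Total variation distance between two label distributions, in [0, 1]."""
    if not reference or not current:
        raise ValueError("both label lists must be non-empty")
    ref, cur = Counter(reference), Counter(current)
    labels = ref.keys() | cur.keys()
    # Half the sum of absolute differences in per-label frequency.
    return 0.5 * sum(
        abs(ref[l] / len(reference) - cur[l] / len(current)) for l in labels
    )

train_labels = ["spam"] * 2 + ["ham"] * 8
recent_labels = ["spam"] * 5 + ["ham"] * 5
shift = total_variation(train_labels, recent_labels)
print(round(shift, 2))
```

A value of 0 means the two distributions match and 1 means they share no labels. A rising value suggests the training labels may be going stale and that a fresh round of annotation is worth considering.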

Inconsistent Labeling Practices Across Teams

Inconsistent labeling practices often occur when multiple annotation teams interpret guidelines differently. Variations in how labels are applied can reduce dataset reliability and create difficulties when evaluating model performance. Such inconsistencies may also introduce hidden noise into training data.
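When several annotators or teams label the same item, their labels can be consolidated by majority vote, with ties sent to an adjudicator. This is a minimal sketch; the `consolidate` function and the sample votes are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Optional

def consolidate(votes: Dict[str, List[str]]) -> Dict[str, Optional[str]]:
    """Majority label per item; None when there are no votes or the top labels tie."""
    result: Dict[str, Optional[str]] = {}
    for item, labels in votes.items():
        ranked = Counter(labels).most_common(2)
        tie = len(ranked) == 2 and ranked[0][1] == ranked[1][1]
        result[item] = None if tie or not ranked else ranked[0][0]
    return result

votes = {
    "review-1": ["toxic", "toxic", "safe"],
    "review-2": ["toxic", "safe"],
    "review-3": ["safe"],
}
final = consolidate(votes)
to_adjudicate = [item for item, label in final.items() if label is None]
print(final, to_adjudicate)
```

Items that land in the adjudication queue are also useful feedback for the guidelines, since repeated ties on similar samples usually point to an ambiguous label definition.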

FAQs About AI-Powered Data Annotation Efficiency And Accuracy

Why Is Maintaining Data Accuracy Important In AI Annotations?

Maintaining data accuracy in AI annotations is essential because machine learning models rely on labeled data to learn patterns and make predictions. If annotations contain errors or inconsistencies, the model may learn incorrect information and produce unreliable results.

Is AI Technology Always 100% Reliable?

No, AI technology is not always 100% reliable. Its performance depends largely on the quality of the training data. If the data contains bias, missing information, or inconsistencies, the model may produce inaccurate predictions. Proper data preparation, annotation quality control, and regular evaluation help improve AI reliability.

DIGI-TEXX provides practical insights into scalable data annotation and AI training data preparation, helping organizations understand how structured annotation workflows, strong quality control processes, and human-in-the-loop approaches can support more accurate machine learning systems.

With experience in large-scale data annotation and data processing operations, DIGI-TEXX works alongside enterprises developing AI solutions to improve dataset quality, enhance model performance, and support the long-term development of reliable AI applications.

DIGI-TEXX Contact Information:

🌐 Website: https://digi-texx.com/

📞 Hotline: +84 28 3715 5325

🏢 Address:

- Headquarters: Anna Building, QTSC, Trung My Tay Ward

- Office 1: German House, 33 Le Duan, Saigon Ward

- Office 2: DIGI-TEXX Building, 477-479 An Duong Vuong, Binh Phu Ward

- Office 3: Innovation Solution Center, ISC Hau Giang, 198 19 Thang 8 street, Vi Tan Ward
