In today’s digital economy, businesses rely on data to make informed decisions and improve operational efficiency. However, raw information only becomes valuable when structured and analyzed effectively. Data processing transforms scattered data into accurate, actionable insights that reduce costs, improve accuracy, and strengthen strategic planning. In this article, DIGI-TEXX explains what data processing is, why it matters, and how it creates measurable value for your business.

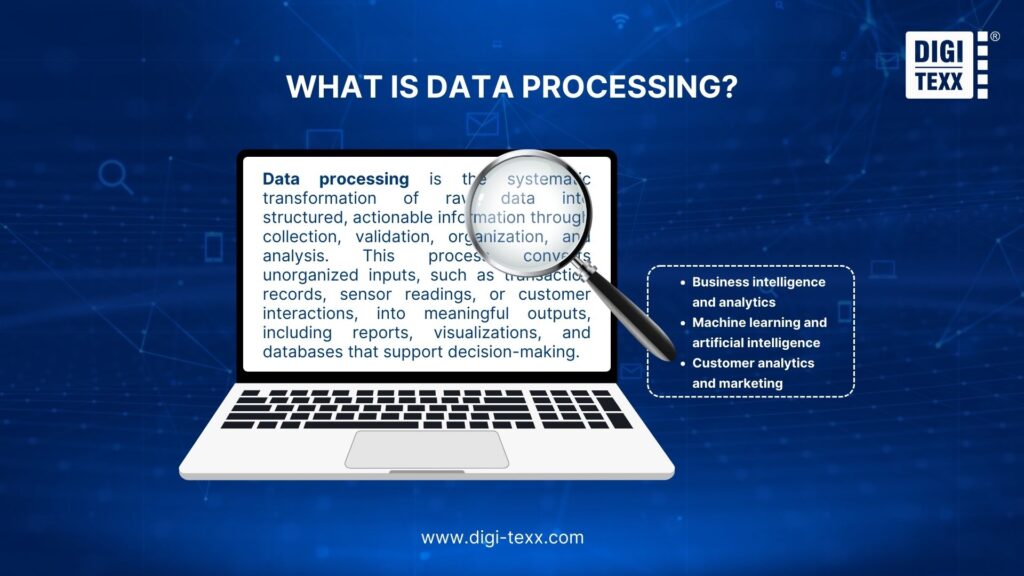

What Is Data Processing?

Data processing is the systematic transformation of raw data into structured, actionable information through collection, validation, organization, and analysis. This process converts unorganized inputs, such as transaction records, sensor readings, or customer interactions, into meaningful outputs, including reports, visualizations, and databases that support decision-making.

Modern data processing operates through automated computer systems executing millions of operations per second. Organizations process data to extract insights, identify patterns, and generate intelligence that drives business strategy.

Data processing serves critical functions across:

- Business intelligence and analytics: Transforming operational metrics into strategic insights

- Machine learning and artificial intelligence: Preparing training datasets for predictive algorithms

- Customer analytics and marketing: Converting interaction data into behavior patterns

- Scientific research: Processing experimental observations for statistical analysis

- Supply chain optimization: Analyzing logistics data to improve efficiency

- IoT and sensor networks: Managing real-time data streams from connected devices

- Financial analysis: Processing transaction data for compliance and forecasting

The evolution from manual ledgers to electronic systems reduced processing time from weeks to milliseconds. This acceleration enables organizations to analyze billions of data points daily, supporting real-time decision-making and advanced analytics that were impossible with manual methods.

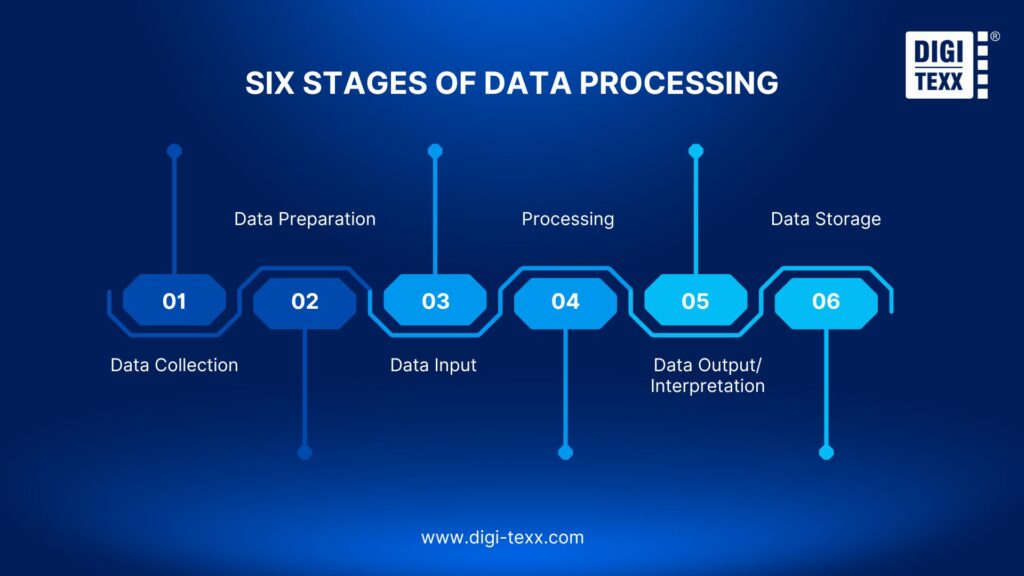

Six Stages Of Data Processing

Data processing transforms raw inputs into actionable intelligence through six interconnected stages. Each stage builds systematically from collection to storage while maintaining accuracy and usability.

1. Data Collection

Data collection is the initial phase, in which raw data is gathered from a variety of sources, including databases, sensors, and customer surveys. The collected information must be accurate, complete, and relevant to the objectives of the analysis. Selection bias, which occurs when a collection method unintentionally favors particular outcomes or groups, can skew results and lead to incorrect conclusions.

2. Data Preparation

In this stage, raw data is cleaned, sorted, and enhanced. Data enrichment may also be applied by adding relevant external data, ensuring the dataset is comprehensive and of high quality.
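As a rough illustration of the cleaning, sorting, and enrichment described above, the following Python sketch normalizes records, drops incomplete rows and duplicates, and adds a region from a lookup table. The field names, the sample records, and the `REGION_BY_COUNTRY` table are hypothetical and chosen only for illustration.

```python
# Hypothetical raw records; field names are illustrative assumptions.
RAW_RECORDS = [
    {"email": " Ana@Example.com ", "country": "vn", "amount": "120.50"},
    {"email": "ana@example.com", "country": "VN", "amount": "120.50"},  # duplicate
    {"email": "", "country": "DE", "amount": "75"},                      # missing email
    {"email": "li@example.com", "country": "de", "amount": "n/a"},      # bad amount
    {"email": "bo@example.com", "country": "DE", "amount": "40"},
]

# Hypothetical external lookup used for enrichment.
REGION_BY_COUNTRY = {"VN": "APAC", "DE": "EMEA"}


def prepare(records):
    """Clean, de-duplicate, enrich, and sort raw records."""
    seen = set()
    cleaned = []
    for rec in records:
        email = rec["email"].strip().lower()
        country = rec["country"].strip().upper()
        try:
            amount = float(rec["amount"])
        except ValueError:
            continue  # drop rows whose amount is not numeric
        if not email:
            continue  # drop rows missing a required field
        key = (email, country, amount)
        if key in seen:
            continue  # drop exact duplicates after normalization
        seen.add(key)
        cleaned.append({
            "email": email,
            "country": country,
            "amount": amount,
            "region": REGION_BY_COUNTRY.get(country, "UNKNOWN"),  # enrichment
        })
    return sorted(cleaned, key=lambda r: r["email"])


prepared = prepare(RAW_RECORDS)
```

In this sketch, two of the five sample rows survive: the duplicate, the row without an email, and the row with a non-numeric amount are discarded.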

3. Data Input

In the data input stage, the cleaned and prepared data is fed into a processing system, such as software or an algorithm designed for a particular kind of data or analysis objective. Data can be entered through manual entry, import from external sources, or automatic data capture.

4. Processing

During the processing stage, the input data is transformed, analyzed, and organized to produce relevant information. Common methods include filtering, sorting, aggregation, and classification; the choice depends on the intended results or insights.
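The four methods named in this stage can be sketched in a few lines of Python. This is only a minimal example under stated assumptions: the `ORDERS` data, the field names, and the `high_value_threshold` parameter are hypothetical.

```python
from collections import defaultdict

# Hypothetical prepared input.
ORDERS = [
    {"customer": "ana", "amount": 120.5},
    {"customer": "bo", "amount": 40.0},
    {"customer": "ana", "amount": 15.0},
    {"customer": "cy", "amount": 0.0},
    {"customer": "bo", "amount": 310.0},
]


def process(orders, high_value_threshold=200.0):
    # Filtering: keep only orders with a positive amount.
    valid = [o for o in orders if o["amount"] > 0]

    # Aggregation: total spend per customer.
    totals = defaultdict(float)
    for o in valid:
        totals[o["customer"]] += o["amount"]

    # Classification: label each customer by total spend.
    summary = [
        {
            "customer": customer,
            "total": total,
            "tier": "high" if total >= high_value_threshold else "standard",
        }
        for customer, total in totals.items()
    ]

    # Sorting: largest total first.
    return sorted(summary, key=lambda row: row["total"], reverse=True)


summary = process(ORDERS)
```

Which of these steps a real pipeline needs, and in what order, depends on the insight being sought; the order here is just one plausible arrangement.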

5. Data Output/Interpretation

The data output and interpretation stage focuses on presenting the processed data in an understandable form. This may involve creating reports, graphs, or visualizations that support decision-making by making complex data patterns easier to read. The output should also be interpreted and examined to derive useful insights.

6. Data Storage

The processed data is then safely saved in databases or data warehouses for later use, analysis, or retrieval during the data storage stage. While preserving data security and privacy, appropriate storage guarantees data longevity, availability, and accessibility.
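To make the storage and later retrieval step concrete, here is a small sketch using Python's built-in `sqlite3` module. The table name `customer_totals` and its columns are assumptions made for this example, and an in-memory database is used only to keep it self-contained.

```python
import sqlite3


def store_and_query(rows):
    """Persist processed rows, then read them back in sorted order."""
    # ":memory:" keeps the example self-contained; a real system would
    # use a file path or a database server instead.
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute(
            "CREATE TABLE customer_totals ("
            "customer TEXT PRIMARY KEY, total REAL NOT NULL)"
        )
        # Parameterized inserts avoid building SQL from raw strings.
        conn.executemany(
            "INSERT INTO customer_totals (customer, total) VALUES (?, ?)",
            rows,
        )
        conn.commit()
        cursor = conn.execute(
            "SELECT customer, total FROM customer_totals ORDER BY total DESC"
        )
        return cursor.fetchall()
    finally:
        conn.close()


stored = store_and_query([("ana", 135.5), ("bo", 350.0)])
```

The primary key and `NOT NULL` constraint are simple examples of how a storage layer can help protect data integrity; access control and encryption would be handled separately.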

Why Is Data Processing Important?

Data processing transforms raw information into strategic assets that drive business growth. Organizations that implement it effectively gain competitive advantages through faster decisions, lower costs, and higher data quality.

- Improved decision-making: Processing converts raw metrics into actionable insights, revealing patterns and correlations that guide smarter strategy.

- Operational efficiency: Automation reduces processing time from days to minutes, handling larger data volumes without proportional staff increases.

- Data quality: Structured workflows can reduce human error by as much as 85-95%, with automated validation catching inconsistencies and duplicates that manual review misses.

- Security & compliance: Processing systems enforce access controls, encryption, and audit trails automatically, verifying adherence to GDPR, HIPAA, and other regulations.

- Advanced technology: Clean, structured data is the foundation for AI and machine learning, enabling predictive analytics, NLP, and computer vision applications.

- Cost reduction: Efficient processing can cut infrastructure costs by 30-50% through cloud scalability and reduced manual labor.

Types Of Data Processing

Organizations employ different data processing methods based on their specific needs, timing requirements, and data volumes. Each approach offers distinct advantages for particular use cases, from handling massive datasets to providing instant responses. Understanding these 12 types helps businesses select the right processing strategy for their operations.

Commercial Data Processing

Commercial data processing focuses on managing data for business operations and decision-making. Widely used in industries such as retail, banking, and logistics, it handles transactional data such as sales, purchases, and payments, manages customer information within CRM systems, and tracks inventory. This type of processing often involves data integration, where data from different sources is combined, and data mining to uncover useful patterns for strategic planning.

Scientific Data Processing

Scientific data processing focuses on data produced by research and experimentation, such as experimental observations and measurements. It prepares this data for statistical analysis, typically with an emphasis on precision and reproducibility, and often involves large datasets and complex calculations.

Batch Data Processing

Batch processing is perfect for non-time-sensitive tasks because it handles large amounts of data collectively at predefined times. By combining and processing data during off-peak hours, this technique helps businesses manage data effectively while reducing the impact on day-to-day operations.
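A minimal sketch of the batch idea, assuming records have already been accumulated and a scheduler (not shown) triggers the job at a predefined time. The helper names `batches` and `run_batch_job` and the sample amounts are hypothetical.

```python
from itertools import islice


def batches(iterable, size):
    """Yield successive lists of at most `size` items."""
    iterator = iter(iterable)
    while True:
        chunk = list(islice(iterator, size))
        if not chunk:
            return
        yield chunk


def run_batch_job(records, batch_size=3):
    """Process accumulated records batch by batch; return per-batch totals."""
    return [sum(batch) for batch in batches(records, batch_size)]


# Hypothetical amounts collected during the day and processed together later.
accumulated = [10, 20, 30, 40, 50, 60, 70]
batch_totals = run_batch_job(accumulated)
```

Processing in fixed-size chunks keeps memory use predictable even when the accumulated volume is large.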

Online Data Processing

Online processing makes it easier to process data interactively over a network, allowing for continuous input and output for immediate results. It is a crucial part of online services and e-commerce because it allows systems to respond to user requests instantly.

Real-time Data Processing

For tasks that need to handle data as soon as it is received, real-time processing is crucial because it allows for instant processing and feedback. For applications where delays are unacceptable, this kind of processing is essential for making decisions and responding promptly.
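In contrast to batch processing, a real-time system acts on each item the moment it arrives. The sketch below is an illustration only: a Python generator stands in for a live feed, and the `limit` value and sample readings are assumptions.

```python
def monitor(readings, limit=80.0):
    """Handle each reading as it arrives and yield an alert immediately."""
    count = 0
    mean = 0.0
    for value in readings:
        count += 1
        mean += (value - mean) / count  # incremental (running) mean
        if value > limit:
            yield {"reading": value, "running_mean": round(mean, 2)}


def sensor_feed():
    """Stand-in for a live data source in this sketch."""
    yield from [72.0, 75.0, 91.0, 70.0, 85.0]


alerts = list(monitor(sensor_feed()))
```

Because the running mean is updated incrementally, the function never needs to hold the full history in memory, which is what allows it to keep pace with an unbounded stream.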

Multiprocessing (Parallel Processing)

Using multiple processing units, or CPUs, to handle multiple tasks at once is known as multiprocessing or parallel processing. This method speeds up overall processing time by enabling more effective data processing, especially for complicated calculations that can be divided into smaller, concurrent tasks.

Manual Data Processing

Human intervention is necessary for the input, processing, and output of data in manual data processing, usually without the use of electronic devices. Although this time-consuming approach is prone to mistakes, it was widely used prior to the development of computerized systems.

Mechanical Data Processing

Before the digital age, mechanical data processing was a common technique for managing and processing data tasks using machines or equipment. This method involved the input, processing, and output of data using physical, mechanical devices.

Electronic Data Processing

Computers and digital technology are used in electronic data processing to accurately and efficiently process, store, and transmit data. Fast processing speeds, large storage capacities, and simple data retrieval are made possible by this contemporary method of data handling.

Distributed Processing

To increase processing speed and dependability, distributed processing divides computational tasks among several computers or devices. By utilizing the combined strength of multiple systems, this technique manages complex processing jobs more effectively than a single computer could.
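The divide-and-combine pattern behind distributed processing can be sketched as follows. This is a single-process simulation, not a real cluster: each "worker" call would run on a separate machine in practice, and the function names and sample lines are hypothetical.

```python
from collections import Counter
from functools import reduce


def partition(items, n_workers):
    """Split the workload into roughly equal parts, one per worker."""
    return [items[i::n_workers] for i in range(n_workers)]


def worker_count_words(lines):
    """Work a single node would do on its own partition."""
    counts = Counter()
    for line in lines:
        counts.update(line.lower().split())
    return counts


def distributed_word_count(lines, n_workers=3):
    # In a real cluster each call below would run on a different machine;
    # here the "workers" run one after another in a single process.
    partial_results = [
        worker_count_words(part) for part in partition(lines, n_workers)
    ]
    # Combine the partial results into one final answer.
    return reduce(lambda a, b: a + b, partial_results, Counter())


LINES = ["data drives decisions", "clean data matters", "decisions need data"]
word_counts = distributed_word_count(LINES)
```

Because each partition is processed independently, the work can be spread across machines, and a failed partition can be retried without redoing the others.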

Cloud Computing

Through the internet, cloud computing provides scalable and flexible computing resources like servers, storage, and databases. This model relieves users of the responsibility of maintaining physical infrastructure by allowing them to access and use computing resources as needed.

Automatic Data Processing

By automating repetitive tasks with software, automatic data processing lowers the need for human input and boosts operational effectiveness. The automatic data processing approach reduces human error, expedites repetitive tasks, and frees up staff for more strategic work.

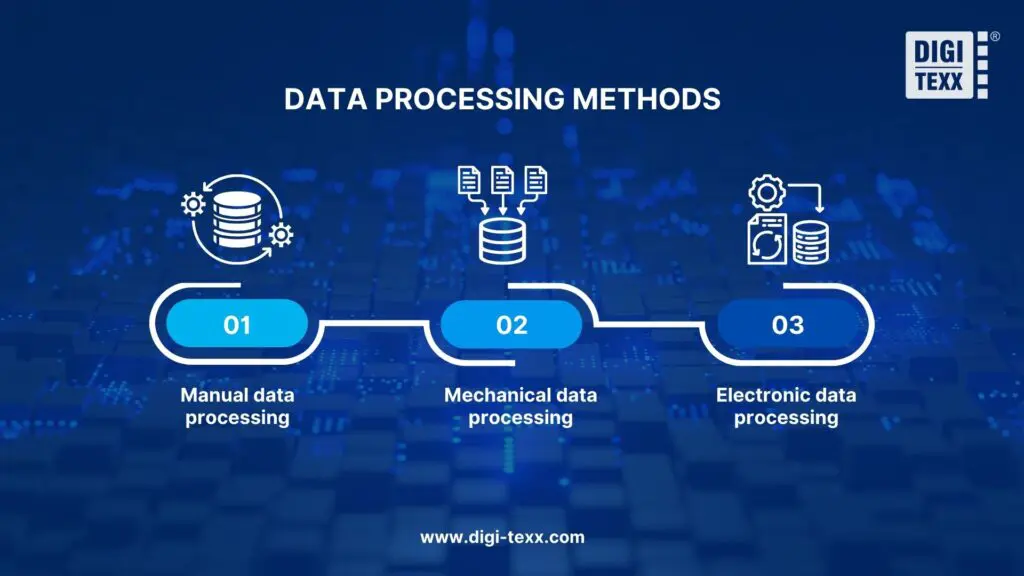

Data Processing Methods

Organizations choose data processing methods based on data volume, accuracy requirements, and available resources. The three approaches are manual, mechanical, and electronic. They reflect the shift from human-dependent processes to full automation, and each has distinct strengths and limitations.

1. Manual data processing

Manual data processing relies on human effort for data entry, calculation, and analysis. Workers use paper forms, calculators, and basic tools to process information.

This method suits small data volumes and specialized tasks requiring human judgment. Manual processing remains common in small businesses, specific compliance scenarios, and situations where automation costs exceed benefits. The approach is time-consuming, error-prone, and difficult to scale.

2. Mechanical data processing

Mechanical data processing uses physical machines to handle data operations. Historical systems included typewriters, calculators, and punch card machines.

Modern mechanical processing includes barcode scanners, card readers, and automated sorting equipment. Organizations use mechanical methods for data capture and basic processing tasks. The approach offers faster processing than manual methods but lacks the flexibility of electronic systems.

3. Electronic data processing

Electronic data processing (EDP) uses computers and software to handle all processing stages. Systems automate collection, validation, calculation, storage, and output generation.

EDP handles complex operations at high speeds with minimal errors. Organizations process millions of records daily using databases, analytics platforms, and cloud services. Electronic processing enables advanced analytics, machine learning, and real-time decision-making. This method dominates modern data processing across all industries.

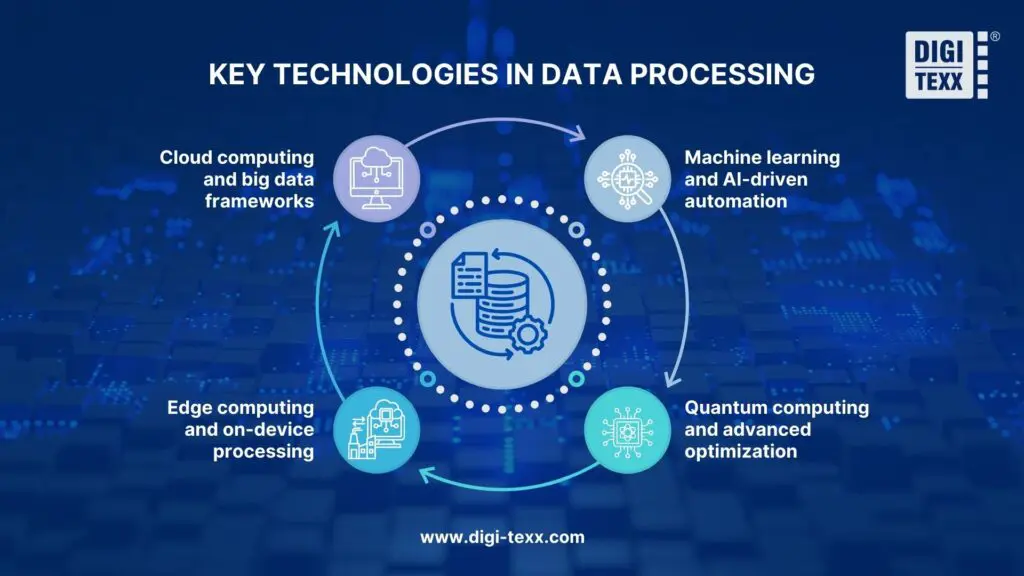

Key Technologies In Data Processing

Modern data processing relies on a diverse ecosystem of technologies that enable organizations to handle increasingly complex and voluminous data. From cloud-based platforms to emerging quantum systems, these technologies provide the foundation for efficient data operations. Understanding these key technologies helps organizations build robust processing infrastructures suited to their specific requirements.

1. Cloud Computing And Big Data Frameworks

Cloud platforms provide scalable infrastructure for data processing. Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform offer managed services for data storage, processing, and analytics.

Big data frameworks handle datasets exceeding traditional database capacities. Apache Hadoop processes petabytes of data across distributed clusters. Apache Spark performs computations in memory and can run some workloads, particularly iterative algorithms, up to 100 times faster than Hadoop MapReduce. Organizations use these technologies to analyze customer behavior, optimize operations, and extract insights from massive datasets.

2. Machine Learning And AI-Driven Automation

Machine learning algorithms automate data analysis and pattern recognition. Systems learn from historical data to predict outcomes, classify information, and detect anomalies without explicit programming.

TensorFlow, PyTorch, and scikit-learn enable organizations to build predictive models. AutoML platforms automate model selection, training, and optimization. AI-driven systems process unstructured data including images, text, and audio. Organizations apply machine learning to customer segmentation, fraud detection, and demand forecasting.

3. Edge Computing And On-Device Processing

Edge computing processes data near its source rather than in centralized data centers. IoT devices, smartphones, and local servers analyze data on-site, reducing latency and bandwidth requirements.

This technology enables real-time responses in autonomous vehicles, smart manufacturing, and healthcare monitoring. Edge processing handles time-sensitive operations requiring millisecond response times. Organizations use edge computing to maintain operations during network disruptions and ensure data privacy by keeping sensitive information local.
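One way to picture the bandwidth savings is a device that summarizes raw samples locally and sends only the summary upstream. The following sketch assumes hypothetical temperature samples and a hypothetical anomaly threshold; the transmission step itself is left out.

```python
def summarize_on_device(samples, threshold):
    """Reduce raw samples to a compact summary before sending upstream."""
    return {
        "count": len(samples),
        "min": min(samples),
        "max": max(samples),
        "mean": sum(samples) / len(samples),
        # Only readings above the threshold are kept in full.
        "anomalies": [s for s in samples if s > threshold],
    }


# Hypothetical readings collected locally over a short window.
window = [20, 21, 35, 20]
payload = summarize_on_device(window, threshold=30)
```

The raw readings never leave the device in this design, which is also how edge processing can help keep sensitive information local.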

4. Quantum Computing And Advanced Optimization

Quantum computers use the principles of quantum mechanics to tackle complex problems such as optimization. For certain classes of problems, quantum algorithms can in theory run exponentially faster than the best known classical methods.

Organizations explore quantum computing for drug discovery, financial modeling, and cryptography. Current quantum systems handle specific problem types, including optimization, simulation, and machine learning tasks. The technology remains experimental but promises revolutionary advances in processing speed for complex calculations.

FAQs About Data Processing

What Do You Mean By Data Processing?

Data processing is the process of converting raw data into meaningful information through steps like collection, validation, organization, and analysis.

What Are The 4 Methods Of Data Processing?

The 4 primary methods of data processing are:

- Manual Data Processing: Human operators manually input, process, and output data without electronic assistance. While flexible and suitable for small datasets, this method is time-consuming and prone to human error.

- Batch Processing: Large volumes of data are collected and processed together at scheduled intervals during off-peak hours. This method is cost-effective for non-urgent tasks and reduces system strain on business operations.

- Online Processing: Data is processed interactively in real-time as users input information. This method is essential for e-commerce platforms, banking systems, and customer-facing applications requiring immediate responses.

- Real-time Processing: Data is processed instantly as soon as it arrives from various sources such as sensors, IoT devices, or transaction systems. This method is critical for applications requiring immediate action, such as fraud detection, emergency alerts, and autonomous systems.

What Are The Best Tools For Data Processing?

Several industry-leading tools are widely used for data processing across different organizational needs:

- Apache Hadoop: A distributed computing framework ideal for processing large-scale datasets across multiple servers, particularly suited for batch processing and big data applications.

- Apache Spark: An advanced in-memory processing engine that offers faster processing speeds than Hadoop, supporting real-time and batch processing, machine learning, and graph processing.

- Python (Pandas, NumPy): Popular programming libraries for data manipulation, analysis, and numerical computing, favored by data scientists for their simplicity and extensive functionality.

- SQL Databases: Relational database management systems like MySQL, PostgreSQL, and Oracle enable efficient querying, sorting, and aggregation of structured data.

- Microsoft Excel: A widely used spreadsheet tool suitable for small to medium-sized data processing tasks, data analysis, and creating visual reports.

What Are Examples Of Data Processing?

Here are examples of data processing:

- Stock market operations: Trading platforms match buy and sell orders, update prices in real-time, and log transactions to maintain market transparency.

- Manufacturing quality assurance: Sensors collect production data, which algorithms analyze to detect defects and ensure product quality.

- Home automation systems: Smart devices process sensor data and user inputs to control thermostats, lights, and security systems automatically.

- Patient information management: Healthcare facilities use digital records to store medical history, test results, and treatment plans for efficient patient care.

Data processing is no longer optional; it’s essential for competitive success. Organizations that implement effective data processing strategies gain faster decision-making, improved efficiency, better data quality, and a foundation for advanced AI and machine learning capabilities.

Whether you’re handling customer data, optimizing operations, or driving innovation, proper data processing transforms information into a strategic advantage. DIGI-TEXX specializes in comprehensive data analytics and processing solutions designed for organizations seeking to extract maximum value from their data assets. Our expert team combines industry best practices with cutting-edge technology to deliver results that drive measurable business impact.

If you have any questions or would like expert advice on data analytics services, please feel free to contact us using the information below.

DIGI-TEXX Contact Information:

🌐 Website: https://digi-texx.com/

📞 Hotline: +84 28 3715 5325

✉️ Email: [email protected]

🏢 Address:

- Headquarters: Anna Building, QTSC, Trung My Tay Ward

- Office 1: German House, 33 Le Duan, Saigon Ward

- Office 2: DIGI-TEXX Building, 477-479 An Duong Vuong, Binh Phu Ward

- Office 3: Innovation Solution Center, ISC Hau Giang, 198 19 Thang 8 street, Vi Tan Ward
